Traffic dropped for identity not found

Is there an existing issue for this?

- [X] I have searched the existing issues

What happened?

When running `cilium monitor --related-to 429 -t policy-verdict` to monitor a certain endpoint, I see some requests being denied:

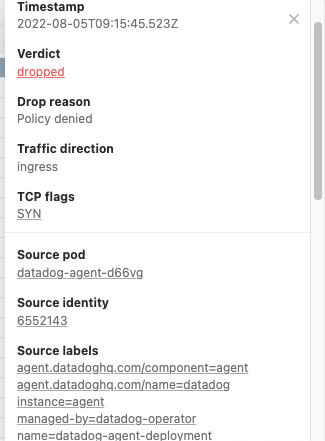

Policy verdict log: flow 0x88362923 local EP ID 429, remote ID 6552143, proto 6, ingress, action deny, match none, 10.210.107.97:38764 -> 10.210.120.103:8080 tcp SYN

Policy verdict log: flow 0x34c4a2e6 local EP ID 429, remote ID 6552143, proto 6, ingress, action deny, match none, 10.210.107.97:38764 -> 10.210.120.103:8080 tcp SYN

But when I try to check which identity 6552143 is with `cilium identity get 6552143`, I get:

Error: Cannot get identity for given ID 6552143: [GET /identity/{id}][404] getIdentityIdNotFound

The same request shows as dropped in Hubble UI with the same identity. Since the identity is not found, I can't write a policy to allow this traffic.

Cilium Version

Client: 1.12.0 9447cd1 2022-07-19T12:22:00+02:00 go version go1.18.4 linux/amd64 Daemon: 1.12.0 9447cd1 2022-07-19T12:22:00+02:00 go version go1.18.4 linux/amd64

Kernel Version

Linux ip-10-210-119-39.eu-west-1.compute.internal 5.4.204-113.362.amzn2.x86_64 #1 SMP Wed Jul 13 21:34:30 UTC 2022 x86_64 x86_64 x86_64 GNU/Linux

Kubernetes Version

Server Version: version.Info{Major:"1", Minor:"22+", GitVersion:"v1.22.11-eks-18ef993", GitCommit:"b9628d6d3867ffd84c704af0befd31c7451cdc37", GitTreeState:"clean", BuildDate:"2022-07-06T18:06:23Z", GoVersion:"go1.16.15", Compiler:"gc", Platform:"linux/amd64"}

Sysdump

https://drive.google.com/file/d/13EFmujbyu8ljLOvWnrNvlf2PPnfAURQT/view?usp=sharing

Relevant log output

No response

Anything else?

No response

Code of Conduct

- [X] I agree to follow this project's Code of Conduct

@carloscastrojumo Thanks for the issue. Could you run cilium bpf ipcache get 10.210.120.103 from cilium-agent's pod which is running on the node which runs the 10.210.107.97 pod?

Hi @brb

This cluster does not exist anymore, but here is output from another cluster where I was able to reproduce the issue.

Policy verdict log: flow 0xca1265fd local EP ID 3985, remote ID 7232340, proto 17, ingress, action deny, match none, 10.210.112.76:58919 -> 10.210.98.180:8125 udp

Policy verdict log: flow 0xca1265fd local EP ID 3985, remote ID 7232340, proto 17, ingress, action deny, match none, 10.210.112.76:58919 -> 10.210.98.180:8125 udp

cilium identity get 7232340

Error: Cannot get identity for given ID 7232340: [GET /identity/{id}][404] getIdentityIdNotFound

Running `cilium bpf ipcache get 10.210.98.180` from the cilium-agent pod on the node that runs the 10.210.112.76 pod:

root@ip-10-210-97-208:/home/cilium# cilium bpf ipcache get 10.210.98.180

10.210.98.180 maps to identity identity=15602669 encryptkey=0 tunnelendpoint=0.0.0.0

root@ip-10-210-97-208:/home/cilium# cilium bpf ipcache get 10.210.112.76

10.210.112.76 maps to identity identity=15620948 encryptkey=0 tunnelendpoint=0.0.0.0

Cilium Sysdump from this new cluster cilium-sysdump-20220809-091256.zip

@carloscastrojumo Are both 10.210.112.76 and 10.210.98.180 in the same cluster (we are seeing that you use clustermesh)?

Yes, same cluster. In fact, same node. 10.210.98.180 is a pod running with host port.

For info, we've spotted this too.

It seems somewhere along the path the left-most bit of the cluster ID is dropped (could be left-most 2 bits, I haven't had a chance to try a cluster with an ID that sets both).

Our boiled-down scenario is:

- Cilium 1.10

- cluster id > 128

- 2 pods of a deployment on the same node

- ingress policy allowing ingress from pods of the deployment

- curl from one pod to the other is denied

- the identity reported in the policy verdict is lacking the 8th bit of the cluster id

- the clusters I've tried on are not part of a mesh

cilium monitor -t trace --related-to for each endpoint shows:

# Client

root@ip-10-128-58-130:/home/cilium# cilium monitor --related-to 652

Press Ctrl-C to quit

level=info msg="Initializing dissection cache..." subsys=monitor

<- endpoint 652 flow 0x13d2e528 identity 12399018->unknown state new ifindex 0 orig-ip 0.0.0.0: 10.130.89.216:41360 -> 10.130.86.238:80 tcp SYN

-> stack flow 0x13d2e528 identity 12399018->12399018 state new ifindex 0 orig-ip 0.0.0.0: 10.130.89.216:41360 -> 10.130.86.238:80 tcp SYN

# Server

root@ip-10-128-58-130:/home/cilium# cilium monitor --related-to 2133

Press Ctrl-C to quit

level=info msg="Initializing dissection cache..." subsys=monitor

<- stack flow 0x13d2e528 identity 4010410->unknown state new ifindex lxc367ef851a52e orig-ip 0.0.0.0: 10.130.89.216:41360 -> 10.130.86.238:80 tcp SYN

Policy verdict log: flow 0x13d2e528 local EP ID 2133, remote ID 4010410, proto 6, ingress, action deny, match none, 10.130.89.216:41360 -> 10.130.86.238:80 tcp SYN

xx drop (Policy denied) flow 0x13d2e528 to endpoint 2133, identity 4010410->12399018: 10.130.89.216:41360 -> 10.130.86.238:80 tcp SYN

The identity goes from 12399018 (good) to 4010410 (bad):

0000 0000 1011 1101 0011 0001 1010 1010: 12399018
0000 0000 0011 1101 0011 0001 1010 1010: 4010410
          ^- dropped bit
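To double-check the bit comparison above, here is a minimal Python sketch. It assumes, as this thread suggests, that the cluster ID occupies bits 16-23 of the identity; `split_identity` is a hypothetical helper, not a Cilium API.

```python
GOOD_IDENTITY = 12399018  # identity seen on the client side
BAD_IDENTITY = 4010410    # identity reported in the policy verdict


def split_identity(identity: int) -> tuple[int, int]:
    """Split an identity into (cluster_id, cluster_local_id).

    Assumption: the cluster ID lives in bits 16-23 of the identity.
    """
    return (identity >> 16) & 0xFF, identity & 0xFFFF


# XOR leaves only the bits that differ between the two identities.
diff = GOOD_IDENTITY ^ BAD_IDENTITY
print(f"differing bits: {diff:#x} (bit {diff.bit_length() - 1})")
print("good:", split_identity(GOOD_IDENTITY))
print("bad: ", split_identity(BAD_IDENTITY))
```

Only one bit differs, and it is the top bit of the cluster ID byte; the cluster-local part of the identity is the same in both values.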

Adding to Eric's comment, we're running cilium 1.10.13 with endpoint routes enabled.

As discussed with @aanm on Slack:

I have been doing lots of tests with different clusters. Here are 2 sysdumps for the same 1.12 single cluster (not connected to another cluster yet). The first sysdump is using Cluster ID 165, and I can see the phantom identities on cilium monitor

Policy verdict log: flow 0x3f7033e2 local EP ID 1191, remote ID 2432371, proto 17, ingress, action deny, match none, 10.210.157.246:46098 -> 10.210.135.20:8125 udp

Policy verdict log: flow 0x3f7033e2 local EP ID 1191, remote ID 2432371, proto 17, ingress, action deny, match none, 10.210.157.246:46098 -> 10.210.135.20:8125 udp

root@ip-10-210-130-48:/home/cilium# cilium identity get 2432371

Error: Cannot get identity for given ID 2432371: [GET /identity/{id}][404] getIdentityIdNotFound

The second one is from the same cluster, redeployed with Helm using cluster ID 20, after deleting the Cilium endpoint and identity CRDs and restarting the cilium-operator and cilium-agent pods. After that, I no longer see any `match none` verdicts for identities that cannot be found.

cilium-sysdump-clusterid-165.zip cilium-sysdump-clusterid-20.zip

I found this is a bug specific to the EKS ENI mode.

TLDR

iptables trace result

[ 3745.087686] TRACE: raw:CILIUM_PRE_raw:return:3 IN=lxcf6b49f5d512f OUT= MAC=06:2b:96:ba:ba:5f:0a:73:0a:93:f1:01:08:00 SRC=192.168.107.130 DST=192.168.105.232 LEN=60 TOS=0x00 PREC=0x00 TTL=255 ID=32035 DF PROTO=TCP SPT=57976 DPT=80 SEQ=356477153 ACK=0 WINDOW=62727 RES=0x00 SYN URGP=0 OPT (020423010402080AB79F650B0000000001030307) MARK=0x6b990f81

[ 3745.107683] TRACE: raw:PREROUTING:policy:2 IN=lxcf6b49f5d512f OUT= MAC=06:2b:96:ba:ba:5f:0a:73:0a:93:f1:01:08:00 SRC=192.168.107.130 DST=192.168.105.232 LEN=60 TOS=0x00 PREC=0x00 TTL=255 ID=32035 DF PROTO=TCP SPT=57976 DPT=80 SEQ=356477153 ACK=0 WINDOW=62727 RES=0x00 SYN URGP=0 OPT (020423010402080AB79F650B0000000001030307) MARK=0x6b990f81

[ 3745.128496] TRACE: mangle:PREROUTING:rule:1 IN=lxcf6b49f5d512f OUT= MAC=06:2b:96:ba:ba:5f:0a:73:0a:93:f1:01:08:00 SRC=192.168.107.130 DST=192.168.105.232 LEN=60 TOS=0x00 PREC=0x00 TTL=255 ID=32035 DF PROTO=TCP SPT=57976 DPT=80 SEQ=356477153 ACK=0 WINDOW=62727 RES=0x00 SYN URGP=0 OPT (020423010402080AB79F650B0000000001030307) MARK=0x6b990f81

[ 3745.148672] TRACE: mangle:CILIUM_PRE_mangle:rule:3 IN=lxcf6b49f5d512f OUT= MAC=06:2b:96:ba:ba:5f:0a:73:0a:93:f1:01:08:00 SRC=192.168.107.130 DST=192.168.105.232 LEN=60 TOS=0x00 PREC=0x00 TTL=255 ID=32035 DF PROTO=TCP SPT=57976 DPT=80 SEQ=356477153 ACK=0 WINDOW=62727 RES=0x00 SYN URGP=0 OPT (020423010402080AB79F650B0000000001030307) MARK=0x6b990f81

[ 3745.168986] TRACE: mangle:CILIUM_PRE_mangle:return:10 IN=lxcf6b49f5d512f OUT= MAC=06:2b:96:ba:ba:5f:0a:73:0a:93:f1:01:08:00 SRC=192.168.107.130 DST=192.168.105.232 LEN=60 TOS=0x00 PREC=0x00 TTL=255 ID=32035 DF PROTO=TCP SPT=57976 DPT=80 SEQ=356477153 ACK=0 WINDOW=62727 RES=0x00 SYN URGP=0 OPT (020423010402080AB79F650B0000000001030307) MARK=0x6b990f01 <= mark erased

- In bpf_lxc.c, Cilium encodes the security identity into the mark and passes it to the stack. The upper 8 bits of the identity are encoded into the lower 8 bits of the mark. In the trace above, that is `0x81 = 129` in `0x6b990f81`.

- Before routing, the packet hits the iptables rule `-A CILIUM_PRE_mangle -i lxc+ -m comment --comment "cilium: primary ENI" -j CONNMARK --restore-mark --nfmask 0x80 --ctmask 0x80` (introduced in https://github.com/cilium/cilium/commit/c7f9997d7001c8561583d374dcbd4d973bad6fac, v1.9-rc1).

- This restores the mark with the following calculation: `nfmark = (nfmark & ~nfmask) ^ (ctmark & ctmask)`.

- At this point, ctmark=0x0 and nfmark=0x6b990f81, so the result is `(0x6b990f81 & ~0x80) ^ (0x0 & 0x80) = 0x6b990f01`. This erases the uppermost bit of the upper 8 bits of the identity.

- The upper 8 bits of the identity encode the ClusterID. Thus, cluster IDs 128 (b10000000) to 255 (b11111111) are affected.
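The restore-mark arithmetic in the list above can be replayed in a few lines of Python. This is only a sketch of the formula as quoted in this comment; `connmark_restore` is a hypothetical name, not a kernel or iptables API.

```python
def connmark_restore(nfmark: int, ctmark: int, nfmask: int, ctmask: int) -> int:
    """Apply nfmark = (nfmark & ~nfmask) ^ (ctmark & ctmask) on 32-bit marks."""
    return ((nfmark & ~nfmask) ^ (ctmark & ctmask)) & 0xFFFFFFFF


# Values from the iptables trace: packet mark before the rule, connmark of 0.
before = 0x6B990F81
after = connmark_restore(before, ctmark=0x0, nfmask=0x80, ctmask=0x80)

print(f"before: {before:#010x} (low byte {before & 0xFF})")
print(f"after:  {after:#010x} (low byte {after & 0xFF})")
```

With a connmark of 0, the low byte of the mark goes from 129 to 1, which matches the `MARK=0x6b990f01` line in the trace.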

Full investigation log.

Setup

eksctl config (eksctl-config.yaml)

apiVersion: eksctl.io/v1alpha5
kind: ClusterConfig
metadata:
  name: yutaro-issue-20797
  region: ap-northeast-1
managedNodeGroups:
  - name: ng-1
    desiredCapacity: 1
    privateNetworking: true
    taints:
      - key: "node.cilium.io/agent-not-ready"
        value: "true"
        effect: "NoExecute"

Deployment (deploy.yaml)

apiVersion: apps/v1
kind: Deployment
metadata:
  name: netshoot-deployment
  labels:
    app: netshoot
spec:
  replicas: 2
  selector:
    matchLabels:
      app: netshoot
  template:
    metadata:
      labels:
        app: netshoot
    spec:
      containers:
        - name: netshoot
          image: nicolaka/netshoot:latest
          command: ["tail", "-f"]

Network Policy (netpol.yaml)

apiVersion: "cilium.io/v2"
kind: CiliumNetworkPolicy
metadata:
  name: "allow-ingress"
spec:
  endpointSelector:
    matchLabels:
      app: netshoot
  ingress:
    - fromEndpoints:
        - matchLabels:
            app: netshoot
      toPorts:
        - ports:
            - port: "80"
              protocol: TCP

Setup procedure

eksctl create cluster -f ./eksctl-config.yaml

cilium install --helm-set cluster.id=129 --helm-set cluster.name=test --version v1.12.0 --helm-set endpointRoutes.enabled=true

kubectl apply -f deploy.yaml

kubectl apply -f netpol.yaml

// curl from netshoot to netshoot

Investigation

- See `cilium monitor --related-to <epid>` output

// Client side

-> stack flow 0xca05a3ad , identity 8491973->8491973 state new ifindex 0 orig-ip 0.0.0.0: 192.168.179.173:44850 -> 192.168.163.239:80 tcp SYN

// Server side

Policy verdict log: flow 0xf96cf2e7 local EP ID 1607, remote ID 103365, proto 6, ingress, action deny, match none, 192.168.179.173:51670 -> 192.168.163.239:80 tcp SYN

xx drop (Policy denied) flow 0xf96cf2e7 to endpoint 1607, , identity 103365->8491973: 192.168.179.173:51670 -> 192.168.163.239:80 tcp SYN

We can see that the identity is valid before it goes to the stack, but after coming back from the stack, it is changed somehow. The identity is carried to the stack with skb->mark, thus we can see that someone overwrites the mark.

- See iptables tracing

iptables -t raw -I PREROUTING 1 -d <destination pod ip> -j TRACE

// curl from netshoot to netshoot

output

[ 3745.087686] TRACE: raw:CILIUM_PRE_raw:return:3 IN=lxcf6b49f5d512f OUT= MAC=06:2b:96:ba:ba:5f:0a:73:0a:93:f1:01:08:00 SRC=192.168.107.130 DST=192.168.105.232 LEN=60 TOS=0x00 PREC=0x00 TTL=255 ID=32035 DF PROTO=TCP SPT=57976 DPT=80 SEQ=356477153 ACK=0 WINDOW=62727 RES=0x00 SYN URGP=0 OPT (020423010402080AB79F650B0000000001030307) MARK=0x6b990f81

[ 3745.107683] TRACE: raw:PREROUTING:policy:2 IN=lxcf6b49f5d512f OUT= MAC=06:2b:96:ba:ba:5f:0a:73:0a:93:f1:01:08:00 SRC=192.168.107.130 DST=192.168.105.232 LEN=60 TOS=0x00 PREC=0x00 TTL=255 ID=32035 DF PROTO=TCP SPT=57976 DPT=80 SEQ=356477153 ACK=0 WINDOW=62727 RES=0x00 SYN URGP=0 OPT (020423010402080AB79F650B0000000001030307) MARK=0x6b990f81

[ 3745.128496] TRACE: mangle:PREROUTING:rule:1 IN=lxcf6b49f5d512f OUT= MAC=06:2b:96:ba:ba:5f:0a:73:0a:93:f1:01:08:00 SRC=192.168.107.130 DST=192.168.105.232 LEN=60 TOS=0x00 PREC=0x00 TTL=255 ID=32035 DF PROTO=TCP SPT=57976 DPT=80 SEQ=356477153 ACK=0 WINDOW=62727 RES=0x00 SYN URGP=0 OPT (020423010402080AB79F650B0000000001030307) MARK=0x6b990f81

[ 3745.148672] TRACE: mangle:CILIUM_PRE_mangle:rule:3 IN=lxcf6b49f5d512f OUT= MAC=06:2b:96:ba:ba:5f:0a:73:0a:93:f1:01:08:00 SRC=192.168.107.130 DST=192.168.105.232 LEN=60 TOS=0x00 PREC=0x00 TTL=255 ID=32035 DF PROTO=TCP SPT=57976 DPT=80 SEQ=356477153 ACK=0 WINDOW=62727 RES=0x00 SYN URGP=0 OPT (020423010402080AB79F650B0000000001030307) MARK=0x6b990f81

[ 3745.168986] TRACE: mangle:CILIUM_PRE_mangle:return:10 IN=lxcf6b49f5d512f OUT= MAC=06:2b:96:ba:ba:5f:0a:73:0a:93:f1:01:08:00 SRC=192.168.107.130 DST=192.168.105.232 LEN=60 TOS=0x00 PREC=0x00 TTL=255 ID=32035 DF PROTO=TCP SPT=57976 DPT=80 SEQ=356477153 ACK=0 WINDOW=62727 RES=0x00 SYN URGP=0 OPT (020423010402080AB79F650B0000000001030307) MARK=0x6b990f01 <= mark erased

It hits the rule mangle:CILIUM_PRE_mangle:rule:3 and returns. During that, iptables erases the mark.

See rule 3

$ iptables -t mangle -vL CILIUM_PRE_mangle 3

3076 245K CONNMARK all -- lxc+ any anywhere anywhere /* cilium: primary ENI */ CONNMARK restore mask 0x80

See connmark value

$ conntrack -L -d 192.168.163.239

tcp 6 80 SYN_SENT src=192.168.179.173 dst=192.168.163.239 sport=53602 dport=80 [UNREPLIED] src=192.168.163.239 dst=192.168.179.173 sport=80 dport=53602 mark=0 use=1

CONNMARK calculates the new mark value as (nfmark & ~nfmask) ^ (ctmark & ctmask). Substituting the values above:

(0x6b990f81 & ~0x80) ^ (0x0 & 0x80) = 0x6b990f01

When connmark is 0x0, the rule always erases the uppermost bit of the lower 8 bits of the mark. Those lower 8 bits of the mark carry the upper 8 bits of the identity, which encode the ClusterID. Thus, cluster IDs 128 (b10000000) to 255 (b11111111) are affected.

For the moment, the quick workaround would be only using 1-127 as a ClusterID. Let me think about how we can solve this.
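As a rough illustration of that workaround, the following Python sketch lists which 8-bit cluster IDs have the 0x80 bit set and would therefore be altered when the connmark is 0. `ENI_CONNMARK_BIT` and `cluster_id_survives` are hypothetical names used only for this example.

```python
ENI_CONNMARK_BIT = 0x80  # bit touched by the "cilium: primary ENI" rule


def cluster_id_survives(cluster_id: int) -> bool:
    """Return True if the cluster ID does not use the 0x80 bit."""
    if not 0 <= cluster_id <= 255:
        raise ValueError("cluster ID must fit in 8 bits")
    return (cluster_id & ENI_CONNMARK_BIT) == 0


affected = [cid for cid in range(256) if not cluster_id_survives(cid)]
print(f"affected cluster IDs: {affected[0]}..{affected[-1]} ({len(affected)} values)")
print("cluster ID 20 survives: ", cluster_id_survives(20))
print("cluster ID 165 survives:", cluster_id_survives(165))
```

Cluster IDs 20 and 165 are the two values tried earlier in this thread; only 165 falls in the affected range.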

@YutaroHayakawa, apologies: I wrote the following up while travelling today and hadn't seen your comment above at that point. I'd still like to dump my findings here, which are close (or even identical) to your own, because it took me 2 full days to get to this point (I have near zero knowledge of the Cilium codebase :D ), though we took slightly different approaches. Also, I have a proposed fix.

The following nftables rule clears bit 0x80 of the mark as it passes through (in certain cases):

table ip mangle { # handle 4
    ...
    chain CILIUM_PRE_mangle { # handle 116
        ...
        iifname "lxc*" counter packets 42077 bytes 5687540 meta mark set ct mark and 0x80 # handle 119

This is the corresponding iptables rule, which is defined in iptables.go:addCiliumENIRules():

-A CILIUM_PRE_mangle -i lxc+ -m comment --comment "cilium: primary ENI" -j CONNMARK --restore-mark --nfmask 0x80 --ctmask 0x80

In certain cases, the mark contains a "serialised" (c.f. set_identity_mark() and get_identity() BPF functions) form of the pod identity, including cluster ID, as follows:

Offset:          24        16         8         0
Identity: XXXX XXXX CCCC CCCC DDDD CCCC BBBB AAAA
          <unused > <cluster> <  pod identity   >

Serialised to
mark:     DDDD CCCC BBBB AAAA PPPP PPPP CCCC CCCC

The P bits are untouched by set_identity_mark() and are used to represent other information about the packet.

The iptables rule above performs a bitwise AND with 0x80, which in binary is 1000 0000. This results in clearing the leftmost bit of the cluster ID, at least in some cases.

I suspect the bug is simply that the mask used in the iptables rule is incorrect: it should be 0x8000 in order to target the P bits of the mark as represented above. In other words, is the iptables rule simply targeting the wrong byte, or am I being too optimistic?

I've submitted a PR to fix this: https://github.com/cilium/cilium/pull/21229 (assuming the rule is hitting the wrong byte of the mark...)

Here is a partial nftrace showing the rule flipping the wrong bit in the mark as the packet passes through:

trace id 7cf0ad14 inet filter trace_chain packet: iif "lxc367ef851a52e" ether saddr c6:06:c5:20:81:d3 ether daddr b2:79:bf:eb:ba:fa ip saddr 10.130.89.216 ip daddr 10.130.86.238 ip dscp cs0 ip ecn not-ect ip ttl 64 ip id 32909 ip protocol tcp ip length 60 tcp sport 37002 tcp dport 80 tcp flags == syn tcp window 26883

trace id 7cf0ad14 inet filter trace_chain rule ip daddr 10.130.86.238 meta nftrace set 1 (verdict continue)

trace id 7cf0ad14 inet filter trace_chain verdict continue meta mark 0x31aa0fbd

trace id 7cf0ad14 inet filter trace_chain policy accept meta mark 0x31aa0fbd

trace id 7cf0ad14 ip raw PREROUTING packet: iif "lxc367ef851a52e" ether saddr c6:06:c5:20:81:d3 ether daddr b2:79:bf:eb:ba:fa ip saddr 10.130.89.216 ip daddr 10.130.86.238 ip dscp cs0 ip ecn not-ect ip ttl 64 ip id 32909 ip length 60 tcp sport 37002 tcp dport 80 tcp flags == syn tcp window 26883

trace id 7cf0ad14 ip raw PREROUTING rule counter packets 997478 bytes 709868754 jump CILIUM_PRE_raw (verdict jump CILIUM_PRE_raw)

trace id 7cf0ad14 ip raw CILIUM_PRE_raw verdict continue meta mark 0x31aa0fbd

trace id 7cf0ad14 ip raw PREROUTING verdict continue meta mark 0x31aa0fbd

trace id 7cf0ad14 ip raw PREROUTING policy accept meta mark 0x31aa0fbd <<<<< MARK OK

trace id 7cf0ad14 ip mangle PREROUTING packet: iif "lxc367ef851a52e" ether saddr c6:06:c5:20:81:d3 ether daddr b2:79:bf:eb:ba:fa ip saddr 10.130.89.216 ip daddr 10.130.86.238 ip dscp cs0 ip ecn not-ect ip ttl 64 ip id 32909 ip length 60 tcp sport 37002 tcp dport 80 tcp flags == syn tcp window 26883

trace id 7cf0ad14 ip mangle PREROUTING rule counter packets 997478 bytes 709868754 jump CILIUM_PRE_mangle (verdict jump CILIUM_PRE_mangle)

trace id 7cf0ad14 ip mangle CILIUM_PRE_mangle rule iifname "lxc*" counter packets 32132 bytes 4340095 meta mark set ct mark and 0x80 (verdict continue)

trace id 7cf0ad14 ip mangle CILIUM_PRE_mangle verdict continue meta mark 0x31aa0f3d <<<<< MARK modified and incorrect

trace id 7cf0ad14 ip mangle PREROUTING verdict continue meta mark 0x31aa0f3d

trace id 7cf0ad14 ip mangle PREROUTING policy accept meta mark 0x31aa0f3d

...

In the trace above, the mark changes as follows:

Mark: DDDD CCCC BBBB AAAA PPPP PPPP CCCC CCCC
      0011 0001 1010 1010 0000 1111 1011 1101 0x31aa0fbd 833228733 Correct: cluster id = 189
      0011 0001 1010 1010 0000 1111 0011 1101 0x31aa0f3d 833228605 Wrong: cluster id = 61
                                    ^- cleared bit
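The table above can be reproduced with a small Python sketch of the mark layout. The two helpers are hypothetical and only follow the bit diagram in this comment (low 16 identity bits in the top half of the mark, P bits in the second-lowest byte, cluster ID in the lowest byte); they are not the actual `set_identity_mark()` and `get_identity()` BPF code.

```python
def identity_to_mark(identity: int, p_bits: int = 0) -> int:
    """Serialise an identity into a 32-bit mark, following the diagram."""
    low16 = identity & 0xFFFF          # cluster-local part of the identity
    cluster = (identity >> 16) & 0xFF  # cluster ID byte
    return (low16 << 16) | ((p_bits & 0xFF) << 8) | cluster


def mark_to_identity(mark: int) -> int:
    """Recover the identity from a mark, ignoring the P bits."""
    cluster = mark & 0xFF
    low16 = (mark >> 16) & 0xFFFF
    return (cluster << 16) | low16


# Marks taken from the nftrace: before and after the CILIUM_PRE_mangle rule.
for mark in (0x31AA0FBD, 0x31AA0F3D):
    identity = mark_to_identity(mark)
    print(f"mark={mark:#010x} identity={identity} cluster_id={identity >> 16}")
```

Decoding the two marks gives identities 12399018 and 4010410, the same pair seen in the `cilium monitor` output earlier in this thread.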

@EricMountain Thanks for your info as well!

> I suspect the bug is simply that the mask used in the iptables rule is incorrect: it should be 0x8000 in order to target the P bits of the mark as represented above. In other words, is the iptables rule simply targeting the wrong byte, or am I being too optimistic?

IIUC, the mask is not wrong; the problem is that our identity mark interferes with the ENI CNI mark. There is a mark registry here, and according to it, the ENI CNI does use the 7th bit. So the rule itself should be correct.

https://github.com/fwmark/registry

Connectivity test result for cluster-id=128

📋 Test Report

❌ 12/23 tests failed (26/114 actions), 0 tests skipped, 1 scenarios skipped:

Test [allow-all-except-world]:

❌ allow-all-except-world/pod-to-pod/curl-0: cilium-test/client-7bdbddd7b-7rrzq (192.168.22.31) -> cilium-test/echo-same-node-7894f8ffcd-vws46 (192.168.110.77:8080)

❌ allow-all-except-world/pod-to-pod/curl-1: cilium-test/client2-74f4559c78-xzg5n (192.168.101.165) -> cilium-test/echo-same-node-7894f8ffcd-vws46 (192.168.110.77:8080)

❌ allow-all-except-world/client-to-client/ping-0: cilium-test/client-7bdbddd7b-7rrzq (192.168.22.31) -> cilium-test/client2-74f4559c78-xzg5n (192.168.101.165:0)

❌ allow-all-except-world/client-to-client/ping-1: cilium-test/client2-74f4559c78-xzg5n (192.168.101.165) -> cilium-test/client-7bdbddd7b-7rrzq (192.168.22.31:0)

❌ allow-all-except-world/pod-to-service/curl-0: cilium-test/client2-74f4559c78-xzg5n (192.168.101.165) -> cilium-test/echo-same-node (echo-same-node:8080)

❌ allow-all-except-world/pod-to-service/curl-1: cilium-test/client-7bdbddd7b-7rrzq (192.168.22.31) -> cilium-test/echo-same-node (echo-same-node:8080)

Test [client-ingress]:

❌ client-ingress/client-to-client/ping-1: cilium-test/client2-74f4559c78-xzg5n (192.168.101.165) -> cilium-test/client-7bdbddd7b-7rrzq (192.168.22.31:0)

Test [echo-ingress]:

❌ echo-ingress/pod-to-pod/curl-0: cilium-test/client2-74f4559c78-xzg5n (192.168.101.165) -> cilium-test/echo-same-node-7894f8ffcd-vws46 (192.168.110.77:8080)

Test [client-ingress-icmp]:

❌ client-ingress-icmp/client-to-client/ping-1: cilium-test/client2-74f4559c78-xzg5n (192.168.101.165) -> cilium-test/client-7bdbddd7b-7rrzq (192.168.22.31:0)

Test [echo-ingress-l7]:

❌ echo-ingress-l7/pod-to-pod-with-endpoints/curl-0-public: cilium-test/client2-74f4559c78-xzg5n (192.168.101.165) -> curl-0-public (192.168.110.77:8080)

❌ echo-ingress-l7/pod-to-pod-with-endpoints/curl-0-private: cilium-test/client2-74f4559c78-xzg5n (192.168.101.165) -> curl-0-private (192.168.110.77:8080)

❌ echo-ingress-l7/pod-to-pod-with-endpoints/curl-0-privatewith-header: cilium-test/client2-74f4559c78-xzg5n (192.168.101.165) -> curl-0-privatewith-header (192.168.110.77:8080)

Test [echo-ingress-l7-named-port]:

❌ echo-ingress-l7-named-port/pod-to-pod-with-endpoints/curl-1-public: cilium-test/client2-74f4559c78-xzg5n (192.168.101.165) -> curl-1-public (192.168.110.77:8080)

❌ echo-ingress-l7-named-port/pod-to-pod-with-endpoints/curl-1-private: cilium-test/client2-74f4559c78-xzg5n (192.168.101.165) -> curl-1-private (192.168.110.77:8080)

❌ echo-ingress-l7-named-port/pod-to-pod-with-endpoints/curl-1-privatewith-header: cilium-test/client2-74f4559c78-xzg5n (192.168.101.165) -> curl-1-privatewith-header (192.168.110.77:8080)

Test [echo-ingress-from-other-client-deny]:

❌ echo-ingress-from-other-client-deny/pod-to-pod/curl-0: cilium-test/client-7bdbddd7b-7rrzq (192.168.22.31) -> cilium-test/echo-same-node-7894f8ffcd-vws46 (192.168.110.77:8080)

❌ echo-ingress-from-other-client-deny/client-to-client/ping-0: cilium-test/client-7bdbddd7b-7rrzq (192.168.22.31) -> cilium-test/client2-74f4559c78-xzg5n (192.168.101.165:0)

❌ echo-ingress-from-other-client-deny/client-to-client/ping-1: cilium-test/client2-74f4559c78-xzg5n (192.168.101.165) -> cilium-test/client-7bdbddd7b-7rrzq (192.168.22.31:0)

Test [client-ingress-from-other-client-icmp-deny]:

❌ client-ingress-from-other-client-icmp-deny/pod-to-pod/curl-0: cilium-test/client-7bdbddd7b-7rrzq (192.168.22.31) -> cilium-test/echo-same-node-7894f8ffcd-vws46 (192.168.110.77:8080)

❌ client-ingress-from-other-client-icmp-deny/pod-to-pod/curl-1: cilium-test/client2-74f4559c78-xzg5n (192.168.101.165) -> cilium-test/echo-same-node-7894f8ffcd-vws46 (192.168.110.77:8080)

❌ client-ingress-from-other-client-icmp-deny/client-to-client/ping-0: cilium-test/client-7bdbddd7b-7rrzq (192.168.22.31) -> cilium-test/client2-74f4559c78-xzg5n (192.168.101.165:0)

Test [client-egress-to-echo-deny]:

❌ client-egress-to-echo-deny/client-to-client/ping-0: cilium-test/client-7bdbddd7b-7rrzq (192.168.22.31) -> cilium-test/client2-74f4559c78-xzg5n (192.168.101.165:0)

❌ client-egress-to-echo-deny/client-to-client/ping-1: cilium-test/client2-74f4559c78-xzg5n (192.168.101.165) -> cilium-test/client-7bdbddd7b-7rrzq (192.168.22.31:0)

Test [client-ingress-to-echo-named-port-deny]:

❌ client-ingress-to-echo-named-port-deny/pod-to-pod/curl-0: cilium-test/client2-74f4559c78-xzg5n (192.168.101.165) -> cilium-test/echo-same-node-7894f8ffcd-vws46 (192.168.110.77:8080)

Test [client-egress-to-echo-expression-deny]:

❌ client-egress-to-echo-expression-deny/pod-to-pod/curl-0: cilium-test/client2-74f4559c78-xzg5n (192.168.101.165) -> cilium-test/echo-same-node-7894f8ffcd-vws46 (192.168.110.77:8080)

Test [client-egress-to-echo-service-account-deny]:

❌ client-egress-to-echo-service-account-deny/pod-to-pod/curl-0: cilium-test/client2-74f4559c78-xzg5n (192.168.101.165) -> cilium-test/echo-same-node-7894f8ffcd-vws46 (192.168.110.77:8080)

connectivity test failed: 12 tests failed

This issue has been automatically marked as stale because it has not had recent activity. It will be closed if no further activity occurs.

This issue has not seen any activity since it was marked stale. Closing.