Extremely Low Testing Accuracy

Hi,

First of all, thank you very much for sharing this work. I am trying to train and test on my own dataset. I converted my dataset to the tusimple format and completed the training. However, the test accuracy I got was extremely low, around 0.0003. I ran demo.py and the results look reasonable, so I am confused about why the reported accuracy is so low.

I used labelme to annotate the training dataset and saved the annotations as png files. For the test dataset, I manually created the json labels. Could this cause the problem? I appreciate any help.

Thank you!

@bz815 Which metric are you using for evaluation? What are the values of the top1, top2, and top3 accuracies during training?

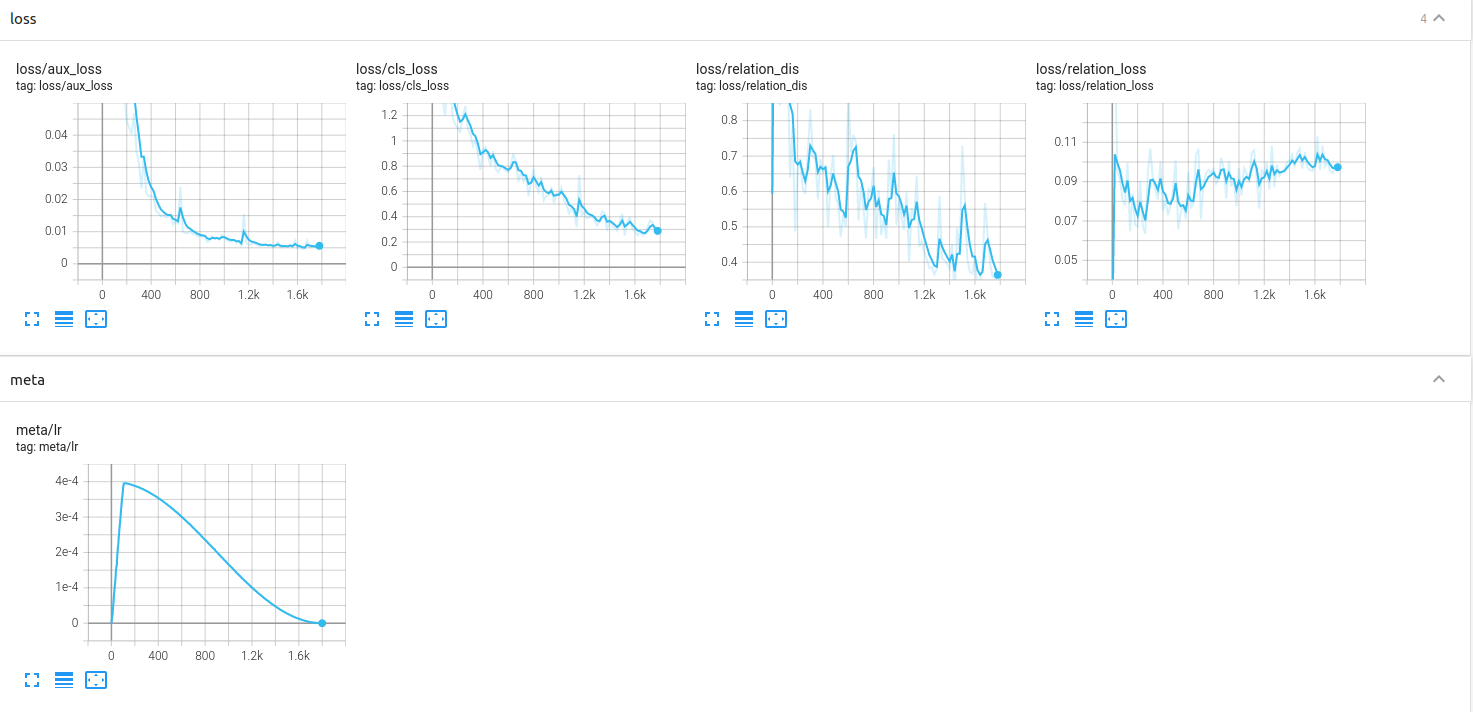

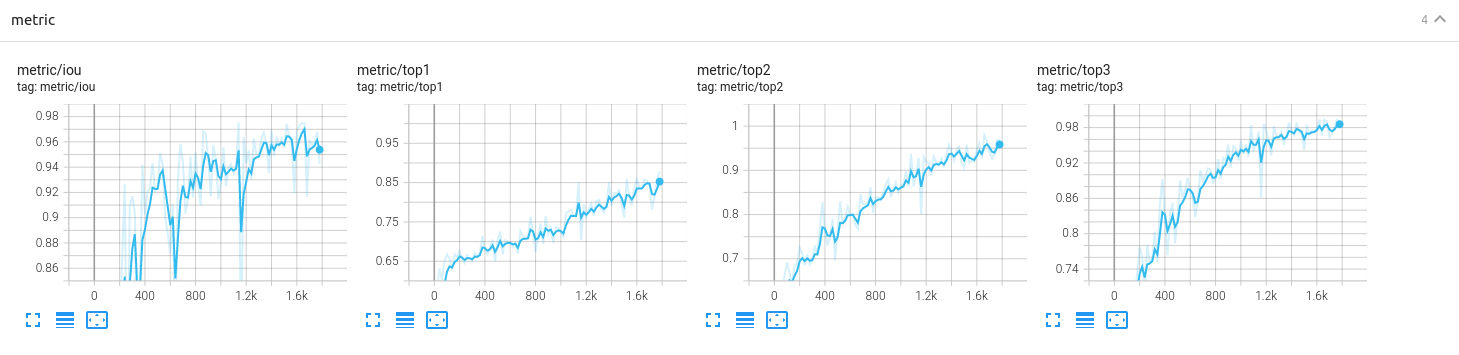

Hi, thanks for your reply. I attached the tensorboard screenshot here. What do you mean by "what metrics"? Sorry, I am pretty new to this.

@bz815 The results look pretty good, since the top3 accuracy reaches ~98%. By "what metrics" I mean: which metric does the value 0.0003 come from? Is it 0.0003 F1-measure, 0.0003 accuracy, or 0.0003 recall?

@cfzd Thanks for your reply. If my understanding is correct, it should be the accuracy metric, since I am running the tusimple testing. Since I got high accuracy during training, I am wondering if something is wrong with my test image labeling. What would be a proper way to label my test dataset? I saw that in the original dataset the test label is a json file made of discrete points. When I label my data, can I label a line that goes through the center of the lane mark, set "h_samples" to the same values as yours, and find the "x" values manually? Thank you.
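For reference, the labeling approach described above could be sketched roughly as follows. This is a minimal sketch, not the repository's own tooling: the helper names `lane_to_xs` and `make_label_line`, the `H_SAMPLES` range, and the example file path are all assumptions made for illustration. It assumes each lane is annotated as a polyline of (x, y) points along the lane center, and that rows the lane does not cover are marked with -2, as in the tusimple json labels.

```python
import json
import numpy as np

# Assumed row positions (hypothetical default); use exactly the same
# h_samples as the labels and predictions you will compare against.
H_SAMPLES = list(range(160, 720, 10))


def lane_to_xs(points, h_samples):
    """Interpolate the lane's x position at every row in h_samples.

    points: list of (x, y) pairs along the lane center line.
    Returns a list of ints, with -2 where the lane does not reach the row.
    """
    pts = sorted(points, key=lambda p: p[1])  # order by y so np.interp is valid
    ys = np.array([p[1] for p in pts], dtype=float)
    xs = np.array([p[0] for p in pts], dtype=float)
    out = []
    for h in h_samples:
        if h < ys[0] or h > ys[-1]:
            out.append(-2)  # row is outside the annotated part of the lane
        else:
            out.append(int(round(float(np.interp(h, ys, xs)))))
    return out


def make_label_line(lanes_points, h_samples, raw_file):
    """Build one json line with the keys "lanes", "h_samples", "raw_file"."""
    record = {
        "lanes": [lane_to_xs(pts, h_samples) for pts in lanes_points],
        "h_samples": list(h_samples),
        "raw_file": raw_file,
    }
    return json.dumps(record)


if __name__ == "__main__":
    # Hypothetical annotation: one lane given by three clicked points.
    lane = [(640, 300), (500, 500), (360, 700)]
    print(make_label_line([lane], H_SAMPLES, "clips/example/1.jpg"))
```

One line like this per test image, written to a single json file, gives labels in the same shape as the discrete-point annotations. The x values and h_samples must refer to the same image resolution as the predictions being evaluated.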

@bz815 Yes, you can make your labels similar to the tusimple annotations and evaluate the accuracy as in the tusimple testing.
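To sanity-check labels before running the full test, a simplified point-wise accuracy can be computed by hand. The sketch below is an assumption-laden approximation, not the official evaluation script: the function names and the fixed 20-pixel threshold are hypothetical, and the official script has further details (for example in how the threshold is chosen and how false positives and negatives are counted) that are omitted here. It assumes predictions and labels share the same h_samples.

```python
import numpy as np


def lane_accuracy(pred_xs, gt_xs, thresh=20.0):
    """Fraction of rows where the predicted x is within thresh pixels of the label.

    Rows where both prediction and label are -2 count as matches in this sketch.
    """
    pred = np.asarray(pred_xs, dtype=float)
    gt = np.asarray(gt_xs, dtype=float)
    return float(np.mean(np.abs(pred - gt) < thresh))


def image_accuracy(pred_lanes, gt_lanes, thresh=20.0):
    """Average, over labeled lanes, of the best-matching predicted lane's accuracy."""
    if not gt_lanes:
        return 0.0
    best = [
        max((lane_accuracy(p, g, thresh) for p in pred_lanes), default=0.0)
        for g in gt_lanes
    ]
    return sum(best) / len(best)


if __name__ == "__main__":
    gt = [[100, 110, 120, -2]]
    pred = [[102, 140, 121, -2]]
    print(image_accuracy(pred, gt))
```

If a check like this gives a reasonable value on a few images while the full test still reports something near zero, the mismatch is more likely in how the label file is read (for example, file paths or h_samples that differ between labels and predictions) than in the model itself.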