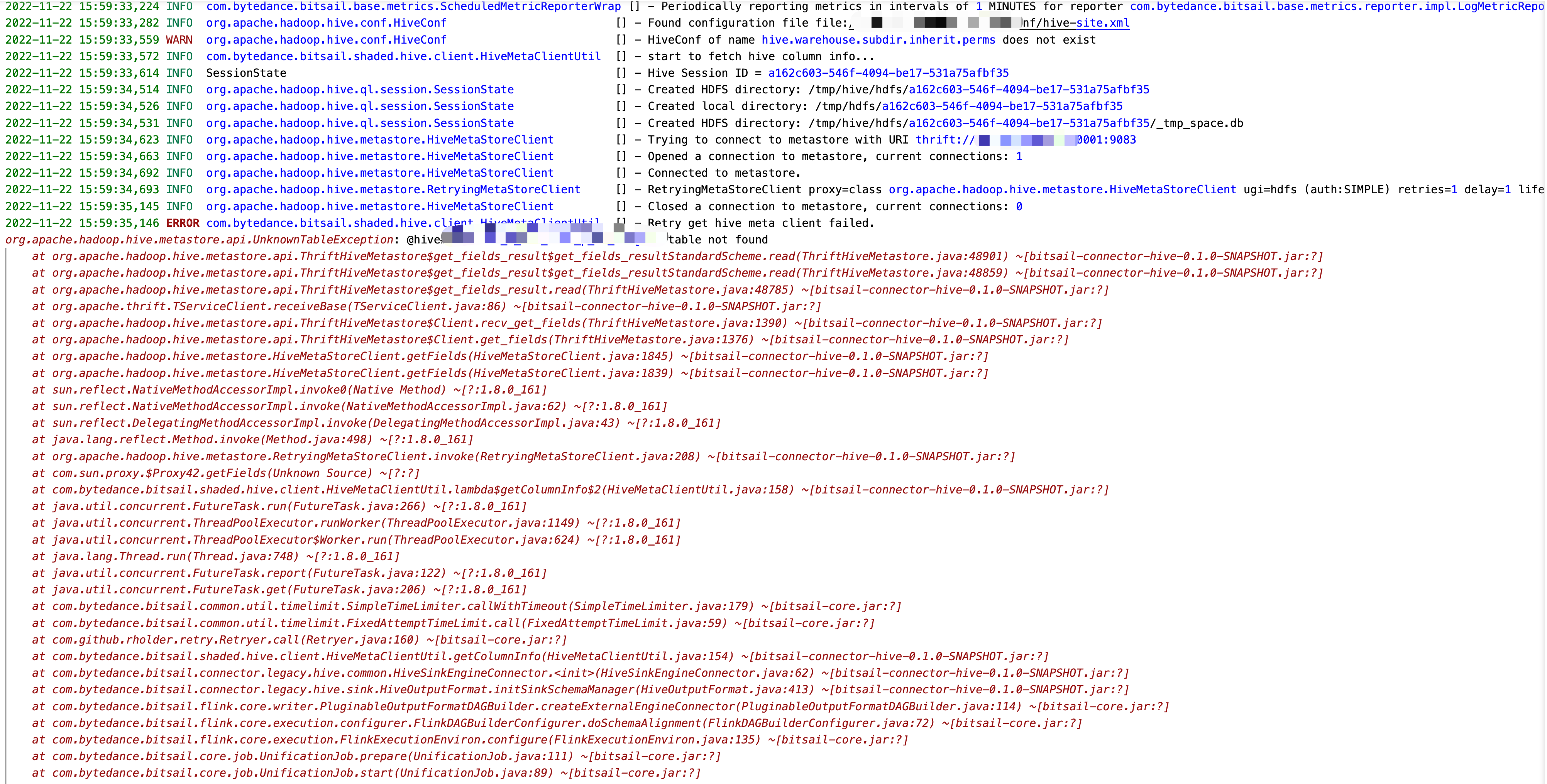

Retry get hive meta client failed.

Environment: Hive version 2.3.9

Hi @numbernumberone, did you create the Hive table before running the job?

@garyli1019 Hi, the following code runs without problems:

HiveConf hiveConf = new HiveConf();

hiveConf.set("hive.metastore.uris","thrift://*.*.*.*:9083");

HiveMetaStoreClient hiveMetaStoreClient = new HiveMetaStoreClient(hiveConf);

hiveMetaStoreClient.setMetaConf("hive.metastore.client.capability.check","false");

Table table = hiveMetaStoreClient.getTable("test", "tablename");

The exception stack trace goes through the shaded Hive classes in bitsail-shade. Could you change hive.version to 2.3.9 in the root pom file and recompile the project?

@garyli1019 Thank you. I changed hive.version to 2.3.9 in the root pom file and recompiled the project, but now I get a different error. My environment:

- flink lib list

-rw-r--r-- 1 hdfs hdfs 91041 Dec 15 2021 flink-csv-1.11.6.jar

-rw-r--r-- 1 hdfs hdfs 108946857 Dec 15 2021 flink-dist_2.11-1.11.6.jar

-rw-r--r-- 1 hdfs hdfs 95311 Dec 15 2021 flink-json-1.11.6.jar

-rwxr-xr-x 1 hdfs hdfs 41368997 Nov 23 16:01 flink-shaded-hadoop-2-uber-2.7.5-10.0.jar

-rw-r--r-- 1 hdfs hdfs 35924064 Nov 23 15:34 flink-shaded-hadoop3-uber-blink-3.6.8.jar

-rw-r--r-- 1 hdfs hdfs 7712156 Dec 15 2021 flink-shaded-zookeeper-3.4.14.jar

-rw-r--r-- 1 hdfs hdfs 33547360 Dec 15 2021 flink-table_2.11-1.11.6.jar

-rw-r--r-- 1 hdfs hdfs 37566494 Dec 15 2021 flink-table-blink_2.11-1.11.6.jar

-rw-r--r-- 1 hdfs hdfs 207909 Dec 15 2021 log4j-1.2-api-2.16.0.jar

-rw-r--r-- 1 hdfs hdfs 301892 Dec 15 2021 log4j-api-2.16.0.jar

-rw-r--r-- 1 hdfs hdfs 1789565 Dec 15 2021 log4j-core-2.16.0.jar

-rw-r--r-- 1 hdfs hdfs 24258 Dec 15 2021 log4j-slf4j-impl-2.16.0.jar

- error log

Caused by: org.apache.flink.core.fs.UnsupportedFileSystemSchemeException: Could not find a file system implementation for scheme 'hdfs'. The scheme is not directly supported by Flink and no Hadoop file system to support this scheme could be loaded. For a full list of supported file systems, please see https://ci.apache.org/projects/flink/flink-docs-stable/ops/filesystems/.

at org.apache.flink.core.fs.FileSystem.getUnguardedFileSystem(FileSystem.java:531)

at org.apache.flink.core.fs.FileSystem.get(FileSystem.java:408)

at org.apache.flink.core.fs.Path.getFileSystem(Path.java:274)

at com.bytedance.bitsail.connector.hadoop.util.HdfsUtils.lambda$checkExists$1(HdfsUtils.java:97)

at java.util.concurrent.FutureTask.run(FutureTask.java:266)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)

at java.lang.Thread.run(Thread.java:748)

at java.util.concurrent.FutureTask.report(FutureTask.java:122)

at java.util.concurrent.FutureTask.get(FutureTask.java:206)

at com.google.common.util.concurrent.SimpleTimeLimiter.callWithTimeout(SimpleTimeLimiter.java:130)

at com.github.rholder.retry.AttemptTimeLimiters$FixedAttemptTimeLimit.call(AttemptTimeLimiters.java:107)

at com.github.rholder.retry.Retryer.call(Retryer.java:160)

... 16 more

Caused by: org.apache.flink.core.fs.UnsupportedFileSystemSchemeException: Hadoop File System abstraction does not support scheme 'hdfs'. Either no file system implementation exists for that scheme, or the relevant classes are missing from the classpath.

at org.apache.flink.runtime.fs.hdfs.HadoopFsFactory.create(HadoopFsFactory.java:100)

at org.apache.flink.core.fs.FileSystem.getUnguardedFileSystem(FileSystem.java:527)

at org.apache.flink.core.fs.FileSystem.get(FileSystem.java:408)

at org.apache.flink.core.fs.Path.getFileSystem(Path.java:274)

at com.bytedance.bitsail.connector.hadoop.util.HdfsUtils.lambda$checkExists$1(HdfsUtils.java:97)

at java.util.concurrent.FutureTask.run(FutureTask.java:266)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)

... 1 more

Caused by: java.io.IOException: No FileSystem for scheme: hdfs

at org.apache.hadoop.fs.FileSystem.getFileSystemClass(FileSystem.java:2658)

at org.apache.flink.runtime.fs.hdfs.HadoopFsFactory.create(HadoopFsFactory.java:98)

... 8 more
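The innermost cause, `No FileSystem for scheme: hdfs`, is raised by Hadoop when no implementation is registered for the scheme. Hadoop resolves a scheme either from an `fs.<scheme>.impl` configuration key or from `META-INF/services/org.apache.hadoop.fs.FileSystem` entries in the jars on the classpath, so one way to narrow this down is to check which jars in the Flink lib directory declare `org.apache.hadoop.hdfs.DistributedFileSystem`. The sketch below uses only the JDK; the class name `HdfsServiceScan` and the default directory `/opt/flink/lib` are hypothetical and not part of BitSail or Flink.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.InputStreamReader;
import java.nio.charset.StandardCharsets;
import java.nio.file.DirectoryStream;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.List;
import java.util.jar.JarFile;
import java.util.zip.ZipEntry;

// Hypothetical diagnostic helper, not part of BitSail or Flink.
public class HdfsServiceScan {
    // Service file that Hadoop reads to discover FileSystem implementations.
    public static final String SERVICE_ENTRY =
            "META-INF/services/org.apache.hadoop.fs.FileSystem";
    // Implementation class that backs the 'hdfs' scheme.
    public static final String HDFS_IMPL =
            "org.apache.hadoop.hdfs.DistributedFileSystem";

    /** Returns true if the jar's service file lists the HDFS implementation. */
    public static boolean declaresHdfs(Path jar) throws IOException {
        try (JarFile jarFile = new JarFile(jar.toFile())) {
            ZipEntry entry = jarFile.getEntry(SERVICE_ENTRY);
            if (entry == null) {
                return false; // jar registers no Hadoop file systems at all
            }
            try (BufferedReader reader = new BufferedReader(new InputStreamReader(
                    jarFile.getInputStream(entry), StandardCharsets.UTF_8))) {
                String line;
                while ((line = reader.readLine()) != null) {
                    if (line.trim().equals(HDFS_IMPL)) {
                        return true;
                    }
                }
            }
            return false;
        }
    }

    /** Lists the jars directly inside libDir that register the HDFS implementation. */
    public static List<Path> jarsDeclaringHdfs(Path libDir) throws IOException {
        List<Path> hits = new ArrayList<>();
        try (DirectoryStream<Path> jars = Files.newDirectoryStream(libDir, "*.jar")) {
            for (Path jar : jars) {
                if (declaresHdfs(jar)) {
                    hits.add(jar);
                }
            }
        }
        return hits;
    }

    public static void main(String[] args) throws IOException {
        // The default path is an assumption; pass the real Flink lib directory as an argument.
        Path libDir = Paths.get(args.length > 0 ? args[0] : "/opt/flink/lib");
        if (!Files.isDirectory(libDir)) {
            System.out.println("Not a directory: " + libDir);
            return;
        }
        List<Path> hits = jarsDeclaringHdfs(libDir);
        if (hits.isEmpty()) {
            System.out.println("No jar in " + libDir + " registers " + HDFS_IMPL);
        } else {
            hits.forEach(jar -> System.out.println("Registers HDFS: " + jar));
        }
    }
}
```

If no jar is reported, the HDFS client is probably not reaching the classpath through the lib directory. If more than one is reported (the listing above contains both a Hadoop 2 and a Hadoop 3 uber jar), it may be worth checking whether the two versions conflict.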

Have you configured the Hadoop conf? This error occurs because the Hadoop HDFS file system was not initialized.
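To check the Hadoop configuration mentioned above, a small probe can report whether the usual environment variables are set and whether the HDFS client class can be loaded from the current classpath. This is a hedged sketch: `HADOOP_CONF_DIR` and `HADOOP_CLASSPATH` are the variables the Flink documentation describes for picking up Hadoop, while the class name `HadoopEnvProbe` is hypothetical. The probe only reflects the JVM it runs in, not the Flink cluster itself.

```java
// Hypothetical diagnostic helper, not part of BitSail or Flink.
public class HadoopEnvProbe {

    /** Returns true if the named class can be found by this class's loader. */
    public static boolean isClassPresent(String className) {
        try {
            // 'false' avoids running static initializers; we only test visibility.
            Class.forName(className, false, HadoopEnvProbe.class.getClassLoader());
            return true;
        } catch (ClassNotFoundException | LinkageError e) {
            return false;
        }
    }

    /** Formats one environment variable for the report. */
    public static String describeEnv(String name, String value) {
        return (value == null || value.isEmpty())
                ? name + " is not set"
                : name + " = " + value;
    }

    public static void main(String[] args) {
        for (String name : new String[] {"HADOOP_CONF_DIR", "HADOOP_CLASSPATH"}) {
            System.out.println(describeEnv(name, System.getenv(name)));
        }
        String hdfsImpl = "org.apache.hadoop.hdfs.DistributedFileSystem";
        System.out.println(hdfsImpl
                + (isClassPresent(hdfsImpl) ? " is on the classpath" : " is NOT on the classpath"));
    }
}
```

Running it with the same classpath the job uses, for example `java -cp "$(hadoop classpath):." HadoopEnvProbe`, gives a quick indication of whether the Hadoop client libraries are visible at all.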

@numbernumberone Hello, did you resolve this issue? If not, we can have a quick call to triage it.

@garyli1019 Not resolved yet. Thank you so much. How can we get in touch? Tencent Meeting?