avoiding the get_reader function argument

creating an EntryReader requires passing a function that takes an offset and returns an instance of Read: https://github.com/rust-compress/rc-zip/blob/69b884f85085c5c99a846703f83ddc48e0d086ac/src/format/archive.rs#L218

It happens because a StoredEntry has no access to the original data source.

While the internal state machine should be able to work without owning the IO source, the public API is nicer if it wraps it. But that would also be dependent on the IO source. Some would support seeking, others would not.

So, ideally, the public API would allow writing this:

let file = File::open(matches.value_of("file").unwrap())?;

let reader = file.read_zip()?;

info(&reader);

for entry in reader.entries() {

println!("Extracting {}", entry.name());

let mut contents = Vec::<u8>::new();

entry.read_to_end(&mut contents)?;

}

But it would also support an IO source that would do HTTP range requests to get the entries.

In lapin, we had the async crate that would give the state machine. Its use was low level, just supporting buffers in and out: https://github.com/sozu-proxy/lapin/blob/0.16.0/async/examples/connection.rs

But the futures based API was wrapping the state machine and the IO source (that could be a TcpStream, or a SSL stream defined with openssl or rustls) inside a tokio transport:

https://github.com/sozu-proxy/lapin/blob/0.16.0/futures/src/transport.rs#L45-L137

It happens because a

StoredEntryhas no access to the original data source.

Right! There's a few constraints we have to deal with here:

- Entries might not be files, they might be symlinks or dirs -

Readonly makes sense for one of those three. - When extracting "symlinks", we actually have to read the compressed content, because it contains the symlink's target (so it's not just reading from the central directory structs in RAM, it's actually doing I/O on the file)

- When extracting files, we might want to check against the CRC-32, but we also might not. The API has to be granular enough to allow skipping it. (To make things more complicated, in the zip format, sometimes the only place the CRC-32 is actually present is after the compressed entry data).

- I want the API to be granular enough to just get the "uncompressed" data of the entry. This'll be useful when creating a zip file from another zip file, with "those entries as-is + some other new entries we'll compress now".

There's other constraints, like pluggable method handlers (bring your own inflate, basically), but this one is not as constraining as the others imho.

In lapin, we had the async crate that would give the state machine. Its use was low level, just supporting buffers in and out:

So is the currently EntryReader API suitable as that low-level API? I've been trying to expose a higher-level API via traits.

yes, I think that the current EntryReader would work as the low level API (that should still be exposed somewhere because there will always be someone with specific requirements).

Would you be ok with a high level API that creates a kind of ArchiveInner type that is stored in an Arc and stored in the ArchiveReader, but also Entry and EntryReader? There would probably be an issue if you want to decompress from multiple readers at the same time though, but maybe you have some ideas about that use case?

There would probably be an issue if you want to decompress from multiple readers at the same time though, but maybe you have some ideas about that use case?

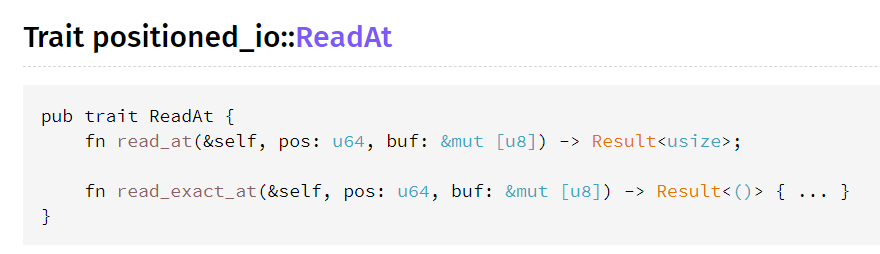

Right - I do want parallel entry decompression to be possible. That's why I like positioned-io's ReadAt interface: its read_at method only needs &self, not &mut self:

As far as the current design goes: Archive, Entry and StoredEntry are plain old data structures by design - you can pass them around, shouldn't need to mutate them (if you're just reading), and the *Reader ones are stateful and do the actual work.

So maybe we go the other way around for the high-level interface: maybe there's a function that takes ownership of a ReadAt, and returns an OpenedArchive, which owns an Archive, and instead of returning a bunch of StoredEntry, returns OwnedEntry - which can then be opened(), etc. - they'd all share an Arc<ReadAt>.

That would work for all my use cases, and it leaves the lower-level interface alone. The annoying bit is.. to relay most getters from OpenedArchive to Archive (but not all). I think implementing Deref in that case is kind of an anti-pattern.

Also, OpenedArchive is maybe not a great name - I'm starting to think the current ArchiveReader should really be called ArchiveOpener or something - and what I just described as OpenedArchive should be the one called ArchiveReader.

I'm going to go ahead and add things like 1) non-DEFLATE methods 2) porting more of archive/zip's tests, 3) a zip writer 4) demos for "updating archives", and maybe we'll see things more clearly. It's a bit hard to design in a vacuum, especially in Rust!

Thinking about this more, I'm not sure we need an Arc? If the higher-level interface takes a ReadAt, which only ever needs &self, it looks like we can get away with just lifetimes (which would be faster).

I'm not sure "being able to have entry readers after dropping the archive reader" is really a requirement.