[tidb-cdc] Channel shutdown invoked

Describe the bug: [tidb-cdc] After running for a period of time, the job reports "Channel shutdown invoked".

Environment:

- Flink version: 1.13.5/1.13.6/1.14.4

- Flink CDC version: 2.2.0/2.2.1

- Database and version: TiDB v5.4/v6.0

To Reproduce

Steps to reproduce the behavior:

- The test data:

mysql> select count(id) from source_table;
+-----------+
| count(id) |
+-----------+
|    761920 |
+-----------+
1 row in set (0.61 sec)

mysql> show table source_table regions;
+-----------+---------------------------------------------------------------------+-------------------------------------------------------------------------------------+-----------+-----------------+---------------------+------------+---------------+------------+----------------------+------------------+
| REGION_ID | START_KEY | END_KEY | LEADER_ID | LEADER_STORE_ID | PEERS | SCATTERING | WRITTEN_BYTES | READ_BYTES | APPROXIMATE_SIZE(MB) | APPROXIMATE_KEYS |
+-----------+---------------------------------------------------------------------+-------------------------------------------------------------------------------------+-----------+-----------------+---------------------+------------+---------------+------------+----------------------+------------------+
| 16637 | t_1640_i_2_015343383538333933ff3836373233363138ff0000000000000000f7 | t_1640_r_57561 | 16640 | 5 | 16638, 16639, 16640 | 0 | 0 | 239604046 | 96 | 917109 |
| 17049 | t_1640_r_57561 | t_1640_r_180751 | 17050 | 1 | 17050, 17051, 17052 | 0 | 0 | 524664294 | 54 | 122881 |
| 16601 | t_1640_r_180751 | t_1640_r_367125 | 16604 | 5 | 16602, 16603, 16604 | 0 | 0 | 851717262 | 76 | 204800 |
| 17109 | t_1640_r_367125 | t_1640_r_608667 | 17111 | 4 | 17110, 17111, 17112 | 0 | 0 | 1101693912 | 94 | 245760 |
| 9181 | t_1640_r_608667 | t_1643_5f698000000000000001016d616c6c31383138ff3631383631363233ff3035310000000000fa | 9183 | 4 | 9182, 9183, 9184 | 0 | 0 | 703376597 | 64 | 164126 |
| 16569 | t_1622_ | t_1640_i_1_01736d617274626f78ff3632373935303835ff3730363133370000fd | 16571 | 4 | 16570, 16571, 16572 | 0 | 561 | 180 | 69 | 768937 |
| 16393 | t_1640_i_1_01736d617274626f78ff3632373935303835ff3730363133370000fd | t_1640_i_2_015343383538333933ff3836373233363138ff0000000000000000f7 | 16394 | 1 | 16394, 16395, 16396 | 0 | 388 | 40 | 51 | 647180 |
+-----------+---------------------------------------------------------------------+-------------------------------------------------------------------------------------+-----------+-----------------+---------------------+------------+---------------+------------+----------------------+------------------+
7 rows in set (0.02 sec)

- The test code:

CREATE TABLE source_table (
  id STRING,
  create_time TIMESTAMP(0),
  update_time TIMESTAMP(0),
  `user_id` BIGINT,
  `version` INT,
  order_no STRING,
  pay_type BIGINT,
  pay_status INT,
  data_source INT,
  amount DOUBLE,
  tenant_id BIGINT,
  -- the key must reference a declared column
  PRIMARY KEY (id) NOT ENFORCED
) WITH (
  'connector' = 'tidb-cdc',
  -- TiKV gRPC request and scan timeouts, in milliseconds
  'tikv.grpc.timeout_in_ms' = '20000',
  'tikv.grpc.scan_timeout_in_ms' = '20000',
  'pd-addresses' = '****',
  -- no initial snapshot; only read changes made after the job starts
  'scan.startup.mode' = 'latest-offset',
  'database-name' = '****',
  'table-name' = '****'
);

CREATE TABLE sink_table_print (
  id STRING,
  create_time TIMESTAMP(0),
  update_time TIMESTAMP(0),
  `user_id` BIGINT,
  `version` INT,
  order_no STRING,
  pay_type BIGINT,
  pay_status INT,
  data_source INT,
  amount DOUBLE,
  tenant_id BIGINT,
  PRIMARY KEY (id) NOT ENFORCED
) WITH (
  'connector' = 'print'
);

-- select exactly the 11 sink columns, in the sink's column order
INSERT INTO sink_table_print
SELECT
  o.id AS id,
  o.create_time,
  o.update_time,
  o.`user_id`,
  o.`version`,
  o.order_no,
  o.pay_type,
  o.pay_status,
  o.data_source,
  o.amount,
  o.tenant_id AS tenant_id
FROM source_table AS o;

- The error (after running for a period of time):

2022-06-06 15:07:08,844 INFO org.tikv.cdc.CDCClient [] - handle resolvedTs: 433717045835857921, regionId: 9181
2022-06-06 15:07:08,920 INFO org.tikv.cdc.CDCClient [] - handle resolvedTs: 433717045862334466, regionId: 17109
2022-06-06 15:07:09,846 INFO org.tikv.cdc.CDCClient [] - handle resolvedTs: 433717046098264068, regionId: 9181
2022-06-06 15:07:09,921 INFO org.tikv.cdc.CDCClient [] - handle resolvedTs: 433717046124478466, regionId: 17109
2022-06-06 15:07:10,921 INFO org.tikv.cdc.CDCClient [] - handle resolvedTs: 433717046360145921, regionId: 9181
2022-06-06 15:07:11,001 INFO org.tikv.cdc.CDCClient [] - handle resolvedTs: 433717046399729667, regionId: 17109
2022-06-06 15:07:11,855 INFO org.tikv.cdc.CDCClient [] - handle resolvedTs: 433717046622289922, regionId: 9181
2022-06-06 15:07:12,117 INFO org.tikv.cdc.CDCClient [] - handle resolvedTs: 433717046399729667, regionId: 17109
2022-06-06 15:07:12,311 ERROR org.tikv.cdc.RegionCDCClient [] - region CDC error: region: 16637, error: org.tikv.shade.io.grpc.StatusRuntimeException: UNAVAILABLE: Keepalive failed. The connection is likely gone
2022-06-06 15:07:12,312 ERROR org.tikv.cdc.RegionCDCClient [] - region CDC error: region: 17049, error: org.tikv.shade.io.grpc.StatusRuntimeException: UNAVAILABLE: Keepalive failed. The connection is likely gone
2022-06-06 15:07:12,313 INFO org.tikv.cdc.CDCClient [] - handle error: org.tikv.shade.io.grpc.StatusRuntimeException: UNAVAILABLE: Keepalive failed. The connection is likely gone, regionId: 16637
2022-06-06 15:07:12,314 INFO org.tikv.cdc.CDCClient [] - remove regions: [16637]
2022-06-06 15:07:12,314 INFO org.tikv.cdc.RegionCDCClient [] - close (region: 16637)
2022-06-06 15:07:12,314 INFO org.tikv.cdc.RegionCDCClient [] - terminated (region: 16637)
2022-06-06 15:07:12,317 INFO org.tikv.cdc.CDCClient [] - remove regions: [17049]
2022-06-06 15:07:12,317 INFO org.tikv.cdc.RegionCDCClient [] - close (region: 17049)
2022-06-06 15:07:12,317 INFO org.tikv.cdc.RegionCDCClient [] - terminated (region: 17049)
2022-06-06 15:07:12,317 INFO org.tikv.cdc.CDCClient [] - add regions: [{Region[16637] ConfVer[5] Version[793] Store[4] KeyRange[t\200\000\000\000\000\000\006h_i\200\000\000\000\000\000\000\002\001SC858393\37786723618\377\000\000\000\000\000\000\000\000\367]:[t\200\000\000\000\000\000\006h_r\200\000\000\000\000\000\340\331]}, {Region[17049] ConfVer[5] Version[785] Store[4] KeyRange[t\200\000\000\000\000\000\006h_r\200\000\000\000\000\000\340\331]:[t\200\000\000\000\000\000\006h_r\200\000\000\000\000\002\302\017]}], timestamp: 433583509931556869
2022-06-06 15:07:12,317 INFO org.tikv.cdc.RegionCDCClient [] - start streaming region: 16637, running: true
2022-06-06 15:07:12,318 INFO org.tikv.cdc.RegionCDCClient [] - start streaming region: 17049, running: true
2022-06-06 15:07:12,318 ERROR org.tikv.cdc.RegionCDCClient [] - region CDC error: region: 16637, error: org.tikv.shade.io.grpc.StatusRuntimeException: UNAVAILABLE: Channel shutdown invoked
2022-06-06 15:07:12,318 INFO org.tikv.cdc.CDCClient [] - keyRange applied
2022-06-06 15:07:12,318 ERROR org.tikv.cdc.RegionCDCClient [] - region CDC error: region: 17049, error: org.tikv.shade.io.grpc.StatusRuntimeException: UNAVAILABLE: Channel shutdown invoked
2022-06-06 15:07:12,341 INFO org.tikv.cdc.CDCClient [] - handle error: org.tikv.shade.io.grpc.StatusRuntimeException: UNAVAILABLE: Keepalive failed. The connection is likely gone, regionId: 17049
2022-06-06 15:07:12,342 INFO org.tikv.cdc.CDCClient [] - remove regions: [17049]
2022-06-06 15:07:12,342 INFO org.tikv.cdc.RegionCDCClient [] - close (region: 17049)
2022-06-06 15:07:12,342 INFO org.tikv.cdc.RegionCDCClient [] - terminated (region: 17049)
2022-06-06 15:07:12,343 INFO org.tikv.cdc.CDCClient [] - remove regions: [16637]
2022-06-06 15:07:12,343 INFO org.tikv.cdc.RegionCDCClient [] - close (region: 16637)

Additional Description

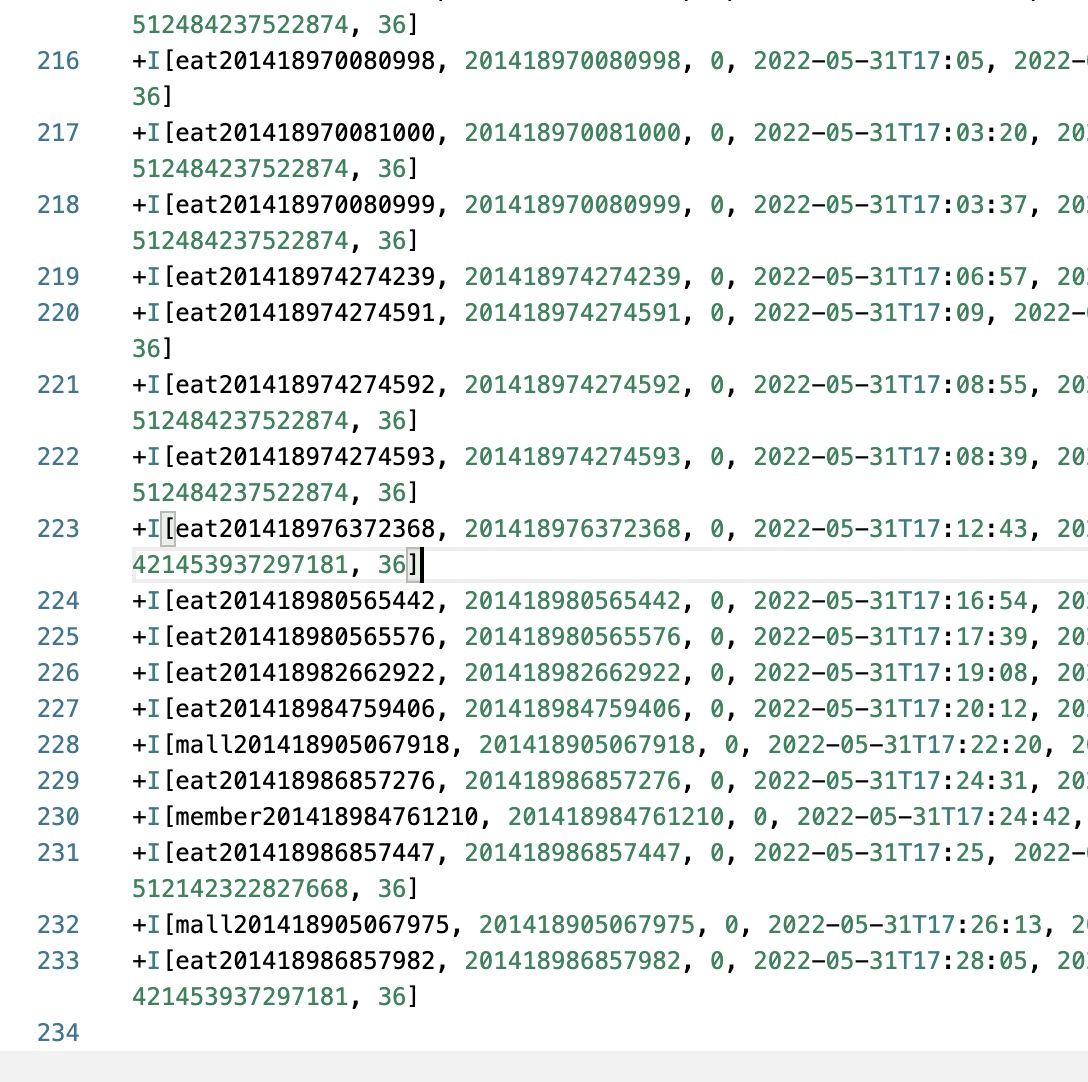

(1) After inserting some data into the TiDB source table (the row count grows from 761920 to 761923), no change events are emitted:

mysql> select count(id) from source_table;
+-----------+
| count(id) |
+-----------+
|    761923 |
+-----------+
1 row in set (0.61 sec)

I tried changing the Flink, Flink CDC, and TiDB versions, but the result did not change.

(2) I have observed that, after a period of time, the regions the connector listens to seem to be inconsistent with the actual regions in TiDB. This is just my guess: I suspect that the TiDB client does not refresh the region topology in time, because when I listen to a table with only one region, it works without any exceptions:

mysql> show table biz_table regions;
+-----------+-----------------------------+-----------------------------+-----------+-----------------+------------------+------------+---------------+------------+----------------------+------------------+
| REGION_ID | START_KEY                   | END_KEY                     | LEADER_ID | LEADER_STORE_ID | PEERS            | SCATTERING | WRITTEN_BYTES | READ_BYTES | APPROXIMATE_SIZE(MB) | APPROXIMATE_KEYS |
+-----------+-----------------------------+-----------------------------+-----------+-----------------+------------------+------------+---------------+------------+----------------------+------------------+
|      6737 | t_1142_5f72800022b5f7868877 | t_1295_5f720000000000000000 |      6738 |               1 | 6738, 6739, 6740 |          0 |             0 |    4224980 |                   72 |           603294 |
+-----------+-----------------------------+-----------------------------+-----------+-----------------+------------------+------------+---------------+------------+----------------------+------------------+
1 row in set (0.01 sec)
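One way to test this guess is to compare the region metadata the client logs when it re-registers regions (the `add regions` line lists `ConfVer`, `Version` and `Store` for regions 16637 and 17049) with what TiDB reports at the same moment. For example, `show table source_table regions` above lists LEADER_STORE_ID 5 for region 16637 and 1 for region 17049, while the `add regions` line shows `Store[4]` for both, although the two snapshots may not have been taken at the same time. The query below is a sketch that assumes TiDB's `INFORMATION_SCHEMA.TIKV_REGION_STATUS` and `INFORMATION_SCHEMA.TIKV_REGION_PEERS` tables and assumes that `Store[...]` in the log is the leader's store ID; `your_database` is a hypothetical placeholder for the masked database name. If the epoch or leader store differs from the logged values right after an `add regions` line, that would be consistent with a stale region cache.

```sql
-- Sketch: current epoch and leader store of every region that holds data of
-- source_table, to compare with the "add regions" log line.
-- 'your_database' is a hypothetical placeholder for the masked database name.
SELECT DISTINCT                    -- a region appears once per table/index it overlaps
  s.REGION_ID,
  s.EPOCH_CONF_VER,                -- compare with ConfVer[...] in the log
  s.EPOCH_VERSION,                 -- compare with Version[...]
  p.STORE_ID AS LEADER_STORE_ID    -- compare with Store[...] (assumed to be the leader store)
FROM INFORMATION_SCHEMA.TIKV_REGION_STATUS AS s
JOIN INFORMATION_SCHEMA.TIKV_REGION_PEERS AS p
  ON p.REGION_ID = s.REGION_ID
 AND p.IS_LEADER = 1
WHERE s.DB_NAME = 'your_database'
  AND s.TABLE_NAME = 'source_table'
ORDER BY s.REGION_ID;
```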

Me too. Did the owner solve it?

Closing this issue because it was created before version 2.3.0 (2022-11-10). Please try the latest version of Flink CDC to see if the issue has been resolved. If the issue is still valid, kindly report it on Apache Jira under project Flink with component tag Flink CDC. Thank you!