eval2.py failing with "You must feed a value for placeholder tensor 'y_mel'"

Hello,

I have made a lot of progress getting this project to run. I can train net1 to 70% accuracy and net2 down to a loss of 0.007.

When I run eval2.py, I get the error described in the title, and the following traceback:

```
  File "eval2.py", line 68, in <module>
    eval(logdir1=logdir_train1, logdir2=logdir_train2)
  File "eval2.py", line 47, in eval
    summ_loss, = predictor(x_mfccs, y_spec)
  File "/home/mark/.local/lib/python2.7/site-packages/tensorpack/predict/base.py", line 39, in __call__
    output = self._do_call(dp)
  File "/home/mark/.local/lib/python2.7/site-packages/tensorpack/predict/base.py", line 131, in _do_call
    return self._callable(*dp)
  File "/home/mark/.local/lib/python2.7/site-packages/tensorflow/python/client/session.py", line 1152, in _generic_run
    return self.run(fetches, feed_dict=feed_dict, **kwargs)
  File "/home/mark/.local/lib/python2.7/site-packages/tensorflow/python/client/session.py", line 877, in run
    run_metadata_ptr)
  File "/home/mark/.local/lib/python2.7/site-packages/tensorflow/python/client/session.py", line 1100, in _run
    feed_dict_tensor, options, run_metadata)
  File "/home/mark/.local/lib/python2.7/site-packages/tensorflow/python/client/session.py", line 1272, in _do_run
    run_metadata)
  File "/home/mark/.local/lib/python2.7/site-packages/tensorflow/python/client/session.py", line 1291, in _do_call
    raise type(e)(node_def, op, message)
tensorflow.python.framework.errors_impl.InvalidArgumentError: You must feed a value for placeholder tensor 'y_mel' with dtype float and shape [?,401,80]
  [[Node: y_mel = Placeholder[dtype=DT_FLOAT, shape=[?,401,80], _device="/job:localhost/replica:0/task:0/device:GPU:0"]()]]
  [[Node: net2/cbhg_linear/highwaynet_7/dense1/Tensordot/Shape/_1681 = _Recv[client_terminated=false, recv_device="/job:localhost/replica:0/task:0/device:CPU:0", send_device="/job:localhost/replica:0/task:0/device:GPU:0", send_device_incarnation=1, tensor_name="edge_2452_net2/cbhg_linear/highwaynet_7/dense1/Tensordot/Shape", tensor_type=DT_INT32, _device="/job:localhost/replica:0/task:0/device:CPU:0"]()]]

Caused by op u'y_mel', defined at:
  File "eval2.py", line 68, in <module>
    eval(logdir1=logdir_train1, logdir2=logdir_train2)
  File "eval2.py", line 44, in eval
    predictor = OfflinePredictor(pred_conf)
  File "/home/mark/.local/lib/python2.7/site-packages/tensorpack/predict/base.py", line 146, in __init__
    input.setup(config.inputs_desc)
  File "/home/mark/.local/lib/python2.7/site-packages/tensorpack/utils/argtools.py", line 181, in wrapper
    return func(*args, **kwargs)
  File "/home/mark/.local/lib/python2.7/site-packages/tensorpack/input_source/input_source_base.py", line 97, in setup
    self._setup(inputs_desc)
  File "/home/mark/.local/lib/python2.7/site-packages/tensorpack/input_source/input_source.py", line 44, in _setup
    self._all_placehdrs = [v.build_placeholder_reuse() for v in inputs]
  File "/home/mark/.local/lib/python2.7/site-packages/tensorpack/graph_builder/model_desc.py", line 67, in build_placeholder_reuse
    return self.build_placeholder()
  File "/home/mark/.local/lib/python2.7/site-packages/tensorpack/graph_builder/model_desc.py", line 51, in build_placeholder
    self.type, shape=self.shape, name=self.name)
  File "/home/mark/.local/lib/python2.7/site-packages/tensorflow/python/ops/array_ops.py", line 1735, in placeholder
    return gen_array_ops.placeholder(dtype=dtype, shape=shape, name=name)
  File "/home/mark/.local/lib/python2.7/site-packages/tensorflow/python/ops/gen_array_ops.py", line 4925, in placeholder
    "Placeholder", dtype=dtype, shape=shape, name=name)
  File "/home/mark/.local/lib/python2.7/site-packages/tensorflow/python/framework/op_def_library.py", line 787, in _apply_op_helper
    op_def=op_def)
  File "/home/mark/.local/lib/python2.7/site-packages/tensorflow/python/util/deprecation.py", line 454, in new_func
    return func(*args, **kwargs)
  File "/home/mark/.local/lib/python2.7/site-packages/tensorflow/python/framework/ops.py", line 3155, in create_op
    op_def=op_def)
  File "/home/mark/.local/lib/python2.7/site-packages/tensorflow/python/framework/ops.py", line 1717, in __init__
    self._traceback = tf_stack.extract_stack()

InvalidArgumentError (see above for traceback): You must feed a value for placeholder tensor 'y_mel' with dtype float and shape [?,401,80]
  [[Node: y_mel = Placeholder[dtype=DT_FLOAT, shape=[?,401,80], _device="/job:localhost/replica:0/task:0/device:GPU:0"]()]]
  [[Node: net2/cbhg_linear/highwaynet_7/dense1/Tensordot/Shape/_1681 = _Recv[client_terminated=false, recv_device="/job:localhost/replica:0/task:0/device:CPU:0", send_device="/job:localhost/replica:0/task:0/device:GPU:0", send_device_incarnation=1, tensor_name="edge_2452_net2/cbhg_linear/highwaynet_7/dense1/Tensordot/Shape", tensor_type=DT_INT32, _device="/job:localhost/replica:0/task:0/device:CPU:0"]()]]
```

When I run convert.py, I get an assertion error:

```
  File "convert.py", line 150, in <module>
    do_convert(args, logdir1=logdir_train1, logdir2=logdir_train2)
  File "convert.py", line 105, in do_convert
    audio, y_audio, ppgs = convert(predictor, df)
  File "convert.py", line 45, in convert
    pred_spec, y_spec, ppgs = predictor(next(df().get_data()))
  File "/home/mark/.local/lib/python2.7/site-packages/tensorpack/predict/base.py", line 39, in __call__
    output = self._do_call(dp)
  File "/home/mark/.local/lib/python2.7/site-packages/tensorpack/predict/base.py", line 119, in _do_call
    "{} != {}".format(len(dp), len(self.input_tensors))
AssertionError: 1 != 3
```

I am also getting a number of warnings when I run train2.py, eval2.py, and convert.py which I'm not sure are related:

[0831 18:26:38 @sessinit.py:90] WRN The following variables are in the graph, but not found in the checkpoint: net2/prenet/dense1/kernel, net2/prenet/dense1/bias, net2/prenet/dense2/kernel, net2/prenet/dense2/bias, net2/cbhg_mel/conv1d_banks/num_1/conv1d/conv1d/kernel, net2/cbhg_mel/conv1d_banks/num_1/normalize/beta, net2/cbhg_mel/conv1d_banks/num_1/normalize/gamma, net2/cbhg_mel/conv1d_banks/num_2/conv1d/conv1d/kernel, net2/cbhg_mel/conv1d_banks/num_2/normalize/beta, net2/cbhg_mel/conv1d_banks/num_2/normalize/gamma, net2/cbhg_mel/conv1d_banks/num_3/conv1d/conv1d/kernel, net2/cbhg_mel/conv1d_banks/num_3/normalize/beta, net2/cbhg_mel/conv1d_banks/num_3/normalize/gamma, net2/cbhg_mel/conv1d_banks/num_4/conv1d/conv1d/kernel, net2/cbhg_mel/conv1d_banks/num_4/normalize/beta, net2/cbhg_mel/conv1d_banks/num_4/normalize/gamma, net2/cbhg_mel/conv1d_banks/num_5/conv1d/conv1d/kernel, net2/cbhg_mel/conv1d_banks/num_5/normalize/beta, net2/cbhg_mel/conv1d_banks/num_5/normalize/gamma, net2/cbhg_mel/conv1d_banks/num_6/conv1d/conv1d/kernel, net2/cbhg_mel/conv1d_banks/num_6/normalize/beta, net2/cbhg_mel/conv1d_banks/num_6/normalize/gamma, net2/cbhg_mel/conv1d_banks/num_7/conv1d/conv1d/kernel, net2/cbhg_mel/conv1d_banks/num_7/normalize/beta, net2/cbhg_mel/conv1d_banks/num_7/normalize/gamma, net2/cbhg_mel/conv1d_banks/num_8/conv1d/conv1d/kernel, net2/cbhg_mel/conv1d_banks/num_8/normalize/beta, net2/cbhg_mel/conv1d_banks/num_8/normalize/gamma, net2/cbhg_mel/conv1d_1/conv1d/kernel, net2/cbhg_mel/normalize/beta, net2/cbhg_mel/normalize/gamma, net2/cbhg_mel/conv1d_2/conv1d/kernel, net2/cbhg_mel/highwaynet_0/dense1/kernel, net2/cbhg_mel/highwaynet_0/dense1/bias, net2/cbhg_mel/highwaynet_0/dense2/kernel, net2/cbhg_mel/highwaynet_0/dense2/bias, net2/cbhg_mel/highwaynet_1/dense1/kernel, net2/cbhg_mel/highwaynet_1/dense1/bias, net2/cbhg_mel/highwaynet_1/dense2/kernel, net2/cbhg_mel/highwaynet_1/dense2/bias, net2/cbhg_mel/highwaynet_2/dense1/kernel, net2/cbhg_mel/highwaynet_2/dense1/bias, 
net2/cbhg_mel/highwaynet_2/dense2/kernel, net2/cbhg_mel/highwaynet_2/dense2/bias, net2/cbhg_mel/highwaynet_3/dense1/kernel, net2/cbhg_mel/highwaynet_3/dense1/bias, net2/cbhg_mel/highwaynet_3/dense2/kernel, net2/cbhg_mel/highwaynet_3/dense2/bias, net2/cbhg_mel/highwaynet_4/dense1/kernel, net2/cbhg_mel/highwaynet_4/dense1/bias, net2/cbhg_mel/highwaynet_4/dense2/kernel, net2/cbhg_mel/highwaynet_4/dense2/bias, net2/cbhg_mel/highwaynet_5/dense1/kernel, net2/cbhg_mel/highwaynet_5/dense1/bias, net2/cbhg_mel/highwaynet_5/dense2/kernel, net2/cbhg_mel/highwaynet_5/dense2/bias, net2/cbhg_mel/highwaynet_6/dense1/kernel, net2/cbhg_mel/highwaynet_6/dense1/bias, net2/cbhg_mel/highwaynet_6/dense2/kernel, net2/cbhg_mel/highwaynet_6/dense2/bias, net2/cbhg_mel/highwaynet_7/dense1/kernel, net2/cbhg_mel/highwaynet_7/dense1/bias, net2/cbhg_mel/highwaynet_7/dense2/kernel, net2/cbhg_mel/highwaynet_7/dense2/bias, net2/cbhg_mel/gru/bidirectional_rnn/fw/gru_cell/gates/kernel, net2/cbhg_mel/gru/bidirectional_rnn/fw/gru_cell/gates/bias, net2/cbhg_mel/gru/bidirectional_rnn/fw/gru_cell/candidate/kernel, net2/cbhg_mel/gru/bidirectional_rnn/fw/gru_cell/candidate/bias, net2/cbhg_mel/gru/bidirectional_rnn/bw/gru_cell/gates/kernel, net2/cbhg_mel/gru/bidirectional_rnn/bw/gru_cell/gates/bias, net2/cbhg_mel/gru/bidirectional_rnn/bw/gru_cell/candidate/kernel, net2/cbhg_mel/gru/bidirectional_rnn/bw/gru_cell/candidate/bias, net2/pred_mel/kernel, net2/pred_mel/bias, net2/dense/kernel, net2/dense/bias, net2/cbhg_linear/conv1d_banks/num_1/conv1d/conv1d/kernel, net2/cbhg_linear/conv1d_banks/num_1/normalize/beta, net2/cbhg_linear/conv1d_banks/num_1/normalize/gamma, net2/cbhg_linear/conv1d_banks/num_2/conv1d/conv1d/kernel, net2/cbhg_linear/conv1d_banks/num_2/normalize/beta, net2/cbhg_linear/conv1d_banks/num_2/normalize/gamma, net2/cbhg_linear/conv1d_banks/num_3/conv1d/conv1d/kernel, net2/cbhg_linear/conv1d_banks/num_3/normalize/beta, net2/cbhg_linear/conv1d_banks/num_3/normalize/gamma, 
net2/cbhg_linear/conv1d_banks/num_4/conv1d/conv1d/kernel, net2/cbhg_linear/conv1d_banks/num_4/normalize/beta, net2/cbhg_linear/conv1d_banks/num_4/normalize/gamma, net2/cbhg_linear/conv1d_banks/num_5/conv1d/conv1d/kernel, net2/cbhg_linear/conv1d_banks/num_5/normalize/beta, net2/cbhg_linear/conv1d_banks/num_5/normalize/gamma, net2/cbhg_linear/conv1d_banks/num_6/conv1d/conv1d/kernel, net2/cbhg_linear/conv1d_banks/num_6/normalize/beta, net2/cbhg_linear/conv1d_banks/num_6/normalize/gamma, net2/cbhg_linear/conv1d_banks/num_7/conv1d/conv1d/kernel, net2/cbhg_linear/conv1d_banks/num_7/normalize/beta, net2/cbhg_linear/conv1d_banks/num_7/normalize/gamma, net2/cbhg_linear/conv1d_banks/num_8/conv1d/conv1d/kernel, net2/cbhg_linear/conv1d_banks/num_8/normalize/beta, net2/cbhg_linear/conv1d_banks/num_8/normalize/gamma, net2/cbhg_linear/conv1d_1/conv1d/kernel, net2/cbhg_linear/normalize/beta, net2/cbhg_linear/normalize/gamma, net2/cbhg_linear/conv1d_2/conv1d/kernel, net2/cbhg_linear/highwaynet_0/dense1/kernel, net2/cbhg_linear/highwaynet_0/dense1/bias, net2/cbhg_linear/highwaynet_0/dense2/kernel, net2/cbhg_linear/highwaynet_0/dense2/bias, net2/cbhg_linear/highwaynet_1/dense1/kernel, net2/cbhg_linear/highwaynet_1/dense1/bias, net2/cbhg_linear/highwaynet_1/dense2/kernel, net2/cbhg_linear/highwaynet_1/dense2/bias, net2/cbhg_linear/highwaynet_2/dense1/kernel, net2/cbhg_linear/highwaynet_2/dense1/bias, net2/cbhg_linear/highwaynet_2/dense2/kernel, net2/cbhg_linear/highwaynet_2/dense2/bias, net2/cbhg_linear/highwaynet_3/dense1/kernel, net2/cbhg_linear/highwaynet_3/dense1/bias, net2/cbhg_linear/highwaynet_3/dense2/kernel, net2/cbhg_linear/highwaynet_3/dense2/bias, net2/cbhg_linear/highwaynet_4/dense1/kernel, net2/cbhg_linear/highwaynet_4/dense1/bias, net2/cbhg_linear/highwaynet_4/dense2/kernel, net2/cbhg_linear/highwaynet_4/dense2/bias, net2/cbhg_linear/highwaynet_5/dense1/kernel, net2/cbhg_linear/highwaynet_5/dense1/bias, net2/cbhg_linear/highwaynet_5/dense2/kernel, 
net2/cbhg_linear/highwaynet_5/dense2/bias, net2/cbhg_linear/highwaynet_6/dense1/kernel, net2/cbhg_linear/highwaynet_6/dense1/bias, net2/cbhg_linear/highwaynet_6/dense2/kernel, net2/cbhg_linear/highwaynet_6/dense2/bias, net2/cbhg_linear/highwaynet_7/dense1/kernel, net2/cbhg_linear/highwaynet_7/dense1/bias, net2/cbhg_linear/highwaynet_7/dense2/kernel, net2/cbhg_linear/highwaynet_7/dense2/bias, net2/cbhg_linear/gru/bidirectional_rnn/fw/gru_cell/gates/kernel, net2/cbhg_linear/gru/bidirectional_rnn/fw/gru_cell/gates/bias, net2/cbhg_linear/gru/bidirectional_rnn/fw/gru_cell/candidate/kernel, net2/cbhg_linear/gru/bidirectional_rnn/fw/gru_cell/candidate/bias, net2/cbhg_linear/gru/bidirectional_rnn/bw/gru_cell/gates/kernel, net2/cbhg_linear/gru/bidirectional_rnn/bw/gru_cell/gates/bias, net2/cbhg_linear/gru/bidirectional_rnn/bw/gru_cell/candidate/kernel, net2/cbhg_linear/gru/bidirectional_rnn/bw/gru_cell/candidate/bias, net2/pred_spec/kernel, net2/pred_spec/bias

[0831 18:26:38 @sessinit.py:90] WRN The following variables are in the checkpoint, but not found in the graph: global_step:0, learning_rate:0

I have seen similar issues, like #23 and #42, that had the same error but when running train2.py, and they say the problem was solved by setting `queue=True`. I'm not sure where to do that. I added it on line 25 of eval2.py (`def eval(logdir1, logdir2, queue=True):`), but I still get the same error. I can't shake the feeling that it's something obvious (like a version mismatch) and I just don't know enough about TensorFlow/tensorpack to fix it.

Any help would be appreciated, as I've spent a few days trying to figure out the nature of this issue. If it helps, I'm running on a single GPU, Ubuntu 18.04, and Python 2.7.

I'm facing the same problem. Were you able to resolve this?

Solved this. In `get_eval_input_names()`, change the return value to `['x_mfccs', 'y_spec', 'y_mel']` and change the inputs passed to the predictor accordingly.
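Why that fix works can be shown with a toy model. The loss that eval2.py fetches apparently depends on the `y_mel` placeholder, so a predictor built with only `x_mfccs` and `y_spec` as input names never feeds it. The sketch below is not tensorpack's API: `ToyPredictor` and `REQUIRED_BY_LOSS` are hypothetical stand-ins that map positional inputs to input names in order and then complain about any required placeholder left unfed.

```python
# Toy model only: ToyPredictor and REQUIRED_BY_LOSS are hypothetical,
# not tensorpack or TensorFlow code.

# Placeholders the (hypothetical) net2 eval loss depends on.
REQUIRED_BY_LOSS = ("x_mfccs", "y_spec", "y_mel")


class ToyPredictor(object):
    def __init__(self, input_names):
        self.input_names = list(input_names)

    def __call__(self, *dp):
        # Positional inputs are matched to input_names in order,
        # so the counts must agree.
        if len(dp) != len(self.input_names):
            raise AssertionError("{} != {}".format(len(dp), len(self.input_names)))
        feed = dict(zip(self.input_names, dp))
        # Any placeholder the loss needs but nobody fed is an error.
        for name in REQUIRED_BY_LOSS:
            if name not in feed:
                raise ValueError(
                    "You must feed a value for placeholder tensor '%s'" % name)
        return sorted(feed)


def get_eval_input_names():
    # The fix from this thread: include 'y_mel' as well.
    return ['x_mfccs', 'y_spec', 'y_mel']


predictor = ToyPredictor(get_eval_input_names())
x_mfccs, y_spec, y_mel = [0.0], [1.0], [2.0]  # dummy data
fed = predictor(x_mfccs, y_spec, y_mel)
```

In eval2.py itself, "change the inputs accordingly" would presumably mean unpacking all three arrays, e.g. `x_mfccs, y_spec, y_mel = next(df().get_data())` followed by `summ_loss, = predictor(x_mfccs, y_spec, y_mel)`, assuming the dataflow yields them in that order.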

Fantastic, that worked! Thanks!

I was also able to fix the assertion error in convert.py by moving the predictor call out of convert() and passing everything to convert() manually:

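```python
# convert() now receives the predictor outputs instead of calling the predictor itself
def convert(predictor, df, pred_spec, y_spec, ppgs):
    ...

# in do_convert(): fetch one datapoint, run the predictor, then convert
pr, y_s, pp = next(df().get_data())
pred_spec, y_spec, ppgs = predictor(pr, y_s, pp)
audio, y_audio, ppgs = convert(predictor, df, pred_spec, y_spec, ppgs)
```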
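The `AssertionError: 1 != 3` comes from handing the predictor the whole datapoint tuple as a single argument. Assuming the predictor's `__call__` takes one positional argument per input (as the `__call__(self, *dp)` path in the traceback above suggests), unpacking with `*` should be an equivalent, smaller change inside `convert()`: `pred_spec, y_spec, ppgs = predictor(*next(df().get_data()))`. The toy below (hypothetical `ToyPredictor` and `get_data`, not tensorpack code) shows the difference:

```python
# Hypothetical stand-in for a predictor whose __call__ takes one positional
# argument per input tensor and checks the count, similar in spirit to the
# check in the traceback above (not tensorpack's real implementation).
class ToyPredictor(object):
    def __init__(self, n_inputs):
        self.n_inputs = n_inputs

    def __call__(self, *dp):
        if len(dp) != self.n_inputs:
            raise AssertionError("{} != {}".format(len(dp), self.n_inputs))
        return dp


def get_data():
    # Hypothetical dataflow: each datapoint is a tuple of three arrays.
    yield ([0.1], [0.2], [0.3])


predictor = ToyPredictor(n_inputs=3)
datapoint = next(get_data())

# Passing the tuple as-is gives the predictor ONE argument -> "1 != 3".
message = None
try:
    predictor(datapoint)
except AssertionError as e:
    message = str(e)

# Unpacking with * passes the three arrays as three separate arguments.
outputs = predictor(*datapoint)
```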

I'm still a little worried about those warnings, but I'm getting output now, though it's still really garbled. I'm using a custom dataset, so I'll re-check it for noise (maybe I just need to train longer).

Hi @CIDFarwin @ashavish, may I ask how many epochs it took to reach 70% accuracy on net1? I'm not on train2 yet, so thanks for the code; it will save me debugging time.

@carlfm01 I ran mine for 260 epochs and it seemed to level off at 70% after 200. I might run it longer, but the README says net1's accuracy is less important.

There's also a pre-trained model in #12 that I couldn't get to work, but you might have more luck.

Thanks. I'm using Azure credit, so I need a reference point to know whether training is going well and avoid spending money on broken hparams. Two more questions: are you using the default params? And I noticed the GPU clock drops; I have to use allow_soft_placement=True to avoid a GPU operation crash. Did you get it working without it? I tried the pre-trained model too, but no luck. Thanks!

Well, I'm just starting with TensorFlow, so I wasn't aware of the allow_soft_placement option (which I'll play around with now). I was able to stop the GPU crashes by editing hparams/default.yaml to lower the batch size to 8 and set num_gpu to 1.

I also lowered the number of epochs to 20 (which takes about an hour on my system), because I noticed that epochs took longer the longer the script ran, while restarting the script brought them back to 2-5 minutes per epoch.

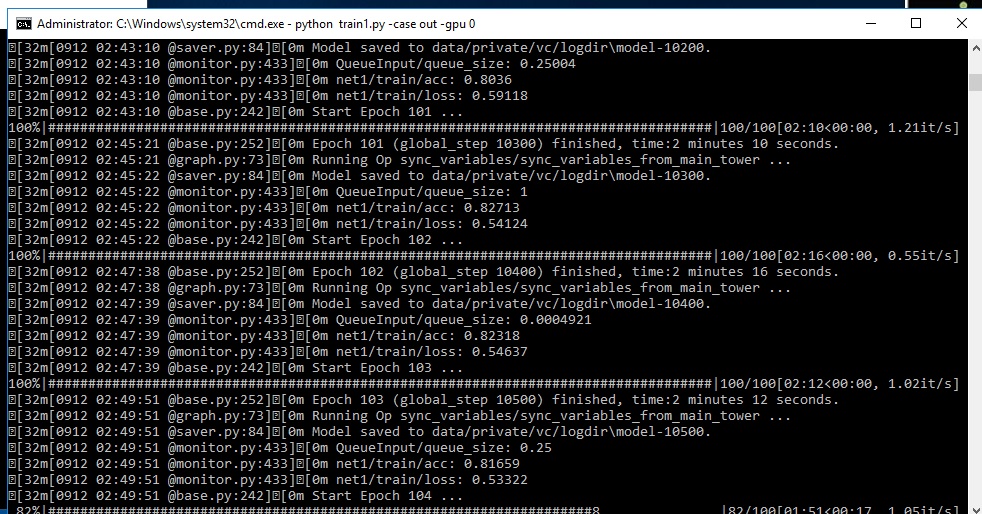

I'm new to TensorFlow too, and I'm confused: training for net1 is taking too long and has been stuck at epoch 108 with an accuracy of 0.8 for about 6 hours. Now I'm starting to think that 0.8 means 80%, but I'm not sure.

Take a look

I don't recommend using allow_soft_placement unless you get a crash like:

```
Cannot assign a device for operation 'tower0/net1/dense/Tensordot/ListDiff': Could not satisfy explicit device specification '/device:GPU:0' because no supported kernel for GPU devices is available. Registered kernels:
```

Sometimes the GPU doesn't support every operation in the session, so soft placement lets the CPU execute the operations the GPU can't.

@CIDFarwin, how was your result quality? I ran train1 for 374 epochs and got to about 86%, and train2 for about 128 epochs, but the output quality is not great: the audio is too quiet to hear clearly (I wonder why), and it sounds robotic and noisy.

@carlfm01 I believe that 0.8 means 80% accuracy, yes. I'm not sure why it would get stuck at epoch 104 if it got that far (when mine gets stuck, it's usually around epoch 14).

@ashavish I've trained my net2 for over 2000 epochs with around 0.005 loss and I have the same problem (too quiet, robotic, and very noisy). Are you using the Arctic/TIMIT datasets or custom ones? (I'm trying custom data for net2.)

@CIDFarwin my net1 was trained on TIMIT, and for net2 I tried two variants: one on Arctic and one on a custom dataset, both with loss around 0.007. The Arctic one was slightly better and audible, but still noisy and robotic. The custom one I can hardly hear.

@ashavish Which hparams are you using? There are about three sets with different hidden_units (128, 256, and 512); in one, dropout_rate is set to 0 while the default sets it to 0.2, and num_highway_blocks differs the same way. It looks like the author trained net1 with the default params and changed a few params for net2 depending on the dataset.

@carlfm01 for Arctic I used the defaults, and for the custom set I didn't change anything other than the data path, so it used the same params. Good point though; I'll try changing them and see if the result improves. But if the network has to be changed for each dataset, tuning it will become a headache.

My training got stuck, so I checked the params and min_db was wrong 😢, so I have to start over. I'll comment as soon as I get something, good or bad.

Terrible: at epoch 209 with 0.006 loss I still can't hear anything. I think I'm using the wrong hparams. Could someone upload the params they're using, please? individualAudio2.zip

I'll retrain with https://github.com/andabi/deep-voice-conversion/blob/3b891b0c88642ad20a06ed355ca83f27a5096682/hparams/default.yaml

@carlfm01 you will get some output (not great, but audible) if you train with the default params for Arctic. It's the custom set that sucks for me.

So glad to see new discussions. I just ran the code with TIMIT: accuracy for Net1 is 0.81505 at epoch 457, and loss for Net2 is 0.0059194 at epoch 320. Also, the converted audio (attached) sounds strange. I tried converting custom audio of a Chinese sentence with the model directly, with terrible results... So I wonder what the data requirements are if we want to train a model for a new person, or even for a new language?

Hi @sailor88128, your audio sounds pretty good. I think you should train a little more and then convert with a different emphasis value; by default it's 0.97, so try 1.0, 1.05, 1.2, ... up to 2. Unfortunately I can't produce audible examples; I think I changed something somewhere that is making net2 go crazy. Maybe it's related to the Python version in the lambda. I'll try to upload my trained model so you can try it.

To support a new language, you need a dataset that contains the speech plus, for each recording, a phoneme file giving the timing of each phoneme (see the TIMIT .phn files), and a dictionary of the phonemes used in those files. Then, for net2, you need a considerable amount of audio; for Chinese you can get it from http://www.openslr.org/18/. Finally, you need to adjust the window length and hop length to fit Chinese phonemes; I don't know whether they are similar to English phonemes.
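To make the phoneme-file requirement concrete: each line of a TIMIT .phn file is `<start_sample> <end_sample> <phoneme>`, with sample indices at 16 kHz. The sketch below shows one way to turn such files into a phoneme dictionary and frame-level labels for net1. The helper names are hypothetical, the 80-sample hop length is an assumption, the transcript is made up, and this is not necessarily how the repository's own data loader does it.

```python
# Sketch: turn a TIMIT-style .phn transcript into per-frame phoneme ids.
# HOP_LENGTH = 80 samples (5 ms at 16 kHz) is an assumption; match it to the
# hop_length in your own hparams, especially if you retune it for a new language.
HOP_LENGTH = 80


def parse_phn(text):
    """Parse lines of '<start_sample> <end_sample> <phoneme>'."""
    segments = []
    for line in text.strip().splitlines():
        start, end, phn = line.split()
        segments.append((int(start), int(end), phn))
    return segments


def build_vocab(all_segments):
    """Map every phoneme seen in the corpus to an integer id (sorted order)."""
    phonemes = sorted({phn for segs in all_segments for _, _, phn in segs})
    return {phn: i for i, phn in enumerate(phonemes)}


def frame_labels(segments, n_frames, hop_length=HOP_LENGTH):
    """Label frame i with the phoneme whose segment contains sample i * hop."""
    labels = [None] * n_frames
    for start, end, phn in segments:
        first = -(-start // hop_length)              # ceil(start / hop)
        last = min(n_frames, -(-end // hop_length))  # exclusive upper bound
        for i in range(first, last):
            labels[i] = phn
    return labels


example = """0 160 h#
160 400 sh
400 480 iy"""
segments = parse_phn(example)
vocab = build_vocab([segments])
labels = frame_labels(segments, n_frames=6)
ids = [vocab[p] for p in labels]
```

Changing the hop length changes how many frames each phoneme spans, which is one reason the window and hop settings matter when moving to a language with different phoneme durations.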

I want to mask an existing TTS. I'll try generating audio from that TTS, building the phoneme file for each wav, and playing with the parameters. I wish someone could implement network pruning to speed up inference; that's beyond my knowledge.

They reach real-time synthesis on a mobile CPU by using weight pruning: https://arxiv.org/pdf/1802.08435v1.pdf
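For reference, the simplest form of weight pruning is one-shot magnitude pruning: zero out the fraction of weights with the smallest absolute values. The numpy sketch below (hypothetical `magnitude_prune` helper) shows only that basic idea; the linked paper applies pruning gradually during training, which this one-shot version does not attempt.

```python
import numpy as np


def magnitude_prune(weights, sparsity):
    """Return (pruned_weights, mask) with roughly `sparsity` of entries zeroed.

    One-shot magnitude pruning: weights whose |w| is at or below the k-th
    smallest magnitude are set to zero (ties at the threshold are pruned too).
    """
    if not 0.0 <= sparsity < 1.0:
        raise ValueError("sparsity must be in [0, 1)")
    k = int(sparsity * weights.size)  # number of weights to remove
    if k == 0:
        return weights.copy(), np.ones(weights.shape, dtype=bool)
    magnitudes = np.abs(weights)
    threshold = np.partition(magnitudes.ravel(), k - 1)[k - 1]
    mask = magnitudes > threshold
    return weights * mask, mask


w = np.array([[0.1, -2.0],
              [0.5, -0.05]])
pruned, mask = magnitude_prune(w, sparsity=0.5)
```

Zeroed weights only reduce inference time if the runtime actually exploits the sparsity (for example with sparse kernels); a dense matrix multiply over zeros costs the same as before.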

https://neoxzai-my.sharepoint.com/:u:/p/accounts/EeTK5jFIUr1OgGEyNKBzFdYBumHrDajU9FDpafkvDGLq0g?e=bBY6MK

Here are my models. Do you want to try them?

Hi @carlfm01, thanks for your detailed discussion. For convert.py, @CIDFarwin's solution above works, and you can check the audio in TensorBoard.

Yes, I've checked the .phn files in TIMIT; it seems difficult to find an open-source Chinese dataset with phoneme labels. I will try...

Yeah, speeding up inference is a problem. Converting 2 seconds of audio takes 20+ seconds :(

I'll try your model later~

Now I'm getting:

```
  File ".\convert.py", line 102, in do_convert
    audio, y_audio, ppgs = convert(predictor, df)
  File ".\convert.py", line 63, in convert
    audio = inv_preemphasis(audio, coeff=hp.Default.preemphasis)
  File "C:\Users\Carlos\Desktop\deep-voice\audio.py", line 248, in inv_preemphasis
    wav = signal.lfilter(b=[1], a=[1, -coeff], x=preem_wav,axis=0)
  File "D:\py\lib\site-packages\scipy\signal\signaltools.py", line 1354, in lfilter
    return sigtools._linear_filter(b, a, x, axis)
ValueError: selected axis is out of range
```

I tried #20 but I'm getting the same weird audio. I'm using Python 3.6.

@carlfm01 I used Python 2.7 directly and there were only a few bugs. Maybe you can build a new conda env and try; building an env is quick.
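For staying on Python 3, one plausible cause of `ValueError: selected axis is out of range` (an assumption, not confirmed in this thread) is Python 2 style code such as `np.array(map(...))` building `audio` before `inv_preemphasis` is called. Under Python 3, `map` returns a lazy iterator, numpy wraps it in a 0-d object array, and `lfilter(..., axis=0)` then has no axis 0 to filter along. Wrapping the `map(...)` in `list(...)` avoids this. The numpy-only sketch below illustrates the pitfall and spells out the recursion that `lfilter(b=[1], a=[1, -coeff])` computes. `fake_spectrogram2wav` is a hypothetical placeholder, and `coeff=0.97` is an assumed default.

```python
import numpy as np


def preemphasis(wav, coeff=0.97):
    """y[n] = x[n] - coeff * x[n-1] along axis 0 (y[0] = x[0])."""
    x = np.asarray(wav, dtype=float)
    y = x.copy()
    y[1:] -= coeff * x[:-1]
    return y


def inv_preemphasis(preem_wav, coeff=0.97):
    """x[n] = y[n] + coeff * x[n-1] along axis 0.

    This is the recursion computed by
    scipy.signal.lfilter(b=[1], a=[1, -coeff], x=preem_wav, axis=0).
    """
    y = np.asarray(preem_wav)
    if y.ndim == 0:
        # lfilter reports this case as "selected axis is out of range".
        raise ValueError("expected an array with an axis 0, got a 0-d array")
    y = y.astype(float)
    x = np.empty_like(y)
    if y.shape[0] == 0:
        return x
    x[0] = y[0]
    for n in range(1, y.shape[0]):
        x[n] = y[n] + coeff * x[n - 1]
    return x


def fake_spectrogram2wav(spec):
    # Hypothetical placeholder for the real spectrogram-to-waveform step.
    return np.asarray(spec, dtype=float)


batch = [[1.0, 0.5, 0.25], [0.0, 1.0, 0.0]]

# Python 3: map() is lazy, so np.array() wraps the map object itself in a
# 0-d object array instead of building a (batch, samples) array.
bad = np.array(map(fake_spectrogram2wav, batch))

# Materialising the map with list() first gives the expected 2-D array.
good = np.array(list(map(fake_spectrogram2wav, batch)))

restored = inv_preemphasis(preemphasis(good[0]))
```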

Hi @CIDFarwin @carlfm01 @ashavish, as I understand it so far, this synthesis method generates the waveform for each phoneme frame by frame, right? If so, is it possible to build a real-time version?

> I just ran the code with TIMIT: accuracy for Net1 is 0.81505 at epoch 457, and loss for Net2 is 0.0059194 at epoch 320. Also, the converted audio (attached) sounds strange.

Hi, your result sounds great. Can you tell me the params you used to train net1 and net2? Thanks!

Hey guys, I successfully ran the code and got some audible results. You can download them and try them out.

audio.zip

I trained net1 with TIMIT and got an accuracy of 0.70 (test accuracy; train accuracy is about 0.85) at around epoch 100, and the loss for Net2 decreased from 0.016 to 0.0058 by epoch 600. In the zip file above, I also uploaded conversion results from different epochs of training (marked by loss value).

@CIDFarwin I used the code that manually passes the values into the convert function. However, I don't understand how to view the result of the conversion. Can you please help?

> Now I'm getting `ValueError: selected axis is out of range` (raised by `signal.lfilter` inside `inv_preemphasis`, called from convert.py line 63)
>
> I tried #20 but I'm getting the same weird audio. I'm using Python 3.6.

How did you solve this?