OpenLane

Include PDK into alternative docker image

Prompt

OpenROAD can include the PDK in the Docker image, which is a nice deployment feature.

Proposal

Two Docker images should be built: one with the PDK files and one without.

Alternatives

Deployment for pre-built OpenLane is trickier without this feature. The user already has a transport mechanism for the Docker image; now they need a second one to download the PDK.

I actually want to do this, not as an "alternative image," but for all images, period. For context, the reason we don't include it is that the final built PDK is relatively large. The downside to not including it is that the PDK also takes forever to build (and is finicky to hook up). It's essentially a tradeoff between pull/clone time and compute time, but honestly, I'm leaning towards just throwing the PDK in the image.

I'll discuss this with the rest of the team.

@donn Thanks!

So... https://github.com/The-OpenROAD-Project/OpenLane/pull/846 was supposed to do it, except the final image is 5 gigabytes and takes like a good 30 minutes to build on an 8-core machine, which means our CI is going to blow a gasket.

Read the PR's writeup for more info.

How often are these images updated? All I need is a published and tagged image every once in a while. As a regular user, I have no need for the latest of everything. So perhaps you could just do a tagged release with the bundled PDK, updated when needed (e.g. before an MPW run or whenever major bugs are found), and then disable the PDK bundling for your internal CI.

Daily. We'll have to take a look. Too many people are typing in "make openlane" and expecting things to work as is, so I'm not hot on the idea of multiple images.

Well, my hope is that no one will have to download the openlane repo and type make openlane at all unless they have a very specific reason. I just want OpenLane to be available and ready to use, like the rest of my EDA tools. Just as a reference, here's a launcher script I'm shipping with Edalize: https://github.com/olofk/edalize/blob/master/scripts/el_docker. This allows me to seamlessly run most of the FOSSi EDA tools containerized (OpenLane included, although it needs a new image reference), which is both a time saver and has also simplified the GitHub Actions setup for running FuseSoC-enabled projects.

@donn bear in mind that you will likely have to support many PDKs in the future. I don't think you want to put them all into a single mega-image that will be enormous, when the end user may only want a small fraction of it.

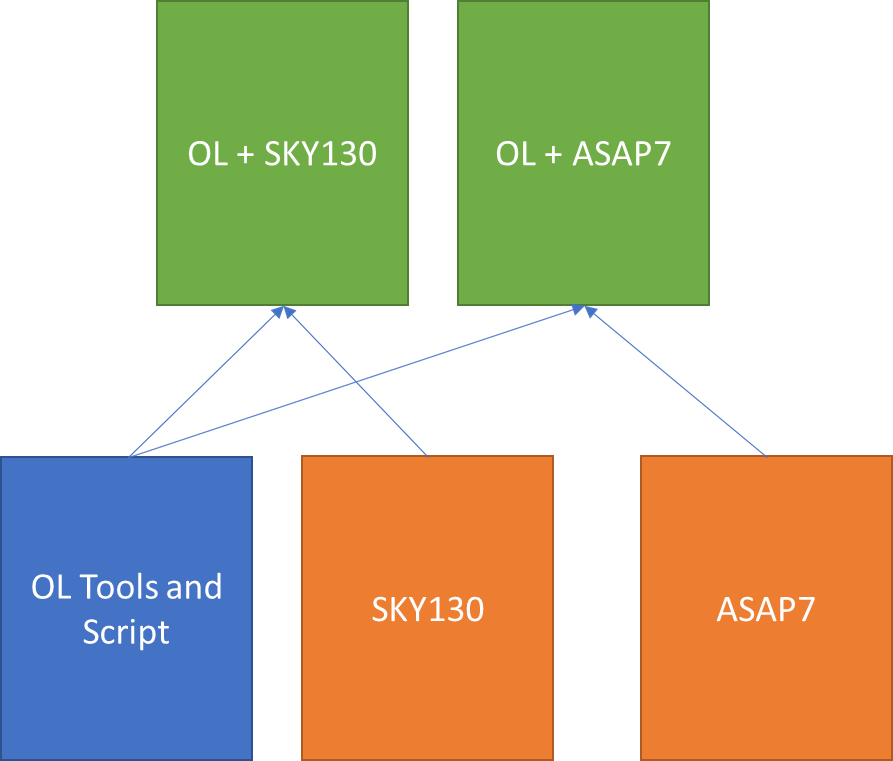

I think of it like:

Orange images (the PDKs) have a low frequency of change and are large. Blue images have a high frequency of change. Green images also change often, but they just combine other images, so they are quick to build and limited in size to one PDK. End users use green images.
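The layering described above could be sketched roughly as follows. This is only an illustration, not how OpenLane builds its images: the function name, the image names in the usage note, and the `/pdk` install path are hypothetical, and it assumes the PDK image keeps the built PDK under a single directory. It generates Dockerfile text for a "green" image by copying the PDK directory out of an "orange" image into a "blue" tools image with a multi-stage `COPY --from`.

```python
def combined_dockerfile(tools_image: str, pdk_image: str, pdk_root: str = "/pdk") -> str:
    """Return Dockerfile text for a 'green' image: one tools image plus one PDK.

    tools_image, pdk_image and pdk_root are placeholders chosen by the caller;
    none of them are real published image names or paths.
    """
    lines = [
        # Stage 1: the large, rarely rebuilt PDK image ("orange").
        f"FROM {pdk_image} AS pdk",
        # Stage 2: the frequently rebuilt tools image ("blue").
        f"FROM {tools_image}",
        # Copy only the PDK directory across, so the combine step is quick.
        f"COPY --from=pdk {pdk_root} {pdk_root}",
        # Point the flow at the bundled PDK.
        f"ENV PDK_ROOT={pdk_root}",
    ]
    return "\n".join(lines) + "\n"
```

For example, `combined_dockerfile("example/openlane-tools:latest", "example/pdk-sky130a:1")` yields a four-line Dockerfile. Because the combine step only copies files, the green image can be rebuilt whenever either input changes without rebuilding the PDK.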

Fair point to consider. A slightly different route to go would be to just have the pre-built PDK available as a blob. That's pretty much the approach I used for my first integration attempt where I just dumped the build results in a github repo so that my image generation script could pick it up.

The build output of @RTimothyEdwards' https://github.com/RTimothyEdwards/open_pdks for SKY130 is being generated and pushed to https://foss-eda-tools.googlesource.com/skywater-pdk/output/; however, that uncovered https://github.com/RTimothyEdwards/open_pdks/issues/195

@mithro Is it possible to access the build results as tarballs instead of a git repo to save a bit of bandwidth?

Looking at https://github.com/RTimothyEdwards/open_pdks/actions/runs/1744248303, I see @rtimothyedwards produces an archive for each library, which is exactly what I'm looking for, but I'm hoping to find a way to get it without needing to go through the GitHub API.

Relatedly, why does the directory structure at https://foss-eda-tools.googlesource.com/skywater-pdk/output/ look so different from when building open_pdks the normal way?

The build artifacts are uploaded to Google Cloud (as specified in the commit message) -> https://console.cloud.google.com/storage/browser/open_pdks/skywater-pdk/artifacts/prod/foss-fpga-tools/skywater-pdk/open_pdks/continuous

See https://console.cloud.google.com/storage/browser/open_pdks/skywater-pdk/artifacts/prod/foss-fpga-tools/skywater-pdk/open_pdks/continuous/1640/20220125-082715 for example.

> Relatedly, why does the directory structure at https://foss-eda-tools.googlesource.com/skywater-pdk/output/ look so different from when building open_pdks the normal way?

I'm not sure what you mean? You can find how the output here is built at https://foss-eda-tools.googlesource.com/skywater-pdk/builder/+/refs/heads/main/open_pdks/build-open_pdks.sh

The output is post processed with https://foss-eda-tools.googlesource.com/skywater-pdk/builder/+/refs/heads/main/open_pdks/post-open_pdks.sh and packaged up with the commands at https://foss-eda-tools.googlesource.com/skywater-pdk/builder/+/refs/heads/main/open_pdks/run.sh#101

I see. I missed the xz archives at GCP before. That answers my first question.

Regarding the different structure, the builds done by (I think) @rtimothyedwards with the GitHub CI action produce six different tarballs (sky130_fd_sc_hd, sky130_fd_sc_hdll, sky130_fd_sc_hs, sky130_fd_sc_hvl, sky130_fd_sc_ls, sky130_fd_sc_ms) with libs.ref and libs.tech in the root directory.

The builds at GCP place libs.ref and libs.tech under usr/local/share/pdk/sky130{A,B}, and libs.ref only has the libraries sky130_fd_io, sky130_fd_pr, sky130_fd_sc_hd and sky130_fd_sc_hvl.
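To compare the two layouts, something like the following could be used. It is a minimal sketch that assumes library directories sit directly under `libs.ref`, as described above; the function name is made up for illustration. It takes archive member names (for example from `tarfile.TarFile.getnames()`) and reports which libraries are present, regardless of whether `libs.ref` is at the archive root or nested under a prefix such as `usr/local/share/pdk/sky130A`.

```python
from pathlib import PurePosixPath


def libraries_in(member_names):
    """Return the sorted library names found directly under any libs.ref directory.

    Works for both layouts discussed here: libs.ref at the archive root, or
    libs.ref nested under an install prefix.
    """
    libs = set()
    for name in member_names:
        parts = PurePosixPath(name).parts
        if "libs.ref" in parts:
            i = parts.index("libs.ref")
            # The path component right after libs.ref is taken as the library.
            if i + 1 < len(parts):
                libs.add(parts[i + 1])
    return sorted(libs)
```

Running it over the member lists of both kinds of archive would make differences such as the missing sky130_fd_sc_hs or sky130_fd_sc_ms libraries easy to spot.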

For the work I'm looking at doing (a PDK manager), the former layout would be preferred, but I would love to have publicly accessible tarballs like the latter produces. I don't know the history here, but could we (do we want to?) take the artifacts built on GitHub and just store those at GCP instead? I'm a bit confused about why there are two separate artifact generators that produce slightly different results. I suspect historical reasons are involved :)

The GitHub Actions CI at https://github.com/RTimothyEdwards/open_pdks is supposed to test that open_pdks is working correctly. GitHub Actions CI has generous but limited resources, so trying to build all the libraries at the same time runs it out of disk space.

The continuous builder at https://foss-eda-tools.googlesource.com/skywater-pdk/builder was designed to test the current combination of skywater-pdk, open_pdks and magic. It also has much bigger and faster machines.

I was initially hoping with the big builder to generate a build asset for each supported tool (see the loop at https://foss-eda-tools.googlesource.com/skywater-pdk/builder/+/refs/heads/main/open_pdks/build-open_pdks.sh#26). However, I discovered that the --enable-XXXX and other flags don't seem to do anything and then we also discovered the non-determinism.

We can also make the builder run all the libraries - currently it just does https://foss-eda-tools.googlesource.com/skywater-pdk/builder/+/refs/heads/main/open_pdks/build-skywater-pdk.sh

@mithro Having the GCP builder machines do this makes sense if they are beefier and we can also store the artifacts with a public URL. My preference would then be to change that build-skywater-pdk.sh script to create individual tarballs like the GitHub CI action does. But maybe just start with adding the missing libraries to the script and produce a single tarball if that isn't too large. Once we have that and a relocatable PDK, I can get to work.

We can build each separately and build a single tarball too.
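As a rough illustration of producing both kinds of archive from one build tree, here is a minimal sketch. It is not the builder's actual packaging step: the function name, the `all.tar.xz` file name, and the assumption that the tree contains `libs.ref/<library>` directories next to `libs.tech` are all hypothetical. Each per-library archive keeps `libs.ref` and `libs.tech` at its root, matching the layout of the GitHub CI tarballs described earlier.

```python
import tarfile
from pathlib import Path


def package_pdk(pdk_dir, out_dir):
    """Write one .tar.xz per library plus a combined archive; return the paths.

    Assumes pdk_dir contains libs.ref/<library>/ directories and libs.tech/.
    """
    pdk_dir, out_dir = Path(pdk_dir), Path(out_dir)
    out_dir.mkdir(parents=True, exist_ok=True)
    created = []

    # One archive per library, each with libs.ref and libs.tech at the root.
    libraries = sorted(p for p in (pdk_dir / "libs.ref").iterdir() if p.is_dir())
    for lib in libraries:
        target = out_dir / f"{lib.name}.tar.xz"
        with tarfile.open(target, "w:xz") as tar:
            tar.add(lib, arcname=f"libs.ref/{lib.name}")
            tar.add(pdk_dir / "libs.tech", arcname="libs.tech")
        created.append(target)

    # A single archive containing every library.
    combined = out_dir / "all.tar.xz"
    with tarfile.open(combined, "w:xz") as tar:
        tar.add(pdk_dir / "libs.ref", arcname="libs.ref")
        tar.add(pdk_dir / "libs.tech", arcname="libs.tech")
    created.append(combined)
    return created
```

Including `libs.tech` in every per-library archive duplicates some data, but it means each tarball can be unpacked on its own.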

Even better. Can I send patches somewhere or should someone on your side modify this?

At the moment there is no easy way to send a pull request to the builder repo. Can you push a copy of the git repository with the changes somewhere and tell me to pull the changes into the foss-eda-tools repository?

I'll try to do that starting with an extended version of build-skywater-pdk.sh that builds all the libs

SGTM - Thanks!

We've ultimately decided against this. Volare has since been released.

@maliberty What is the relationship between ORFS and Volare?

ORFS does not use Volare as far as I'm aware.