GLM-130B


Inference time increases dramatically when starting two servers

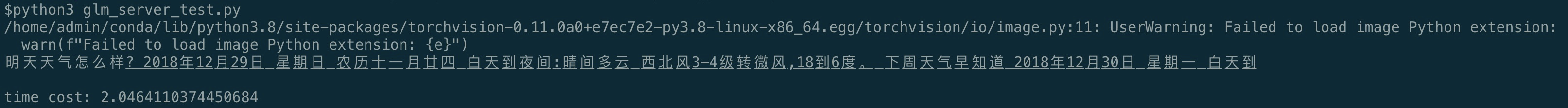

Following the documented steps, I ran ../examples/pytorch/glm/glm_server.sh on 8 × A100 GPUs, and inference takes 2s for one sentence.

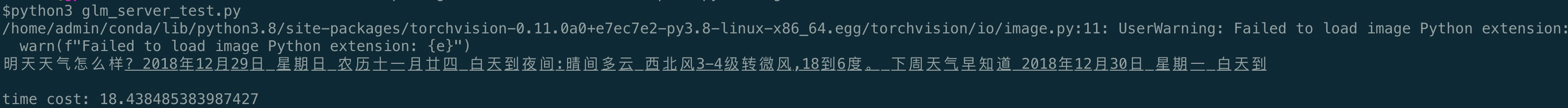

But when I start two servers, A and B, inference time increases to 18s for the same input when I send a request to server A and then a request to server B.
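For anyone trying to reproduce the comparison, a minimal timing sketch is given here. It assumes each server can be called through some Python function; `send_request` and the stand-in `fake_server` are hypothetical placeholders, not part of the glm_server.sh example, and would need to be replaced with whatever client actually talks to the servers.

```python
import time
from typing import Callable, Dict


def time_requests(
    servers: Dict[str, Callable[[str], object]], prompt: str
) -> Dict[str, float]:
    """Send the same prompt to each server in order; return latency in seconds."""
    latencies: Dict[str, float] = {}
    for name, send_request in servers.items():
        start = time.perf_counter()  # monotonic clock suited to short intervals
        send_request(prompt)         # hypothetical client call; blocks until the reply
        latencies[name] = time.perf_counter() - start
    return latencies


if __name__ == "__main__":
    # Stand-in for a real client (assumption): sleeps briefly instead of
    # contacting a server, so the script runs without any GPU setup.
    def fake_server(prompt: str) -> str:
        time.sleep(0.01)
        return prompt

    results = time_requests({"A": fake_server, "B": fake_server}, "hello")
    for name, seconds in results.items():
        print(f"server {name}: {seconds:.3f}s")
```

Running it once with only server A started and once with both A and B started would show whether the slowdown comes from the second request or from the two servers coexisting.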

Are there any other settings I am missing? I look forward to your reply!

Are you running the fp16 model or the int4 one?

The fp16 one.