After updating the model, I get the following error.

Is there an existing issue for this?

- [X] I have searched the existing issues

Current Behavior

RuntimeError: The following operation failed in the TorchScript interpreter.

Traceback of TorchScript (most recent call last):

File "/home/train/.cache/huggingface/modules/transformers_modules/modeling_chatglm.py", line 169, in

Expected Behavior

Is this a bug in the new model?

Steps To Reproduce

Is this a bug in the new model? How can I fix it?

Environment

- OS: CentOS 7.9

- Python: 3.7.16

- Transformers: 4.27.1

- PyTorch: 1.13

- CUDA Support (`python -c "import torch; print(torch.cuda.is_available())"`) :

Anything else?

No response

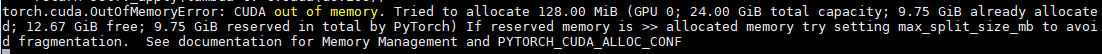

This looks like the GPU running out of memory; it probably has nothing to do with the model.

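I ran into a similar problem: chatglm-6b never manages to start. It reports OOM when less than 10 GB of the 24 GB of GPU memory is in use. I set the environment variable PYTORCH_CUDA_ALLOC_CONF="max_split_size_mb:24", but that didn't help either.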

- OS: Debian 11 (WSL2)

- Python: 3.10.10

- Transformers: 4.27.1

- PyTorch:

- CUDA Support: True
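
For anyone hitting the same OOM, here is a minimal sketch (not a confirmed fix) for checking that the allocator setting is actually visible to the process and how much GPU memory is free before the model loads. The helper names `parse_alloc_conf` and `gpu_memory_report` are made up for illustration. The `max_split_size_mb:24` value is the one quoted above, and the script assumes the variable should be in place before PyTorch makes its first CUDA allocation, so it is set before `import torch`.

```python
import os


def parse_alloc_conf(value: str) -> dict:
    """Split 'option:value,option2:value2' allocator settings into a dict."""
    settings = {}
    for item in value.split(","):
        item = item.strip()
        if not item:
            continue
        key, _, val = item.partition(":")
        settings[key.strip()] = val.strip()
    return settings


# Set the variable before torch touches CUDA; setdefault keeps a value
# that was already exported in the shell.
os.environ.setdefault("PYTORCH_CUDA_ALLOC_CONF", "max_split_size_mb:24")
print("allocator settings:", parse_alloc_conf(os.environ["PYTORCH_CUDA_ALLOC_CONF"]))

try:
    import torch
except ImportError:  # lets the script run on machines without PyTorch
    torch = None


def gpu_memory_report():
    """Return free/total/allocated/reserved memory in GiB, or None without CUDA."""
    if torch is None or not torch.cuda.is_available():
        return None
    free_bytes, total_bytes = torch.cuda.mem_get_info()  # current device
    gib = 1024 ** 3
    return {
        "free_gib": free_bytes / gib,
        "total_gib": total_bytes / gib,
        "allocated_gib": torch.cuda.memory_allocated() / gib,
        "reserved_gib": torch.cuda.memory_reserved() / gib,
    }


print("GPU memory:", gpu_memory_report())
```

If `free_gib` is already far below `total_gib` before the model is loaded, another process is probably holding GPU memory, and the allocator setting alone is unlikely to help.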