texture_nets

Running test.lua and train.lua produces lots of errors.

dataset/train/dummy contains a lot of images (unzipped from train2014.zip), and dataset/val/dummy contains a lot of images (unzipped from val2014.zip).

VGG_ILSVRC_19_layers.caffemodel, VGG_ILSVRC_19_layers_deploy.prototxt, and vgg_normalised.caffemodel are in data/pretrained/.

Can anyone tell me why?

Every file was downloaded as-is and never modified.

After adding require 'cutorch', require 'cudnn', and require 'cunn', test.lua works.

It looks like the script cannot find the images. If you use symlinks, make sure they point to absolute paths. Make sure dataset/train/dummy actually contains images. Try rm gen/* to clear the cache.
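For anyone checking the same things, here is a minimal shell sketch of those two checks. The helper names `count_images` and `broken_links` are hypothetical (they are not part of texture_nets), and the sketch assumes the images end in `.jpg`.

```shell
# Hypothetical helper: print how many .jpg files a split directory contains.
# -L makes find follow symlinks, so a linked "dummy" directory is counted too.
count_images() {
    # $1: a directory such as dataset/train/dummy
    if [ ! -d "$1" ]; then
        # Missing directory or dangling symlink: nothing to count.
        echo 0
        return
    fi
    find -L "$1" -type f -name '*.jpg' | wc -l | tr -d ' '
}

# Hypothetical helper: print every symlink under $1 whose target does not exist.
broken_links() {
    find "$1" -type l ! -exec test -e {} \; -print
}
```

Running `count_images dataset/train/dummy` should print a number greater than zero, and `broken_links dataset` should print nothing if all links resolve.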

Got it! train.lua works! Just like this:

dataset/train dataset/train/dummy dataset/val/

wget http://msvocds.blob.core.windows.net/coco2014/train2014.zip

unzip train2014.zip

mkdir -p dataset/train

mkdir -p dataset/val

ln -s "$(pwd)/train2014" dataset/train/dummy
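The same steps can be wrapped in a small function so that the train and val splits are linked the same way. This is only a sketch: `link_split` is a hypothetical name, and it assumes the archive has already been unzipped into a directory such as train2014 or val2014.

```shell
# Hypothetical helper: expose SRC as ROOT/SPLIT/dummy through an absolute symlink.
# Usage: link_split SRC SPLIT [ROOT]   (ROOT defaults to "dataset")
link_split() {
    src=$1
    split=$2
    root=${3:-dataset}
    if [ ! -d "$src" ]; then
        echo "missing source directory: $src" >&2
        return 1
    fi
    # Resolve SRC to an absolute path so the link works from any directory.
    abs=$(cd "$src" && pwd)
    target="$root/$split/dummy"
    # Refuse to overwrite an existing file, directory, or (possibly broken) link.
    if [ -e "$target" ] || [ -L "$target" ]; then
        echo "already exists: $target" >&2
        return 1
    fi
    mkdir -p "$root/$split"
    ln -s "$abs" "$target"
}
```

For example, `link_split train2014 train` followed by `link_split val2014 val` would produce dataset/train/dummy and dataset/val/dummy.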

I updated the model to a better one: https://github.com/DmitryUlyanov/texture_nets/commit/e303863e869ac20c91d4ea1b08de90c0317eadaf

With the new one you will not need to change test.lua, since the model is converted to CPU mode by default.

thanks.

After training, I got model_1000.t7 ... model_50000.t7, size 2,686,350 bytes.

Command line: th train.lua -data dataset -style_image data/textures/cezanne.jpg

Unfortunately, after running th test.lua -input_image data/readme_pics/karya.jpg -model_t7 data/checkpoints/model_50000.t7, I got a stylized.jpg that is completely black.

Try lowering the learning rate. Make sure you don't get nan or inf values during training.
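One way to check for this after the fact is to save the training output to a file and search it. The sketch below assumes the output was captured in a log file (for example by piping the training command through `tee train.log`) and that non-finite values are printed as the words nan or inf. The helper name `find_nonfinite` and the log file name are hypothetical.

```shell
# Hypothetical helper: print the numbered log lines that mention nan or inf.
# -w matches whole words only, so "info" is ignored while "-inf" still matches.
# The exit status is non-zero when no such line is found.
find_nonfinite() {
    # $1: path to a saved training log
    grep -inwE 'nan|inf' "$1"
}
```

If `find_nonfinite train.log` prints anything, the loss diverged at that point and a lower learning rate is worth trying.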

OK, thanks!

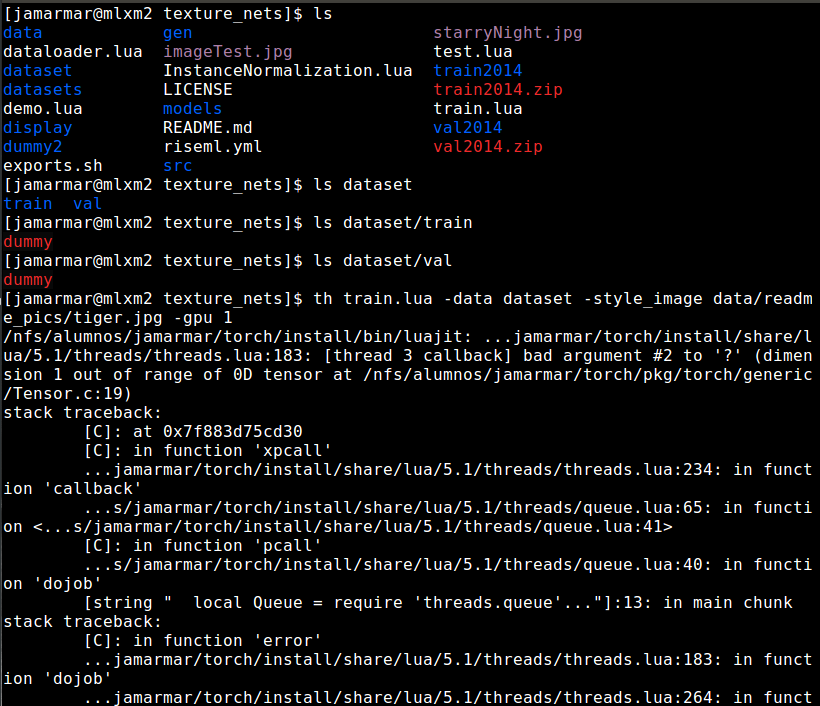

Hi Dmitry, I wanted to use your Texture Nets for a bachelor project. However, I get the same first error as @lvjadey when I try to train (bad argument #2 to '?').

My command is: th train.lua -data dataset -style_image starryNight.jpg -gpu 1, where starryNight.jpg is 768x576. I also tried -style_image data/readme_pics/tiger.jpg.

I had dataset/train/dummy/(lots of images) and dataset/train/val/(lots of images) and it didn't work. I tried ln -s "$(pwd)/val2014" dataset/val/dummy and ln -s "$(pwd)/train2014" dataset/train/dummy, but that didn't work either. Now I have it like this:

![image](https://user-images.githubusercontent.com/32073609/40376675-d25ecd8c-5de6-11e8-977d-c53141ac4b31.png)

It would be nice if you could help me. Thanks.

Hi, these paths are correct:

dataset/train/dummy/ dataset/val/dummy/

Maybe the problem is in the symlinks and how torch treats them. Can you please try placing several images in these folders as files, not as symlinks, and see if it generates the same error?

(In reply to Jeavy's comment of Tue, May 22, 2018 at 7:38 PM.)

-- Best, Dmitry

Hi, I've placed several images from train2014 and val2014 zips in dataset/train/dummy and dataset/val/dummy and it didn't work (same error).

I followed your steps, but I don't know why it's failing.

Thank you.

What extension do the images have?

They all have the .jpg extension.

Don't know how to help, sorry...

Hi, I just downloaded and installed everything again and it's working fine. Maybe I had an obsolete version. Thank you, this is nice work!