[Question][jdbc splitpk] splitpk supports time type?

Search before asking

-

[X] I had searched in the issues and found no similar question.

-

[X] I had googled my question but i didn't get any help.

-

[X] I had read the documentation: ChunJun doc but it didn't help me.

Description

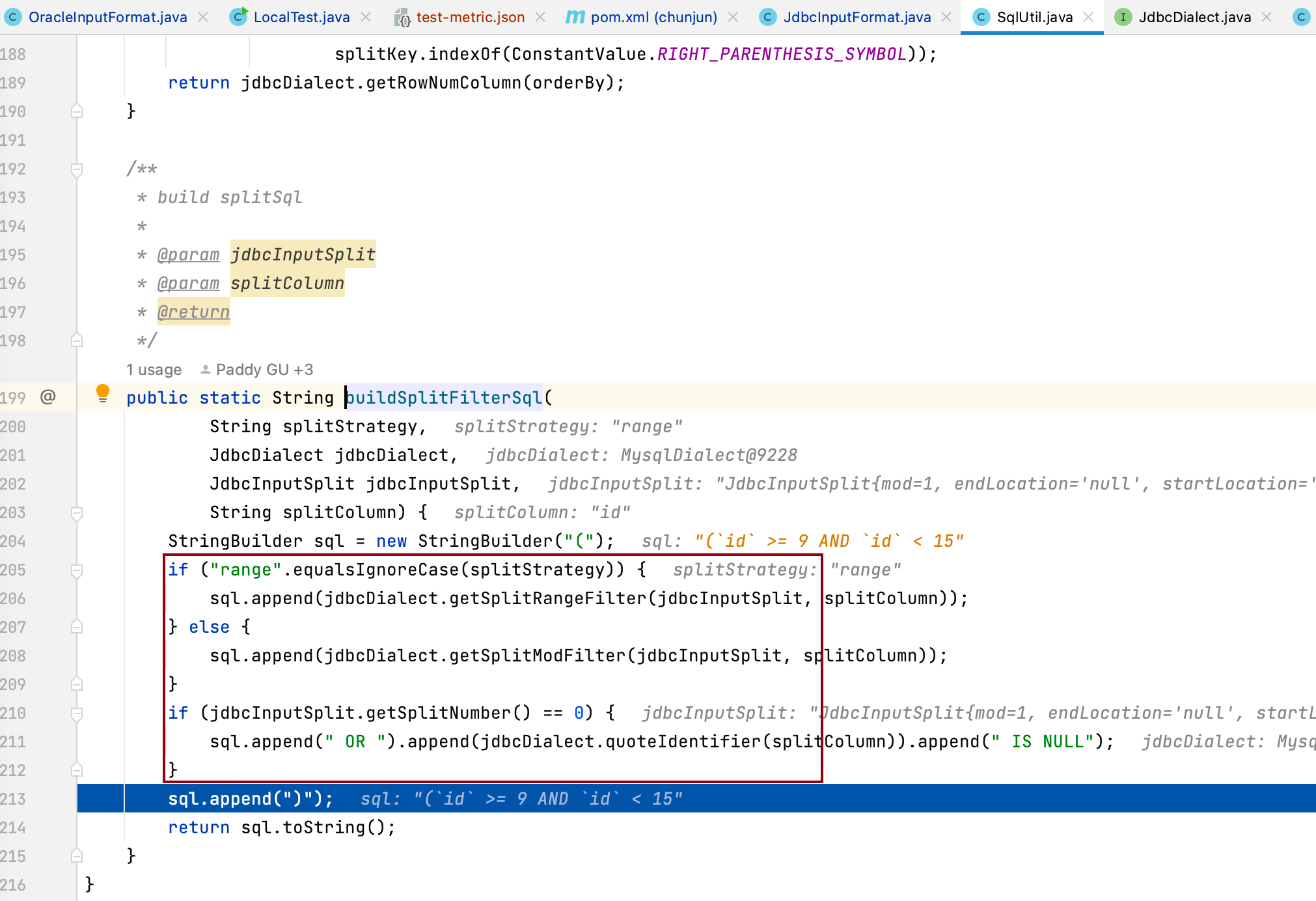

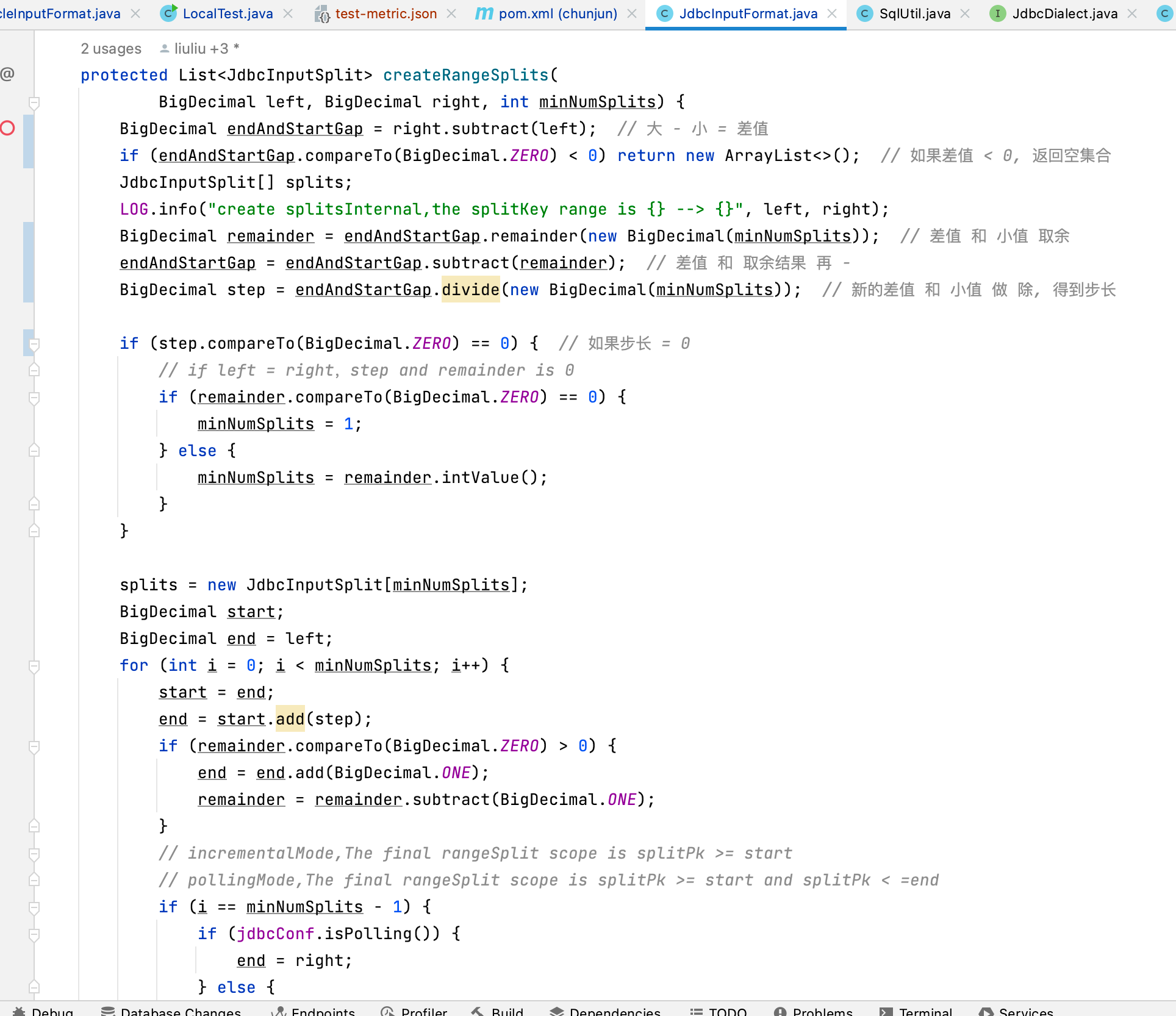

I want to use a field of type time as splitpk, which requires the user to enter a field of type time, and then chunjun starts to calculate the start and end values of the time, and also supports the user to input the start and end values by himself, and then calculates the step size to get Each parallelism sql, but after reading the relevant code, I found that it is not easy to expand, can you explain how to modify it?

Code of Conduct

- [X] I agree to follow this project's Code of Conduct

In spark, the user can automatically input a set of intervals for a period of time for parallel division:

val conf = new SparkConf()

.setMaster("local[*]")

.setAppName(this.getClass.getName)

val spark = SparkSession.builder().config(conf).getOrCreate()

val prop = new Properties()

prop.put("user", "root")

prop.put("password", "123456")

prop.put("driver", "com.mysql.jdbc.Driver")

val predicates =

Array(

"2015-09-16" -> "2015-09-30",

"2015-10-01" -> "2015-10-15",

"2015-10-16" -> "2015-10-31",

"2015-11-01" -> "2015-11-14",

"2015-11-15" -> "2015-11-30",

"2015-12-01" -> "2015-12-15"

).map {

case (start, end) =>

s"cast(time as date) >= date '$start' " + s"AND cast(time as date) <= date '$end'"

}

val readDf = spark.read.jdbc("jdbc:mysql://***.***.***.**:3306/data_base?useUnicode=true&characterEncoding=utf-8&useSSL=false",

"salej", predicates)

println("读取数据库的并行度是: "+readDf.rdd.partitions.size)

i think it's a good idea.