Improve texture creation performance

Unity is unfortunately lacking an API that allows us to create textures from a worker thread. So for models with large textures, the texture upload happening on the main thread causes significant frame rate drops.

@mwilsnd noticed in profiling that the Texture2D constructor is actually a significant hotspot, even before we upload data. Presumably it is synchronizing with the render thread and causing a stall. So simply pooling textures, rather than creating and destroying them, may be a big win.

He also pointed out that this Unity-provided example: https://github.com/Unity-Technologies/NativeRenderingPlugin shows how to register for a callback on Unity's render thread and create a render-API-specific texture there. Then, on the main thread, we use Unity's Texture2D.CreateExternalTexture to create a Unity texture from the RHI texture without stalling the main thread.
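To make the pooling idea concrete, here is a minimal sketch of the bookkeeping only. It is written in C++ for illustration; in Unity the pooled object would be a Texture2D on the C# side. `TextureDesc`, `TexturePool`, and the factory callback are hypothetical names, not anything in the existing code, and the sketch assumes a texture can only be reused when width, height, and format all match.

```cpp
#include <cstddef>
#include <functional>
#include <map>
#include <tuple>
#include <utility>
#include <vector>

// Hypothetical key: a pooled texture is only reusable for an identical
// size and pixel format.
struct TextureDesc {
  int width;
  int height;
  int format;

  bool operator<(const TextureDesc& other) const {
    return std::tie(width, height, format) <
           std::tie(other.width, other.height, other.format);
  }
};

// Hypothetical pool. Texture is whatever handle the engine hands back.
template <typename Texture>
class TexturePool {
public:
  using Factory = std::function<Texture(const TextureDesc&)>;

  explicit TexturePool(Factory factory) : _factory(std::move(factory)) {}

  // Reuse a free texture with a matching description if one exists;
  // otherwise pay the (expensive) creation cost via the factory.
  Texture acquire(const TextureDesc& desc) {
    auto it = _free.find(desc);
    if (it != _free.end() && !it->second.empty()) {
      Texture texture = std::move(it->second.back());
      it->second.pop_back();
      return texture;
    }
    ++_created;
    return _factory(desc);
  }

  // Return a texture to the pool instead of destroying it.
  void release(const TextureDesc& desc, Texture texture) {
    _free[desc].push_back(std::move(texture));
  }

  // Number of textures actually constructed so far.
  std::size_t createdCount() const { return _created; }

private:
  Factory _factory;
  std::map<TextureDesc, std::vector<Texture>> _free;
  std::size_t _created = 0;
};
```

A real pool would also need a cap on how many free textures it keeps, since tiles can use many different sizes and an unbounded pool would hold GPU memory indefinitely.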

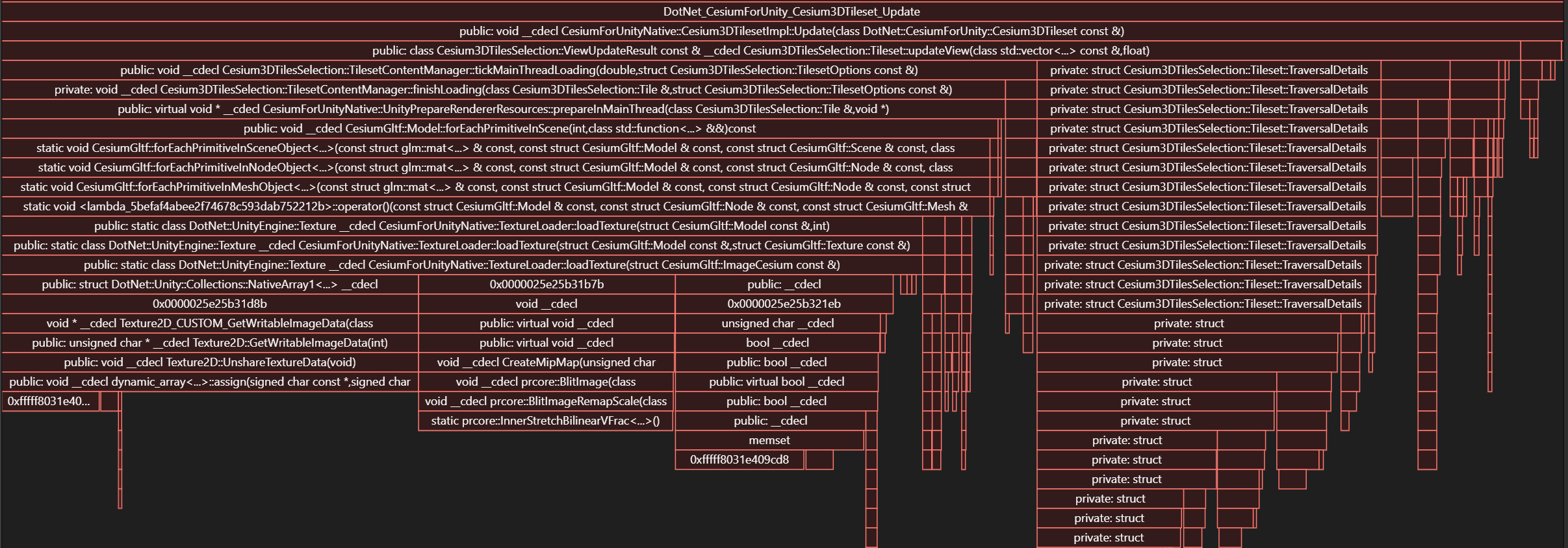

This capture of the tileset update method shows where the cost is:

Zoomed in a bit on loadTexture:

GetRawTextureData is the worst offender. You can get a callback from the render thread in a native plugin here. Pushing mipmap generation onto a worker thread, or even into a compute shader, might not be a bad idea either.

Thanks @mwilsnd, that's really helpful! Are you using the Visual Studio profiler with the Unity symbol server configured there? Do you have any other tips for profiling in Unity?

Yeah, I just attach Visual Studio, making sure the debugger is set to native code only, and use the built-in profiling tools. For debugging on the C# side, Unity's built-in profiler is better.

For the above, I just do a debug break and enable CPU profiling, resume and run around for 10 or 20 seconds, then break again to get the profiler results.

For native profiling, you may want to profile debug builds as well as release builds, or at least builds with less aggressive inlining and without things like LTO, since full release builds can obscure the call stack.

@mwilsnd, I looked into the NativeRenderingPlugin and, when it comes to textures, it looks like it only shows how to modify the pixel buffer on the render thread for each render API. This could help remove the cost of GetRawTextureData. However, it would be nice to create the texture and upload it to the GPU on the render thread as well. Do you know if code for that is also available?

Thank you.

@joseph-kaile The plugin doesn't include texture creation, so that's something you'll need to extend the plugin to support. For OpenGL/ES it should be a fairly straightforward pair of glGenTextures + glTexImage2D calls. D3D11 and Metal are also relatively direct. I've yet to use D3D12, so I can't weigh in on that one, but Vulkan would be a bit more involved because you have to contend with allocating device memory. You can see some of this in CreateVulkanBuffer, though it doesn't seem like Unity exposes its own GPU allocator, so you may want to try using VMA since you'll be allocating lots of small and medium-size textures.

Thanks for all the info @mwilsnd!

@joseph-kaile while I do think it will be useful to go down this path, I also think it's likely to get pretty complicated, so we should start with some simpler improvements. Based on the flame graphs above, I think there is some low-hanging fruit here that might cut our texture load time by roughly a quarter to a third (or more!) without very much work.

The first thing is to move the mipmap generation entirely off the main thread. We're already doing this in Unreal, and it should be straightforward in Unity, too. CesiumImage has a mechanism to store mipmaps, and we can compute them in a worker thread by using STB. And then we just need to tell Unity not to generate them itself, and instead include them in the data we send through to Unity in loadTexture.
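As a rough illustration of the worker-thread mipmap step, here is a minimal, self-contained sketch. It assumes tightly packed RGBA8 pixels and uses a plain 2x2 box filter in place of STB's resize, so it is not the actual implementation. `MipChain`, `generateMipChain`, and `generateMipChainAsync` are hypothetical names, and `std::async` stands in for whatever worker-thread mechanism the loader already uses.

```cpp
#include <algorithm>
#include <cstddef>
#include <cstdint>
#include <future>
#include <utility>
#include <vector>

// Hypothetical container: every mip level packed back to back as RGBA8,
// ready to hand to the engine in a single buffer.
struct MipChain {
  std::vector<std::uint8_t> pixels;
  std::vector<std::size_t> levelOffsets; // byte offset of each level
  std::vector<int> levelWidths;
  std::vector<int> levelHeights;
};

// Builds the full chain down to 1x1 by averaging 2x2 blocks of the
// previous level. For odd dimensions the last row/column is dropped,
// which is good enough for a sketch.
MipChain generateMipChain(const std::vector<std::uint8_t>& base,
                          int width, int height) {
  MipChain chain;
  chain.pixels = base;
  chain.levelOffsets.push_back(0);
  chain.levelWidths.push_back(width);
  chain.levelHeights.push_back(height);

  int srcW = width;
  int srcH = height;
  std::size_t srcOffset = 0;
  while (srcW > 1 || srcH > 1) {
    const int dstW = std::max(1, srcW / 2);
    const int dstH = std::max(1, srcH / 2);
    const std::size_t dstOffset = chain.pixels.size();
    chain.pixels.resize(dstOffset + std::size_t(dstW) * dstH * 4);

    for (int y = 0; y < dstH; ++y) {
      const int y0 = std::min(2 * y, srcH - 1);
      const int y1 = std::min(2 * y + 1, srcH - 1);
      for (int x = 0; x < dstW; ++x) {
        const int x0 = std::min(2 * x, srcW - 1);
        const int x1 = std::min(2 * x + 1, srcW - 1);
        for (int c = 0; c < 4; ++c) {
          // Read one channel of one source texel from the previous level.
          auto at = [&](int px, int py) -> unsigned {
            return chain.pixels[srcOffset +
                                (std::size_t(py) * srcW + px) * 4 + c];
          };
          const unsigned sum =
              at(x0, y0) + at(x1, y0) + at(x0, y1) + at(x1, y1);
          chain.pixels[dstOffset + (std::size_t(y) * dstW + x) * 4 + c] =
              static_cast<std::uint8_t>((sum + 2) / 4); // rounded average
        }
      }
    }

    chain.levelOffsets.push_back(dstOffset);
    chain.levelWidths.push_back(dstW);
    chain.levelHeights.push_back(dstH);
    srcOffset = dstOffset;
    srcW = dstW;
    srcH = dstH;
  }
  return chain;
}

// Runs the generation off the calling thread; the main thread only needs
// to collect the finished buffer.
std::future<MipChain> generateMipChainAsync(std::vector<std::uint8_t> base,
                                            int width, int height) {
  return std::async(std::launch::async,
                    [base = std::move(base), width, height]() {
                      return generateMipChain(base, width, height);
                    });
}
```

With the levels packed into one buffer like this, the main thread's remaining work is to create the texture with mipmaps enabled and copy the buffer across, rather than asking Unity to generate the mips itself.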

Next, let's try switching from Texture2D::GetRawTextureData to Texture2D::LoadRawTextureData. The docs say GetRawTextureData should be faster, because it avoids a copy. But in reality it just means we're doing the copy ourselves (see the std::memcpy in TextureLoader::loadTexture). And meanwhile that time-consuming UnshareTextureData followed by assign in the flame graph makes me think GetRawTextureData isn't the simple "give me a pointer to the buffer" that it would appear. So I think there's at least a chance that LoadRawTextureData could be faster. Let's profile it and see.

After that, we can circle back and consider implementing low-level, rendering-API-specific texture loading.

Hello! I noticed this is a major bottleneck in the performance of my app. Is there a plan to make this improvement anytime soon? I am also curious whether you have considered adding an object pool for the tileset GameObjects, to avoid the overhead of instantiating tiles at runtime.

Thanks so much!

We moved mipmap generation off the main thread in #278. Other things above haven't been explored yet.