langflow

Local Server setup with Docker

Hey LangFlow Dev Team!

I have a feature request that I think would be awesome for the platform. Can you add a local server with Docker, so users can use chained prompts on local networks? It's like an OpenAI API server proxy, and would let us experiment with more complex flows for personal servers and systems.

Hey @cogscides!

I'm not sure I understand it.

Do you mean one person using another person's flow, or just deploying with an external IP on a local network?

The external IP (like streamlit) is something I'm exploring today, actually.

The second one. I mean the ability to deploy via Docker and then use it as an API.

Sharing chains would also be very nice. It's very similar to what n8n does.

We have implemented exporting and importing in the app, and also importing an exported JSON file into a LangChain object.

We are still fleshing it out, especially the documentation, but it is there, so you can share and import others' flows.

About the n8n approach, that is something we might consider at some point. Now, serving it on a local network is definitely a use case we aim to support.

@cogscides I got langflow working w/ Docker and nginx locally (VirtualBox Ubuntu VM hosted on win11; containers are running on Ubuntu)

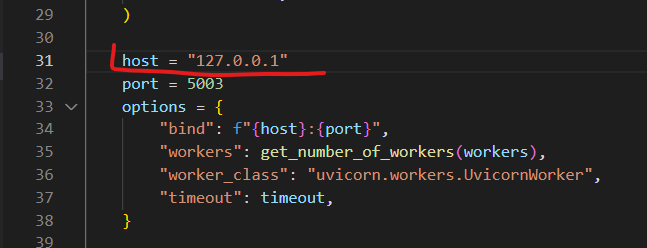

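It requires a code change in langflow/backend/langflow_backend/__main__.py: the server must bind to 0.0.0.0 rather than the loopback IP, because a process listening on 127.0.0.1 inside a container is not reachable from outside that container.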

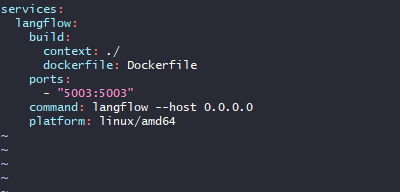
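To illustrate the bind-address point above, here is a minimal sketch using only the Python standard library. It is not the actual change made in `__main__.py` (that is only shown in the screenshot); `bind_scope` and `open_listener` are hypothetical helper names introduced for illustration.

```python
import ipaddress
import socket


def bind_scope(host: str) -> str:
    """Describe who can reach a server bound to the given IP address."""
    addr = ipaddress.ip_address(host)
    if addr.is_loopback:
        # e.g. 127.0.0.1: only processes in the same network namespace
        # (for Docker, the same container) can connect.
        return "loopback only"
    if addr.is_unspecified:
        # e.g. 0.0.0.0: listens on every interface, including the one
        # Docker attaches to the container.
        return "all interfaces"
    return "single interface"


def open_listener(host: str, port: int = 0) -> socket.socket:
    """Open a TCP listening socket on host:port (port 0 picks a free port)."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    sock.bind((host, port))
    sock.listen()
    return sock
```

For example, `bind_scope("127.0.0.1")` returns `"loopback only"`, which is why a backend bound that way cannot be reached by nginx running in a different container.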

docker-compose.yml

    version: '3'
    services:
      langflow-nginx:
        image: nginx:1.19-alpine
        container_name: langflow-nginx
        volumes:
          - ./nginx.conf:/etc/nginx/conf.d/default.conf
        ports:
          - "80"
        depends_on:
          - langflow-backend
      langflow-backend:
        build:
          context: ./
          dockerfile: ./python.Dockerfile
        container_name: langflow-backend
        image: langflow-backend-python
        ports:
          - "5003:5003"
        entrypoint:
          - bash
        tty: true
        stdin_open: true

nginx.conf

    upstream langflow-backend-server {
        server langflow-backend:5003;
    }

    server {
        listen 80;
        server_name langflow.dev.x.com; # change this to your hostname
        root /langflow;

        location / {
            proxy_pass http://langflow-backend-server;
            proxy_set_header Host $host;
            proxy_set_header X-Real-IP $remote_addr;
        }
    }

Ensure langflow is installed w/ pip:

    sudo docker exec -it langflow-backend sh -c "cd /langflow && python3 -m venv env && . env/bin/activate && pip3 install langflow"

Then start the backend with the following command:

    sudo docker exec -it langflow-backend sh -c ". env/bin/activate && langflow"

Then hit the domain langflow.dev.x.com with your browser.
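If the hostname is not yet in DNS or your hosts file, the setup can still be checked by sending a request to the nginx container's address with the expected `Host` header. The sketch below uses only the standard library; `fetch_status` is a hypothetical helper, and the address in the usage note is a placeholder, not something from this thread.

```python
import urllib.request


def fetch_status(base_url: str, host_header: str, timeout: float = 5.0) -> int:
    """GET base_url with an explicit Host header and return the HTTP status.

    System proxy settings are ignored so that the request goes straight
    to the local server.
    """
    opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
    request = urllib.request.Request(base_url, headers={"Host": host_header})
    with opener.open(request, timeout=timeout) as response:
        return response.status
```

A call such as `fetch_status("http://<nginx-address>/", "langflow.dev.x.com")` should return 200 once nginx can reach the backend; a 4xx or 5xx response raises `urllib.error.HTTPError` instead, which usually means nginx is up but the upstream is not.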

@ogabrielluiz I'll make a PR for langflow/backend/langflow_backend/__main__.py if you're interested.

disregard my post 😳 just saw this update:

Hey, Mike! Thanks for the post.

We've made some changes to how we deploy it but we are still not sure if it is the best approach.

Docker Compose now runs a script that adds a proxy when running locally for development. When running through langflow, the frontend just calls fetch, which calls the API directly.

Git cloned and then ran docker compose up in the langflow directory.

Seemed to spin up fine, but I'm getting errors when I go to localhost:3000.

Keep getting proxy error.

Running on Linux Mint, but that shouldn't cause any issues since it's in Docker.

See screenshots.

This is a proxy problem. You might want to check the proxy attribute in the package.json.

It seems the backend is not running or the proxy is pointing to the wrong url.
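A quick way to see where the dev server is forwarding requests is to read the `proxy` field directly. This is a small sketch, assuming the frontend's package.json uses a top-level `proxy` key as mentioned above; `read_proxy_target` is a hypothetical helper and the file path is up to you.

```python
import json
from pathlib import Path
from typing import Optional


def read_proxy_target(package_json: Path) -> Optional[str]:
    """Return the top-level "proxy" value from a package.json, or None if unset."""
    data = json.loads(package_json.read_text(encoding="utf-8"))
    return data.get("proxy")
```

If this returns something like `http://localhost:7860`, check whether that address is actually reachable from wherever the frontend process runs.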

I have the same issue running the Docker containers with WSL. The default, http://localhost:7860, gets the same proxy error:

    langflow-frontend-1 | Proxy error: Could not proxy request /favicon.ico from 127.0.0.1:3000 to http://localhost:7860.
    langflow-frontend-1 | See https://nodejs.org/api/errors.html#errors_common_system_errors for more information (ECONNREFUSED).

but I can access that URL and http://localhost:7860/all in the browser:

    langflow-backend-1 | INFO: 172.19.0.1:47462 - "GET /all HTTP/1.1" 200 OK
    langflow-backend-1 | INFO: 172.19.0.1:47462 - "GET /favicon.ico HTTP/1.1" 404 Not Found

I do not see any connection attempts in the backend logs while opening the frontend.
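One possible explanation, offered as a guess rather than a confirmed diagnosis: inside the frontend container, `localhost` refers to the frontend container itself, so a proxy target of `http://localhost:7860` would be refused even though the same URL works from the host browser. A small TCP probe run from inside the frontend container can confirm or rule this out. `can_connect` is a hypothetical helper, and the service name `backend` in the usage note is an assumption based on the `langflow-backend-1` container name.

```python
import socket


def can_connect(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers connection refused, timeouts, and name resolution failures.
        return False
```

Running `can_connect("localhost", 7860)` and `can_connect("backend", 7860)` inside the frontend container shows which name actually reaches the backend; if only the second succeeds, the proxy target needs to use the service name instead of `localhost`.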

System: Windows 11 build 22621, WSL Ubuntu 20.04, Docker Engine v20.10.23
