Add annotations to defaultBackend deployment

What this PR does / why we need it:

This PR adds annotations to the defaultBackend deployment. Annotations are useful when integrating the ingress resources with other tools, like datree or kube-score.

Types of changes

- [ ] Bug fix (non-breaking change which fixes an issue)

- [x] New feature (non-breaking change which adds functionality)

- [ ] Breaking change (fix or feature that would cause existing functionality to change)

- [ ] Documentation only

Which issue/s this PR fixes

fixes #8679

How Has This Been Tested?

Tested locally with kind as follows.

Clone repo and checkout new branch.

git clone https://github.com/nu12/ingress-nginx.git

cd ingress-nginx

git checkout feature/backend-annotation

Change charts/ingress-nginx/values.yaml in line 757 to enabled: true and line 892 to include an annotation. My values.yaml is set as follows (some lines were omitted):

    cat charts/ingress-nginx/values.yaml
    ...
    defaultBackend:
      enabled: true
      ...
      annotations:
        my-custom/annotation: here
    ...

Check that the manifest rendered by helm includes the annotation as intended: my-custom/annotation: here should appear among the annotations of the defaultBackend deployment.

helm template ingress-nginx charts/ingress-nginx/ -n ingress

---

# Source: ingress-nginx/templates/controller-serviceaccount.yaml

apiVersion: v1

kind: ServiceAccount

metadata:

labels:

helm.sh/chart: ingress-nginx-4.1.3

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: "1.2.1"

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/managed-by: Helm

app.kubernetes.io/component: controller

name: ingress-nginx

namespace: ingress

automountServiceAccountToken: true

---

# Source: ingress-nginx/templates/default-backend-serviceaccount.yaml

apiVersion: v1

kind: ServiceAccount

metadata:

labels:

helm.sh/chart: ingress-nginx-4.1.3

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: "1.2.1"

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/managed-by: Helm

app.kubernetes.io/component: default-backend

name: ingress-nginx-backend

namespace: ingress

automountServiceAccountToken: true

---

# Source: ingress-nginx/templates/controller-configmap.yaml

apiVersion: v1

kind: ConfigMap

metadata:

labels:

helm.sh/chart: ingress-nginx-4.1.3

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: "1.2.1"

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/managed-by: Helm

app.kubernetes.io/component: controller

name: ingress-nginx-controller

namespace: ingress

data:

allow-snippet-annotations: "true"

---

# Source: ingress-nginx/templates/clusterrole.yaml

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRole

metadata:

labels:

helm.sh/chart: ingress-nginx-4.1.3

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: "1.2.1"

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/managed-by: Helm

name: ingress-nginx

rules:

- apiGroups:

- ""

resources:

- configmaps

- endpoints

- nodes

- pods

- secrets

- namespaces

verbs:

- list

- watch

- apiGroups:

- ""

resources:

- nodes

verbs:

- get

- apiGroups:

- ""

resources:

- services

verbs:

- get

- list

- watch

- apiGroups:

- networking.k8s.io

resources:

- ingresses

verbs:

- get

- list

- watch

- apiGroups:

- ""

resources:

- events

verbs:

- create

- patch

- apiGroups:

- networking.k8s.io

resources:

- ingresses/status

verbs:

- update

- apiGroups:

- networking.k8s.io

resources:

- ingressclasses

verbs:

- get

- list

- watch

---

# Source: ingress-nginx/templates/clusterrolebinding.yaml

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

labels:

helm.sh/chart: ingress-nginx-4.1.3

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: "1.2.1"

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/managed-by: Helm

name: ingress-nginx

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: ingress-nginx

subjects:

- kind: ServiceAccount

name: ingress-nginx

namespace: "ingress"

---

# Source: ingress-nginx/templates/controller-role.yaml

apiVersion: rbac.authorization.k8s.io/v1

kind: Role

metadata:

labels:

helm.sh/chart: ingress-nginx-4.1.3

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: "1.2.1"

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/managed-by: Helm

app.kubernetes.io/component: controller

name: ingress-nginx

namespace: ingress

rules:

- apiGroups:

- ""

resources:

- namespaces

verbs:

- get

- apiGroups:

- ""

resources:

- configmaps

- pods

- secrets

- endpoints

verbs:

- get

- list

- watch

- apiGroups:

- ""

resources:

- services

verbs:

- get

- list

- watch

- apiGroups:

- networking.k8s.io

resources:

- ingresses

verbs:

- get

- list

- watch

- apiGroups:

- networking.k8s.io

resources:

- ingresses/status

verbs:

- update

- apiGroups:

- networking.k8s.io

resources:

- ingressclasses

verbs:

- get

- list

- watch

- apiGroups:

- ""

resources:

- configmaps

resourceNames:

- ingress-controller-leader

verbs:

- get

- update

- apiGroups:

- ""

resources:

- configmaps

verbs:

- create

- apiGroups:

- ""

resources:

- events

verbs:

- create

- patch

---

# Source: ingress-nginx/templates/controller-rolebinding.yaml

apiVersion: rbac.authorization.k8s.io/v1

kind: RoleBinding

metadata:

labels:

helm.sh/chart: ingress-nginx-4.1.3

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: "1.2.1"

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/managed-by: Helm

app.kubernetes.io/component: controller

name: ingress-nginx

namespace: ingress

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: Role

name: ingress-nginx

subjects:

- kind: ServiceAccount

name: ingress-nginx

namespace: "ingress"

---

# Source: ingress-nginx/templates/controller-service-webhook.yaml

apiVersion: v1

kind: Service

metadata:

labels:

helm.sh/chart: ingress-nginx-4.1.3

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: "1.2.1"

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/managed-by: Helm

app.kubernetes.io/component: controller

name: ingress-nginx-controller-admission

namespace: ingress

spec:

type: ClusterIP

ports:

- name: https-webhook

port: 443

targetPort: webhook

appProtocol: https

selector:

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/component: controller

---

# Source: ingress-nginx/templates/controller-service.yaml

apiVersion: v1

kind: Service

metadata:

annotations:

labels:

helm.sh/chart: ingress-nginx-4.1.3

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: "1.2.1"

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/managed-by: Helm

app.kubernetes.io/component: controller

name: ingress-nginx-controller

namespace: ingress

spec:

type: LoadBalancer

ipFamilyPolicy: SingleStack

ipFamilies:

- IPv4

ports:

- name: http

port: 80

protocol: TCP

targetPort: http

appProtocol: http

- name: https

port: 443

protocol: TCP

targetPort: https

appProtocol: https

selector:

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/component: controller

---

# Source: ingress-nginx/templates/default-backend-service.yaml

apiVersion: v1

kind: Service

metadata:

labels:

helm.sh/chart: ingress-nginx-4.1.3

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: "1.2.1"

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/managed-by: Helm

app.kubernetes.io/component: default-backend

name: ingress-nginx-defaultbackend

namespace: ingress

spec:

type: ClusterIP

ports:

- name: http

port: 80

protocol: TCP

targetPort: http

appProtocol: http

selector:

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/component: default-backend

---

# Source: ingress-nginx/templates/controller-deployment.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

labels:

helm.sh/chart: ingress-nginx-4.1.3

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: "1.2.1"

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/managed-by: Helm

app.kubernetes.io/component: controller

name: ingress-nginx-controller

namespace: ingress

spec:

selector:

matchLabels:

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/component: controller

replicas: 1

revisionHistoryLimit: 10

minReadySeconds: 0

template:

metadata:

labels:

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/component: controller

spec:

dnsPolicy: ClusterFirst

containers:

- name: controller

image: "k8s.gcr.io/ingress-nginx/controller:v1.2.1@sha256:5516d103a9c2ecc4f026efbd4b40662ce22dc1f824fb129ed121460aaa5c47f8"

imagePullPolicy: IfNotPresent

lifecycle:

preStop:

exec:

command:

- /wait-shutdown

args:

- /nginx-ingress-controller

- --default-backend-service=$(POD_NAMESPACE)/ingress-nginx-defaultbackend

- --publish-service=$(POD_NAMESPACE)/ingress-nginx-controller

- --election-id=ingress-controller-leader

- --controller-class=k8s.io/ingress-nginx

- --ingress-class=nginx

- --configmap=$(POD_NAMESPACE)/ingress-nginx-controller

- --validating-webhook=:8443

- --validating-webhook-certificate=/usr/local/certificates/cert

- --validating-webhook-key=/usr/local/certificates/key

securityContext:

capabilities:

drop:

- ALL

add:

- NET_BIND_SERVICE

runAsUser: 101

allowPrivilegeEscalation: true

env:

- name: POD_NAME

valueFrom:

fieldRef:

fieldPath: metadata.name

- name: POD_NAMESPACE

valueFrom:

fieldRef:

fieldPath: metadata.namespace

- name: LD_PRELOAD

value: /usr/local/lib/libmimalloc.so

livenessProbe:

failureThreshold: 5

httpGet:

path: /healthz

port: 10254

scheme: HTTP

initialDelaySeconds: 10

periodSeconds: 10

successThreshold: 1

timeoutSeconds: 1

readinessProbe:

failureThreshold: 3

httpGet:

path: /healthz

port: 10254

scheme: HTTP

initialDelaySeconds: 10

periodSeconds: 10

successThreshold: 1

timeoutSeconds: 1

ports:

- name: http

containerPort: 80

protocol: TCP

- name: https

containerPort: 443

protocol: TCP

- name: webhook

containerPort: 8443

protocol: TCP

volumeMounts:

- name: webhook-cert

mountPath: /usr/local/certificates/

readOnly: true

resources:

requests:

cpu: 100m

memory: 90Mi

nodeSelector:

kubernetes.io/os: linux

serviceAccountName: ingress-nginx

terminationGracePeriodSeconds: 300

volumes:

- name: webhook-cert

secret:

secretName: ingress-nginx-admission

---

    # Source: ingress-nginx/templates/default-backend-deployment.yaml
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      labels:
        helm.sh/chart: ingress-nginx-4.1.3
        app.kubernetes.io/name: ingress-nginx
        app.kubernetes.io/instance: ingress-nginx
        app.kubernetes.io/version: "1.2.1"
        app.kubernetes.io/part-of: ingress-nginx
        app.kubernetes.io/managed-by: Helm
        app.kubernetes.io/component: default-backend
      name: ingress-nginx-defaultbackend
      namespace: ingress
      annotations:
        my-custom/annotation: here
    spec:
      selector:
        matchLabels:
          app.kubernetes.io/name: ingress-nginx
          app.kubernetes.io/instance: ingress-nginx
          app.kubernetes.io/component: default-backend
      replicas: 1
      revisionHistoryLimit: 10
      template:
        metadata:
          labels:
            app.kubernetes.io/name: ingress-nginx
            app.kubernetes.io/instance: ingress-nginx
            app.kubernetes.io/component: default-backend
        spec:
          containers:
            - name: ingress-nginx-default-backend
              image: "k8s.gcr.io/defaultbackend-amd64:1.5"
              imagePullPolicy: IfNotPresent
              securityContext:
                capabilities:
                  drop:
                  - ALL
                runAsUser: 65534
                runAsNonRoot: true
                allowPrivilegeEscalation: false
                readOnlyRootFilesystem: true
              livenessProbe:
                httpGet:
                  path: /healthz
                  port: 8080
                  scheme: HTTP
                initialDelaySeconds: 30
                periodSeconds: 10
                timeoutSeconds: 5
                successThreshold: 1
                failureThreshold: 3
              readinessProbe:
                httpGet:
                  path: /healthz
                  port: 8080
                  scheme: HTTP
                initialDelaySeconds: 0
                periodSeconds: 5
                timeoutSeconds: 5
                successThreshold: 1
                failureThreshold: 6
              ports:
                - name: http
                  containerPort: 8080
                  protocol: TCP
          nodeSelector:
            kubernetes.io/os: linux
          serviceAccountName: ingress-nginx-backend
          terminationGracePeriodSeconds: 60

---

# Source: ingress-nginx/templates/controller-ingressclass.yaml

# We don't support namespaced ingressClass yet

# So a ClusterRole and a ClusterRoleBinding is required

apiVersion: networking.k8s.io/v1

kind: IngressClass

metadata:

labels:

helm.sh/chart: ingress-nginx-4.1.3

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: "1.2.1"

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/managed-by: Helm

app.kubernetes.io/component: controller

name: nginx

spec:

controller: k8s.io/ingress-nginx

---

# Source: ingress-nginx/templates/admission-webhooks/validating-webhook.yaml

# before changing this value, check the required kubernetes version

# https://kubernetes.io/docs/reference/access-authn-authz/extensible-admission-controllers/#prerequisites

apiVersion: admissionregistration.k8s.io/v1

kind: ValidatingWebhookConfiguration

metadata:

labels:

helm.sh/chart: ingress-nginx-4.1.3

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: "1.2.1"

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/managed-by: Helm

app.kubernetes.io/component: admission-webhook

name: ingress-nginx-admission

webhooks:

- name: validate.nginx.ingress.kubernetes.io

matchPolicy: Equivalent

rules:

- apiGroups:

- networking.k8s.io

apiVersions:

- v1

operations:

- CREATE

- UPDATE

resources:

- ingresses

failurePolicy: Fail

sideEffects: None

admissionReviewVersions:

- v1

clientConfig:

service:

namespace: "ingress"

name: ingress-nginx-controller-admission

path: /networking/v1/ingresses

---

# Source: ingress-nginx/templates/admission-webhooks/job-patch/serviceaccount.yaml

apiVersion: v1

kind: ServiceAccount

metadata:

name: ingress-nginx-admission

namespace: ingress

annotations:

"helm.sh/hook": pre-install,pre-upgrade,post-install,post-upgrade

"helm.sh/hook-delete-policy": before-hook-creation,hook-succeeded

labels:

helm.sh/chart: ingress-nginx-4.1.3

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: "1.2.1"

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/managed-by: Helm

app.kubernetes.io/component: admission-webhook

---

# Source: ingress-nginx/templates/admission-webhooks/job-patch/clusterrole.yaml

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRole

metadata:

name: ingress-nginx-admission

annotations:

"helm.sh/hook": pre-install,pre-upgrade,post-install,post-upgrade

"helm.sh/hook-delete-policy": before-hook-creation,hook-succeeded

labels:

helm.sh/chart: ingress-nginx-4.1.3

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: "1.2.1"

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/managed-by: Helm

app.kubernetes.io/component: admission-webhook

rules:

- apiGroups:

- admissionregistration.k8s.io

resources:

- validatingwebhookconfigurations

verbs:

- get

- update

---

# Source: ingress-nginx/templates/admission-webhooks/job-patch/clusterrolebinding.yaml

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

name: ingress-nginx-admission

annotations:

"helm.sh/hook": pre-install,pre-upgrade,post-install,post-upgrade

"helm.sh/hook-delete-policy": before-hook-creation,hook-succeeded

labels:

helm.sh/chart: ingress-nginx-4.1.3

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: "1.2.1"

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/managed-by: Helm

app.kubernetes.io/component: admission-webhook

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: ingress-nginx-admission

subjects:

- kind: ServiceAccount

name: ingress-nginx-admission

namespace: "ingress"

---

# Source: ingress-nginx/templates/admission-webhooks/job-patch/role.yaml

apiVersion: rbac.authorization.k8s.io/v1

kind: Role

metadata:

name: ingress-nginx-admission

namespace: ingress

annotations:

"helm.sh/hook": pre-install,pre-upgrade,post-install,post-upgrade

"helm.sh/hook-delete-policy": before-hook-creation,hook-succeeded

labels:

helm.sh/chart: ingress-nginx-4.1.3

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: "1.2.1"

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/managed-by: Helm

app.kubernetes.io/component: admission-webhook

rules:

- apiGroups:

- ""

resources:

- secrets

verbs:

- get

- create

---

# Source: ingress-nginx/templates/admission-webhooks/job-patch/rolebinding.yaml

apiVersion: rbac.authorization.k8s.io/v1

kind: RoleBinding

metadata:

name: ingress-nginx-admission

namespace: ingress

annotations:

"helm.sh/hook": pre-install,pre-upgrade,post-install,post-upgrade

"helm.sh/hook-delete-policy": before-hook-creation,hook-succeeded

labels:

helm.sh/chart: ingress-nginx-4.1.3

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: "1.2.1"

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/managed-by: Helm

app.kubernetes.io/component: admission-webhook

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: Role

name: ingress-nginx-admission

subjects:

- kind: ServiceAccount

name: ingress-nginx-admission

namespace: "ingress"

---

# Source: ingress-nginx/templates/admission-webhooks/job-patch/job-createSecret.yaml

apiVersion: batch/v1

kind: Job

metadata:

name: ingress-nginx-admission-create

namespace: ingress

annotations:

"helm.sh/hook": pre-install,pre-upgrade

"helm.sh/hook-delete-policy": before-hook-creation,hook-succeeded

labels:

helm.sh/chart: ingress-nginx-4.1.3

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: "1.2.1"

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/managed-by: Helm

app.kubernetes.io/component: admission-webhook

spec:

template:

metadata:

name: ingress-nginx-admission-create

labels:

helm.sh/chart: ingress-nginx-4.1.3

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: "1.2.1"

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/managed-by: Helm

app.kubernetes.io/component: admission-webhook

spec:

containers:

- name: create

image: "k8s.gcr.io/ingress-nginx/kube-webhook-certgen:v1.1.1@sha256:64d8c73dca984af206adf9d6d7e46aa550362b1d7a01f3a0a91b20cc67868660"

imagePullPolicy: IfNotPresent

args:

- create

- --host=ingress-nginx-controller-admission,ingress-nginx-controller-admission.$(POD_NAMESPACE).svc

- --namespace=$(POD_NAMESPACE)

- --secret-name=ingress-nginx-admission

env:

- name: POD_NAMESPACE

valueFrom:

fieldRef:

fieldPath: metadata.namespace

securityContext:

allowPrivilegeEscalation: false

restartPolicy: OnFailure

serviceAccountName: ingress-nginx-admission

nodeSelector:

kubernetes.io/os: linux

securityContext:

runAsNonRoot: true

runAsUser: 2000

fsGroup: 2000

---

# Source: ingress-nginx/templates/admission-webhooks/job-patch/job-patchWebhook.yaml

apiVersion: batch/v1

kind: Job

metadata:

name: ingress-nginx-admission-patch

namespace: ingress

annotations:

"helm.sh/hook": post-install,post-upgrade

"helm.sh/hook-delete-policy": before-hook-creation,hook-succeeded

labels:

helm.sh/chart: ingress-nginx-4.1.3

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: "1.2.1"

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/managed-by: Helm

app.kubernetes.io/component: admission-webhook

spec:

template:

metadata:

name: ingress-nginx-admission-patch

labels:

helm.sh/chart: ingress-nginx-4.1.3

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: "1.2.1"

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/managed-by: Helm

app.kubernetes.io/component: admission-webhook

spec:

containers:

- name: patch

image: "k8s.gcr.io/ingress-nginx/kube-webhook-certgen:v1.1.1@sha256:64d8c73dca984af206adf9d6d7e46aa550362b1d7a01f3a0a91b20cc67868660"

imagePullPolicy: IfNotPresent

args:

- patch

- --webhook-name=ingress-nginx-admission

- --namespace=$(POD_NAMESPACE)

- --patch-mutating=false

- --secret-name=ingress-nginx-admission

- --patch-failure-policy=Fail

env:

- name: POD_NAMESPACE

valueFrom:

fieldRef:

fieldPath: metadata.namespace

securityContext:

allowPrivilegeEscalation: false

restartPolicy: OnFailure

serviceAccountName: ingress-nginx-admission

nodeSelector:

kubernetes.io/os: linux

securityContext:

runAsNonRoot: true

runAsUser: 2000

fsGroup: 2000
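The manual inspection of the rendered manifest could also be scripted. The following is only a minimal sketch, not part of this PR: it assumes the two-space indentation that helm template emits, uses a deliberately naive line scanner instead of a YAML library, and the function name deployment_annotations is made up for illustration.

```python
import re


def _indent(line: str) -> int:
    """Number of leading spaces on a line."""
    return len(line) - len(line.lstrip(" "))


def deployment_annotations(manifest: str, name: str):
    """Return metadata.annotations of the Deployment `name`, or None if no such Deployment."""
    # helm template separates rendered resources with '---' lines.
    for doc in re.split(r"(?m)^---[ \t]*$", manifest):
        lines = [ln.rstrip() for ln in doc.splitlines()
                 if ln.strip() and not ln.lstrip().startswith("#")]
        if "kind: Deployment" not in lines:
            continue
        found_name, annotations = None, {}
        section = sub = None  # current top-level key / current key under metadata
        for ln in lines:
            depth, text = _indent(ln), ln.strip()
            if depth == 0:
                section, sub = text.split(":", 1)[0], None
            elif section == "metadata" and depth == 2:
                sub, _, value = text.partition(":")
                if sub == "name":
                    found_name = value.strip()
            elif section == "metadata" and sub == "annotations" and depth == 4:
                key, _, value = text.partition(": ")
                annotations[key.strip('"')] = value.strip().strip('"')
        if found_name == name:
            return annotations
    return None


# Trimmed copy of the default-backend Deployment from the output above.
SAMPLE = """\
---
# Source: ingress-nginx/templates/default-backend-deployment.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app.kubernetes.io/component: default-backend
  name: ingress-nginx-defaultbackend
  namespace: ingress
  annotations:
    my-custom/annotation: here
spec:
  replicas: 1
"""

print(deployment_annotations(SAMPLE, "ingress-nginx-defaultbackend"))
```

To run it against a real render, the output of helm template ingress-nginx charts/ingress-nginx/ -n ingress could be read from standard input and passed in place of SAMPLE.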

Create the dev-env from the current branch by running make dev-env.

Check pods

    kubectl get pods -n ingress-nginx
    NAME                                            READY   STATUS      RESTARTS   AGE
    ingress-nginx-admission-create-nw96j            0/1     Completed   0          38s
    ingress-nginx-admission-patch-c9zp7             0/1     Completed   0          38s
    ingress-nginx-controller-5d769b5644-jjkrw       1/1     Running     0          38s
    ingress-nginx-defaultbackend-84b9d5bdfc-fst8z   1/1     Running     0          38s

Both the controller and the defaultBackend are running. Open localhost in a browser or run curl localhost to see the default backend - 404 message, since no Ingress has been defined.

Get deployments.

    kubectl get deployments -n ingress-nginx
    NAME                           READY   UP-TO-DATE   AVAILABLE   AGE
    ingress-nginx-controller       1/1     1            1           8m27s
    ingress-nginx-defaultbackend   1/1     1            1           8m27s

Describe defaultBackend deployment to find the annotation. This is the intended result.

    kubectl describe deployment ingress-nginx-defaultbackend -n ingress-nginx
    Name:                   ingress-nginx-defaultbackend
    Namespace:              ingress-nginx
    CreationTimestamp:      Thu, 16 Jun 2022 13:12:46 +0100
    Labels:                 app.kubernetes.io/component=default-backend
                            app.kubernetes.io/instance=ingress-nginx
                            app.kubernetes.io/managed-by=Helm
                            app.kubernetes.io/name=ingress-nginx
                            app.kubernetes.io/part-of=ingress-nginx
                            app.kubernetes.io/version=1.2.1
                            helm.sh/chart=ingress-nginx-4.1.3
    Annotations:            deployment.kubernetes.io/revision: 1
                            my-custom/annotation: here
    Selector:               app.kubernetes.io/component=default-backend,app.kubernetes.io/instance=ingress-nginx,app.kubernetes.io/name=ingress-nginx
    Replicas:               1 desired | 1 updated | 1 total | 1 available | 0 unavailable
    StrategyType:           RollingUpdate
    MinReadySeconds:        0
    RollingUpdateStrategy:  25% max unavailable, 25% max surge
    Pod Template:
      Labels:           app.kubernetes.io/component=default-backend
                        app.kubernetes.io/instance=ingress-nginx
                        app.kubernetes.io/name=ingress-nginx
      Service Account:  ingress-nginx-backend
      Containers:
       ingress-nginx-default-backend:
        Image:        k8s.gcr.io/defaultbackend-amd64:1.5
        Port:         8080/TCP
        Host Port:    0/TCP
        Liveness:     http-get http://:8080/healthz delay=30s timeout=5s period=10s #success=1 #failure=3
        Readiness:    http-get http://:8080/healthz delay=0s timeout=5s period=5s #success=1 #failure=6
        Environment:  <none>
        Mounts:       <none>
      Volumes:        <none>
    Conditions:
      Type           Status  Reason
      ----           ------  ------
      Available      True    MinimumReplicasAvailable
      Progressing    True    NewReplicaSetAvailable
    OldReplicaSets:  <none>
    NewReplicaSet:   ingress-nginx-defaultbackend-84b9d5bdfc (1/1 replicas created)
    Events:
      Type    Reason             Age    From                   Message
      ----    ------             ----   ----                   -------
      Normal  ScalingReplicaSet  9m53s  deployment-controller  Scaled up replica set ingress-nginx-defaultbackend-84b9d5bdfc to 1
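The same check against the running cluster could be automated instead of reading the describe output by eye. This is a rough sketch under a few assumptions: kubectl is on the PATH and points at the dev-env cluster, and the helper names fetch_deployment and has_annotation are hypothetical. Only kubectl get deployment ... -o json is used, and the offline sample mirrors the Annotations shown above so the snippet runs without a cluster.

```python
import json
import subprocess


def fetch_deployment(name: str, namespace: str) -> dict:
    """Fetch a Deployment as a dict. Needs kubectl and a reachable cluster."""
    result = subprocess.run(
        ["kubectl", "get", "deployment", name, "-n", namespace, "-o", "json"],
        check=True, capture_output=True, text=True,
    )
    return json.loads(result.stdout)


def has_annotation(deployment: dict, key: str, value: str) -> bool:
    """True if metadata.annotations[key] equals value on the Deployment itself."""
    annotations = deployment.get("metadata", {}).get("annotations") or {}
    return annotations.get(key) == value


# Offline sample with the annotations reported by kubectl describe above.
sample = {
    "metadata": {
        "name": "ingress-nginx-defaultbackend",
        "annotations": {
            "deployment.kubernetes.io/revision": "1",
            "my-custom/annotation": "here",
        },
    }
}
print(has_annotation(sample, "my-custom/annotation", "here"))
```

Against the dev-env, has_annotation(fetch_deployment("ingress-nginx-defaultbackend", "ingress-nginx"), "my-custom/annotation", "here") would be the equivalent call.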

Checklist:

- [ ] My change requires a change to the documentation.

- [ ] I have updated the documentation accordingly.

- [x] I've read the CONTRIBUTION guide

- [ ] I have added tests to cover my changes.

- [x] All new and existing tests passed.

The committers listed above are authorized under a signed CLA.

- :white_check_mark: login: nu12 (eee6348d8d6befff5c62634f05bdef8ec71be129)

@nu12: This issue is currently awaiting triage.

If Ingress contributors determine this is a relevant issue, they will accept it by applying the triage/accepted label and provide further guidance.

The triage/accepted label can be added by org members by writing /triage accepted in a comment.

Instructions for interacting with me using PR comments are available here. If you have questions or suggestions related to my behavior, please file an issue against the kubernetes/test-infra repository.

Welcome @nu12!

It looks like this is your first PR to kubernetes/ingress-nginx 🎉. Please refer to our pull request process documentation to help your PR have a smooth ride to approval.

You will be prompted by a bot to use commands during the review process. Do not be afraid to follow the prompts! It is okay to experiment. Here is the bot commands documentation.

You can also check if kubernetes/ingress-nginx has its own contribution guidelines.

You may want to refer to our testing guide if you run into trouble with your tests not passing.

If you are having difficulty getting your pull request seen, please follow the recommended escalation practices. Also, for tips and tricks in the contribution process you may want to read the Kubernetes contributor cheat sheet. We want to make sure your contribution gets all the attention it needs!

Thank you, and welcome to Kubernetes. :smiley:

Hi @nu12. Thanks for your PR.

I'm waiting for a kubernetes member to verify that this patch is reasonable to test. If it is, they should reply with /ok-to-test on its own line. Until that is done, I will not automatically test new commits in this PR, but the usual testing commands by org members will still work. Regular contributors should join the org to skip this step.

Once the patch is verified, the new status will be reflected by the ok-to-test label.

I understand the commands that are listed here.


[APPROVALNOTIFIER] This PR is NOT APPROVED

This pull-request has been approved by: nu12

To complete the pull request process, please assign cpanato after the PR has been reviewed.

You can assign the PR to them by writing /assign @cpanato in a comment when ready.

The full list of commands accepted by this bot can be found here.

Approvers can indicate their approval by writing /approve in a comment

Approvers can cancel approval by writing /approve cancel in a comment

@nu12, thanks for the contribution.

- keel.sh is not explained

- datree is not explained

- kube-score is not explained

- No working example config is posted

- No screenshot of benefit is posted

- Association of custom-default-backend to annotation is not explained

For the above reasons, and to provide helpful insight into how this is an improvement, kindly create an issue and link it here. Please put the details of the problem you want to solve in the issue.

@longwuyuan, thank you for the comment. I hope to address some of your concerns below:

- Working example

Add the annotation in a custom values.yaml file as follows.

    # -- Annotations to be added to the defaultBackend Deployment
    ##
    annotations:
      my.custom/deployment: annotation

Then, running helm template charts/ingress-nginx will generate the manifest with the appropriate values for the annotation.

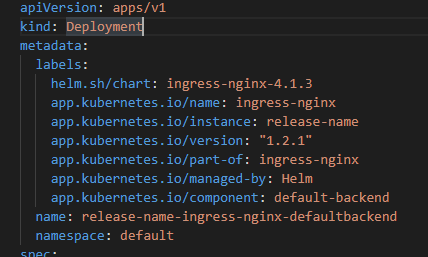

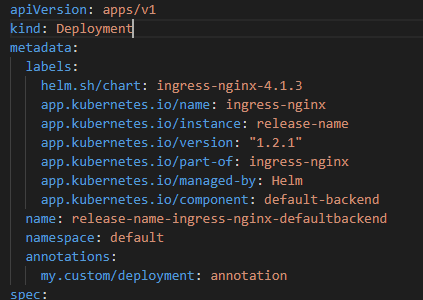

Please note in the screenshots that the defaultBackend deployment can only receive annotations through helm install or helm upgrade once the proposed changes are in place (2nd picture). Without them, the generated manifest contains no annotations for the resource (1st picture).

This is intended to work like the annotations for the controller deployment.

- Screenshot of benefit

Before the PR: there is no way to add the annotation via the values.yaml file.

After the PR: the above example will generate the following manifest.

- datree and kube-score

These tools run checks against the manifests generated by helm. Annotations on each manifest control which checks it is subject to.

- Skipping a check in datree
- Ignoring a test in kube-score

Enabling annotations on the defaultBackend adds an extra level of configuration for the deployment itself (not its pods or containers), which can be useful in such cases.
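To make the use case more concrete, here is a small sketch of how a values override for such tools could be generated. Several things are assumptions rather than facts from this PR: the annotation keys kube-score/ignore and datree.skip/<RULE> follow the formats I recall from those tools' documentation, the check identifiers are placeholders, and default_backend_values is a hypothetical helper. The output is JSON, which should be accepted by helm -f because JSON is a subset of YAML.

```python
import json


def default_backend_values(annotations: dict) -> dict:
    """Build a values override that enables the defaultBackend and annotates its Deployment."""
    return {"defaultBackend": {"enabled": True, "annotations": dict(annotations)}}


overrides = default_backend_values({
    # Assumed kube-score format: comma-separated IDs of the tests to ignore.
    "kube-score/ignore": "some-test-id",
    # Assumed datree format: datree.skip/<RULE_IDENTIFIER>: <reason for skipping>.
    "datree.skip/SOME_RULE_IDENTIFIER": "not relevant for the default backend",
})

# Save this output as e.g. overrides.json and pass it with -f to helm template.
print(json.dumps(overrides, indent=2))
```

With the change from this PR, the rendered defaultBackend Deployment would then carry both annotations in its metadata.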

https://github.com/kubernetes/ingress-nginx/issues/8679

Thanks for the info @nu12

/ok-to-test

By working example, I was hoping you would show an annotation that you specify on the default-backend manifest, and what then actually happens to the default-backend workload that cannot happen now. I hope you can edit your info to show that benefit.

Once again thank you for the contribution.

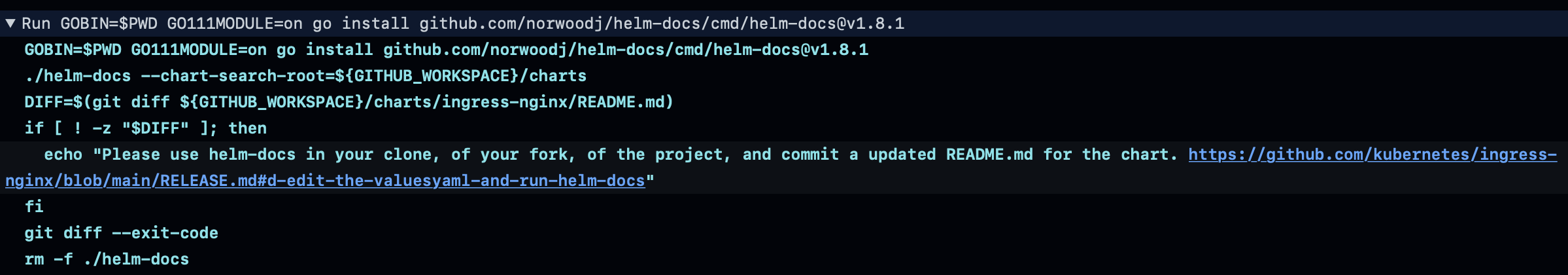

You may need to run helm-docs locally, which generates a new /charts/ingress-nginx/README.md, and check in the new README.md, making sure to avoid checking in the helm-docs binary executable. It's detailed in the /RELEASE.md of the project.

@longwuyuan thanks for pointing out the documentation update step.

Edited the previous comments to fill some gaps.

Updated test procedure details.

The Kubernetes project currently lacks enough contributors to adequately respond to all issues and PRs.

This bot triages issues and PRs according to the following rules:

- After 90d of inactivity, lifecycle/stale is applied
- After 30d of inactivity since lifecycle/stale was applied, lifecycle/rotten is applied
- After 30d of inactivity since lifecycle/rotten was applied, the issue is closed

You can:

- Mark this issue or PR as fresh with /remove-lifecycle stale
- Mark this issue or PR as rotten with /lifecycle rotten
- Close this issue or PR with /close
- Offer to help out with Issue Triage

Please send feedback to sig-contributor-experience at kubernetes/community.

/lifecycle stale
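For readers unfamiliar with the triage rules above, the timeline can be sketched as a small function. This is an illustration only, not the bot's actual implementation: it assumes no activity at all after the last comment and that each label is applied exactly when its threshold is reached, and the name lifecycle_state is made up.

```python
from datetime import date, timedelta

STALE_AFTER = timedelta(days=90)   # inactivity before lifecycle/stale
ROTTEN_AFTER = timedelta(days=30)  # further inactivity before lifecycle/rotten
CLOSE_AFTER = timedelta(days=30)   # further inactivity before closing


def lifecycle_state(last_activity: date, today: date) -> str:
    """Return the state an untouched issue or PR would be in on `today`."""
    idle = today - last_activity
    if idle >= STALE_AFTER + ROTTEN_AFTER + CLOSE_AFTER:
        return "closed"
    if idle >= STALE_AFTER + ROTTEN_AFTER:
        return "rotten"
    if idle >= STALE_AFTER:
        return "stale"
    return "active"


start = date(2022, 6, 16)
for days in (10, 90, 120, 150):
    print(days, lifecycle_state(start, start + timedelta(days=days)))
```

Any comment or command such as /remove-lifecycle stale counts as activity and would restart the clock in this simplified model.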

The Kubernetes project currently lacks enough active contributors to adequately respond to all issues and PRs.

This bot triages issues and PRs according to the following rules:

- After 90d of inactivity, lifecycle/stale is applied
- After 30d of inactivity since lifecycle/stale was applied, lifecycle/rotten is applied
- After 30d of inactivity since lifecycle/rotten was applied, the issue is closed

You can:

- Mark this issue or PR as fresh with /remove-lifecycle rotten
- Close this issue or PR with /close
- Offer to help out with Issue Triage

Please send feedback to sig-contributor-experience at kubernetes/community.

/lifecycle rotten

The Kubernetes project currently lacks enough active contributors to adequately respond to all issues and PRs.

This bot triages PRs according to the following rules:

- After 90d of inactivity, lifecycle/stale is applied
- After 30d of inactivity since lifecycle/stale was applied, lifecycle/rotten is applied
- After 30d of inactivity since lifecycle/rotten was applied, the PR is closed

You can:

- Reopen this PR with /reopen
- Mark this PR as fresh with /remove-lifecycle rotten
- Offer to help out with Issue Triage

Please send feedback to sig-contributor-experience at kubernetes/community.

/close

@k8s-triage-robot: Closed this PR.
