vsphere-csi-driver


Deploying vSphere CSI also schedules node pods on master nodes; docs and deployment manifests need updating

Is this a BUG REPORT or FEATURE REQUEST?:

Uncomment only one, leave it on its own line:

/kind bug

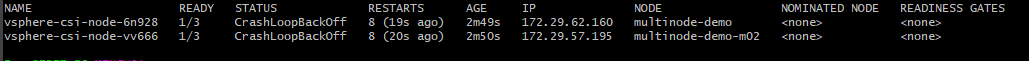

What happened: The tolerations on the DaemonSet allow csiplugin to run on control-plane nodes.

What you expected to happen: csiplugin runs on worker nodes only.

How to reproduce it (as minimally and precisely as possible): The node selector `kubernetes.io/os: linux` applies to all nodes, and the DaemonSet has the following tolerations:

    - effect: NoExecute
      operator: Exists
    - effect: NoSchedule
      operator: Exists

Even with the taint in place, this allows the DaemonSet pods to schedule on control-plane nodes. After removing the taint, the pods were scheduled on worker nodes only. See also the documentation: https://docs.vmware.com/en/VMware-vSphere-Container-Storage-Plug-in/2.0/vmware-vsphere-csp-getting-started/GUID-4E47B9F1-B250-4B36-8FEC-8F45E6529D23.html
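As a rough illustration of why blanket tolerations defeat a control-plane taint, here is a minimal sketch. The taint key `node-role.kubernetes.io/master` and the node name are assumptions (the key is the kubeadm default for Kubernetes 1.22; the actual taint on the reporter's node is only shown in the screenshots). In Kubernetes, a toleration with `operator: Exists` and no `key` matches every taint that carries the same effect, whatever its key.

```yaml
# Node excerpt. The taint below is an assumed example; check the real one
# with `kubectl describe node <node_name>`.
apiVersion: v1
kind: Node
metadata:
  name: master-0                # hypothetical node name
spec:
  taints:
    - key: node-role.kubernetes.io/master
      effect: NoSchedule
---
# DaemonSet pod template excerpt (fragment only, not a complete manifest).
spec:
  template:
    spec:
      nodeSelector:
        kubernetes.io/os: linux # true for every Linux node, masters included
      tolerations:
        - effect: NoExecute
          operator: Exists      # no key: tolerates any NoExecute taint
        - effect: NoSchedule
          operator: Exists      # no key: tolerates any NoSchedule taint,
                                # including the master taint above
```

With this combination the scheduler has no reason to keep the pods off the control-plane node: the selector matches and the taint is tolerated.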

There should be a different node selector for the Linux DaemonSet and the Windows DaemonSet, or the DaemonSets should ship with no tolerations at all, so that users add tolerations based on their environment.

Anything else we need to know?: A label should be added to each worker node that relies on CSI:

    kubectl label node <worker_node> vsphere-csi-node=true

The nodeSelector should then be this, or something like it:

    nodeSelector:
      vsphere-csi-node: "true"
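A minimal sketch of how the proposed label could sit next to the existing OS selector. This is not the upstream manifest; the `vsphere-csi-node` label is the name suggested in this report, and the surrounding field path is the standard DaemonSet pod template layout.

```yaml
# DaemonSet pod template excerpt (fragment only, not a complete manifest).
spec:
  template:
    spec:
      nodeSelector:
        kubernetes.io/os: linux
        # Label proposed in this issue. Label values are strings, so the
        # value must be quoted; an unquoted true is parsed as a boolean.
        vsphere-csi-node: "true"
```

Every entry in `nodeSelector` has to match, so a control-plane node without the `vsphere-csi-node` label would be skipped even if the blanket tolerations stay in place.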

Environment:

- csi-vsphere version: 2.7

- vsphere-cloud-controller-manager version: 2.7

- Kubernetes version: 1.22

- vSphere version: 6.7

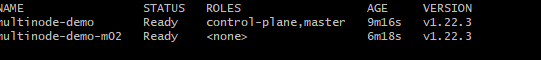

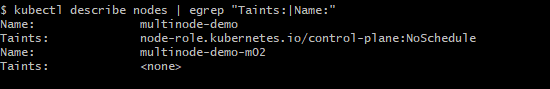

Here are the images: 2 nodes, 1 master and 1 worker. The master has the required taint, and the DaemonSet pods are still scheduled on the master node.

In case it's helpful, we've been modifying the nodeSelector & tolerations (of the Deployment, not the Daemonset) since we started using the vsphere-csi-driver a couple years (and several versions) ago:

    ...
    # nodeSelector/tolerations differ purposefully from upstream
    nodeSelector:
      node.kubernetes.io/role: master
    tolerations:
      - key: node-role.kubernetes.io/master
        effect: NoSchedule
    ...

/assign @divyenpatel

The Kubernetes project currently lacks enough contributors to adequately respond to all issues.

This bot triages un-triaged issues according to the following rules:

- After 90d of inactivity, `lifecycle/stale` is applied
- After 30d of inactivity since `lifecycle/stale` was applied, `lifecycle/rotten` is applied
- After 30d of inactivity since `lifecycle/rotten` was applied, the issue is closed

You can:

- Mark this issue as fresh with `/remove-lifecycle stale`
- Close this issue with `/close`
- Offer to help out with Issue Triage

Please send feedback to sig-contributor-experience at kubernetes/community.

/lifecycle stale

The Kubernetes project currently lacks enough active contributors to adequately respond to all issues.

This bot triages un-triaged issues according to the following rules:

- After 90d of inactivity, `lifecycle/stale` is applied
- After 30d of inactivity since `lifecycle/stale` was applied, `lifecycle/rotten` is applied
- After 30d of inactivity since `lifecycle/rotten` was applied, the issue is closed

You can:

- Mark this issue as fresh with `/remove-lifecycle rotten`
- Close this issue with `/close`
- Offer to help out with Issue Triage

Please send feedback to sig-contributor-experience at kubernetes/community.

/lifecycle rotten

/remove-lifecycle rotten

/lifecycle stale

/lifecycle rotten

The Kubernetes project currently lacks enough active contributors to adequately respond to all issues and PRs.

This bot triages issues according to the following rules:

- After 90d of inactivity, `lifecycle/stale` is applied
- After 30d of inactivity since `lifecycle/stale` was applied, `lifecycle/rotten` is applied
- After 30d of inactivity since `lifecycle/rotten` was applied, the issue is closed

You can:

- Reopen this issue with `/reopen`
- Mark this issue as fresh with `/remove-lifecycle rotten`
- Offer to help out with Issue Triage

Please send feedback to sig-contributor-experience at kubernetes/community.

/close not-planned

@k8s-triage-robot: Closing this issue, marking it as "Not Planned".
