amazon-cloudwatch-agent

amazon-cloudwatch-agent copied to clipboard

amazon-cloudwatch-agent copied to clipboard

Support global metric dimensions

Description of the issue

Addresses https://github.com/aws/amazon-cloudwatch-agent/issues/172

- Frequently a specific EC2 deployment will have a set of Dimensions that should be associated with all published metrics

- Support this in the metrics configuration

Description of changes

-

Add a

global_dimensionsproperty to the metric configuration to support this use-case{ "agent": { "region": "us-east-1" }, "metrics": { "append_dimensions": { "AutoScalingGroupName": "${aws:AutoScalingGroupName}", "ImageId": "${aws:ImageId}", "InstanceId": "${aws:InstanceId}", "InstanceType": "${aws:InstanceType}" }, "global_dimensions": { "Environment": "test", "DeploymentStack": "green" }, "metrics_collected": { "mem": { "measurement": ["mem_used_percent"] }, "disk": { "measurement": ["used_percent"], "resources": ["*"] } } } } -

I opted not to use the existing

append_dimensionsproperty because:- The code required is quite different for the two use-cases

- This could be a breaking change if people have non

aws:values in theappend_dimensionsfield

-

With the current implementation, any dimension values defined in

global_dimensionsoverride dimensions set for individual metrics. This can easily be altered.

License

By submitting this pull request, I confirm that you can use, modify, copy, and redistribute this contribution, under the terms of your choice.

Tests

I have added / adjusted tests for:

- JSON to TOML translation of the configuration

- Extended tests for

BuildDimensionsto include the cases where global dimensions are provided

Requirements

Before commit the code, please do the following steps.

- Run

make fmtandmake fmt-sh - Run

make linter

- Are these instructions up-to-date?

make linterfails completely andmake fmtandmake fmt-shmake loads of changes to files I've not touched.

Have you built the agent locally and ran this end-to-end? I think that's one thing we'll need to confirm before accepting the change

Codecov Report

Merging #673 (8b1fc4f) into main (081cc0b) will increase coverage by

0.03%. The diff coverage is100.00%.

@@ Coverage Diff @@

## main #673 +/- ##

==========================================

+ Coverage 56.87% 56.90% +0.03%

==========================================

Files 364 376 +12

Lines 17049 17935 +886

==========================================

+ Hits 9696 10206 +510

- Misses 6802 7135 +333

- Partials 551 594 +43

| Impacted Files | Coverage Δ | |

|---|---|---|

| translator/config/schema.go | 81.81% <ø> (ø) |

|

| translator/totomlconfig/toTomlConfig.go | 72.72% <ø> (ø) |

|

| plugins/outputs/cloudwatch/cloudwatch.go | 76.34% <100.00%> (+1.72%) |

:arrow_up: |

| ...late/metrics/global_dimensions/globalDimensions.go | 100.00% <100.00%> (ø) |

|

| ...puts/logfile/tail/file_deleting_checker_windows.go | 36.36% <0.00%> (ø) |

|

| ...windows_event_log/wineventlog/wineventlogrecord.go | 62.50% <0.00%> (ø) |

|

| plugins/inputs/win_perf_counters/pdh.go | 65.47% <0.00%> (ø) |

|

| plugins/inputs/logfile/tmpfile_windows.go | 40.00% <0.00%> (ø) |

|

| ...nputs/windows_event_log/wineventlog/wineventlog.go | 36.40% <0.00%> (ø) |

|

| ...s/inputs/windows_event_log/wineventlog/sys_call.go | 47.36% <0.00%> (ø) |

|

| ... and 7 more |

Help us with your feedback. Take ten seconds to tell us how you rate us. Have a feature suggestion? Share it here.

LGTM but looks like failed unit tests after the change. Maybe I didn't look hard enough at the unit test code. Once it's passing I'm good to approve

Another interesting find I have found is that: we could leverage global_tags from telegraf. However, it would be override by tags within each plugin. Have we considered this option before? Will try to look the PR EOD.

This could be a breaking change if people have non aws: values in the append_dimensions field

I took this at face value, but after reviewing with product today, I think I don't understand the concern here. Don't we fail config translation today if you supply a non aws: prefixed value in the outer append_dimensions configuration?

If there's no real concern about breaking existing customers, I think it makes sense to consolidate the logic into the existing append_dimensions field, though I do hate pushing for that so late after the fact. Realistically, the existing JSON structure is already confusing enough as it is, so I think we have to be diligent with knowing what warrants adding more bloat to it.

Separate thing to validate with the change e2e regardless of the above - it was brought to my attention that there are some metrics configurations that do not support an inner append_dimensions field. One thing that we'll need to verify is that the global dimensions are added regardless of that fact. For example, our statsd plugin does not allow for statsd-specific append dimensions, but it's likely that if you are trying to append global dimensions, you'll want that to be present

LGTM but looks like failed unit tests after the change. Maybe I didn't look hard enough at the unit test code. Once it's passing I'm good to approve

Ah yes. Sorry I didn't spot these before (some of the TestSEH1Distribution tests always fail on my Mac so it's sometimes hard to spot genuine failures). I've pushed a fix for the broken tests now.

I took this at face value, but after reviewing with product today, I think I don't understand the concern here. Don't we fail config translation today if you supply a non

aws:prefixed value in the outer append_dimensions configuration?If there's no real concern about breaking existing customers, I think it makes sense to consolidate the logic into the existing

append_dimensionsfield, though I do hate pushing for that so late after the fact. Realistically, the existing JSON structure is already confusing enough as it is, so I think we have to be diligent with knowing what warrants adding more bloat to it.

I totally agree about limiting the complexity of the configuration but I've tried supplying non aws: prefixed values and they seem to be silently dropped. Certainly no tests fail if you e.g. add some in here: https://github.com/aws/amazon-cloudwatch-agent/blob/main/translator/config/sampleSchema/validLinuxMetrics.json#L106 and I can't find any code in the translator/translate/metrics/append_dimensions file tree that would reject a value that doesn't match one of the currently-supported values.

Separate thing to validate with the change e2e regardless of the above - it was brought to my attention that there are some metrics configurations that do not support an inner append_dimensions field. One thing that we'll need to verify is that the global dimensions are added regardless of that fact. For example, our statsd plugin does not allow for statsd-specific append dimensions, but it's likely that if you are trying to append global dimensions, you'll want that to be present

I can't see any reason why the global dimensions wouldn't be added provided they still use the CloudWatch output plugin but it would be good to check end-to-end if possible.

and I can't find any code in the

translator/translate/metrics/append_dimensionsfile tree that would reject a value that doesn't match one of the currently-supported values.

Ok. I'll take a look today to try to trace through where they're getting silently dropped.

--- FAIL: TestBackoffRetries (66.47s)

cloudwatch_test.go:807:

Error Trace: cloudwatch_test.go:807

Error: "1s" is not greater than "1.022734529s"

Test: TestBackoffRetries

FAIL

looks like a weird failure. I'm assuming this is flakiness and not related to your change. Will rerun the mac tests

@paulbrimicombe so in the append_dimensions translation, we register a specific set of rules to apply. The key problem that is causing unrecognized values to be dropped is that there isn't a matching rule. It looks kind of messy and not fit for arbitrary strings, unfortunately. Because there is no child rule that extracts the arbitrary key-value pairs, they never make it into the translated config.

The key problem that is causing unrecognized values to be dropped is that there isn't a matching rule. It looks kind of messy and not fit for arbitrary strings, unfortunately. Because there is no child rule that extracts the arbitrary key-value pairs, they never make it into the translated config.

@SaxyPandaBear I think this is all deliberate in that the original developers wanted to drop unsupported keys and the easiest way to do that was to iterate through the supported keys. It wouldn't be particularly difficult to extract any additional keys but my worry is that people who do have additional keys in their config already will suddenly have new dimensions appended to their metrics which would be a breaking change.

my worry is that people who do have additional keys in their config already will suddenly have new dimensions appended to their metrics which would be a breaking change

I'll start a conversation internally about it. My gut reaction is that if someone has configured an arbitrary key-value pair in their append dimensions and hasn't complained that they don't show up as dimensions on CW, they don't care either way. I'm a little less worried about this being a breaking change because it's meant for static strings, at least from a cost perspective. I can get confirmation to be sure, but I don't think that customers who do this should receive a spike in CW billing. If you start with [instanceID, autoScalingGroupName], there's already cardinality there so you're paying for unique metrics per instance ID and ASG name. Adding "foo=bar" to the dimension set is still going to produce the same number of metrics, just with another dimensions. At least that's what my gut tells me.

Back to the main point, I don't even really see an issue operationally. I just tested something out myself. I set up a config with just instance ID as the global append dimension:

{

"agent": {

"metrics_collection_interval": 60,

"run_as_user": "root"

},

"metrics": {

"append_dimensions": {

"InstanceId": "${aws:InstanceId}"

},

"metrics_collected": {

"mem": {

"measurement": [

"mem_used_percent"

],

"metrics_collection_interval": 60

}

}

}

}

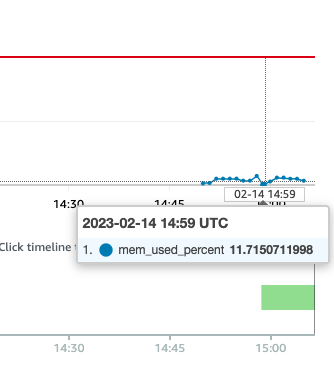

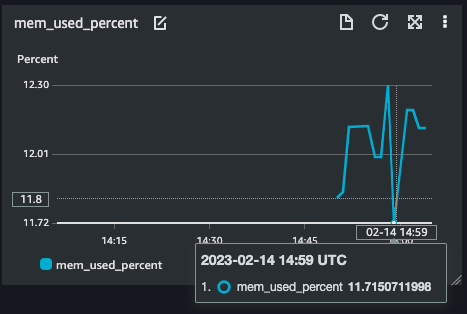

Then I set up a dashboard widget for the mem used metric, and also set an alarm.

Afterwards, I added InstanceType as a dimension:

{

"agent": {

"metrics_collection_interval": 60,

"run_as_user": "root"

},

"metrics": {

"append_dimensions": {

"InstanceId": "${aws:InstanceId}",

"InstnaceType": "${aws:InstanceType}"

},

"metrics_collected": {

"mem": {

"measurement": [

"mem_used_percent"

],

"metrics_collection_interval": 60

}

}

}

}

And monitored the alarm / dashboard widget. The metrics still flowed to the alarm and widget. 14:49 UTC is when I restarted the agent with the updated config, so that's why I highlighted that timestamp in the metric

my worry is that people who do have additional keys in their config already will suddenly have new dimensions appended to their metrics which would be a breaking change

My gut reaction is that if someone has configured an arbitrary key-value pair in their append dimensions and hasn't complained that they don't show up as dimensions on CW, they don't care either way.

This was what the original bug report #172 was requesting / complaining about so some people obviously do care about this (including my organisation...).

I'm a little less worried about this being a breaking change because it's meant for static strings, at least from a cost perspective. I can get confirmation to be sure, but I don't think that customers who do this should receive a spike in CW billing. If you start with [instanceID, autoScalingGroupName], there's already cardinality there so you're paying for unique metrics per instance ID and ASG name. Adding

"foo=bar"to the dimension set is still going to produce the same number of metrics, just with another dimensions. At least that's what my gut tells me.

I'm very confident that you're correct that this won't introduce additional costs for clients. But that's not my main worry (see commentary below).

Back to the main point, I don't even really see an issue operationally. I just tested something out myself. I set up a config with just instance ID as the global append dimension:

snip...

Then I set up a dashboard widget for the mem used metric, and also set an alarm.

Afterwards, I added InstanceType as a dimension:

snip...

And monitored the alarm / dashboard widget. The metrics still flowed to the alarm and widget.

All you have done here is confirmed the current behaviour — that unsupported append_dimensions keys are silently dropped when the JSON configuration is converted to TOML. If we suddenly start treating arbitrary static key / value pairs in append_dimensions as dimensions to be added to all metrics, client's alarms / metric queries / search expressions will be broken because every metric will have a different set of dimensions to those included previously.

In your second example, your mem_used_percent metric would have another dimension of InstnaceType: ${aws:InstanceType} added to it, meaning that both the alarm configuration and the dashboard widget would be broken (dimensions have to match exactly for CloudWatch alarms — if you used a very specific type of search expression, your dashboard widget might be OK, but most likely it wouldn't be).

This is why I think we should consider using append_dimensions as the configuration key for these global dimensions as a breaking change (some client's monitoring will be broken when they upgrade to the latest version of the CloudWatch Agent).

If any of this doesn't make sense / seems wrong @SaxyPandaBear , please let me know!

🤦 I had a typo in the config so it didn't append the other dimension. That's totally my bad. Okay that clears it up. I'll talk with others internally. Don't think there's a good way to figure out who would be impacted by this if we were to introduce the breaking change

This PR was marked stale due to lack of activity.

My gut reaction is that if someone has configured an arbitrary key-value pair in their append dimensions and hasn't complained that they don't show up as dimensions on CW, they don't care either way.

I'm agree with Andrew on this with using append_dimensions as a replace for global_dimensions. However, I'm not align with the implementations

- Global dimensions should be overriden by plugin dimensions , not other way around though

- The implementation shares the same mechanism with

global_tags; however, only the dimension's order is different so I'm still not sure the reason why we are not leverage it? (e.g using global_tags with non:AWS)

If others agrees with it then I'm fine with it, but this would be not a good behavior when introducing to other exporters in the future.

My gut reaction is that if someone has configured an arbitrary key-value pair in their append dimensions and hasn't complained that they don't show up as dimensions on CW, they don't care either way.

I'm agree with Andrew on this with using

append_dimensionsas a replace for global_dimensions

@khanhntd to me, as a client of this application, this would definitely be a breaking change. My production dashboards / alarms / monitoring etc. would be broken by a routine update to the CloudWatch Agent for the same input configuration.

- The implementation shares the same mechanism with

global_tags; however, only the dimension's order is different so I'm still not sure the reason why we are not leverage it? (e.g using global_tags with non:AWS)

This is interesting. I'd completely forgotten about the Telegraf global_tags option (I'd even used it a long time ago in a different project 🤦 ).

There seems to be some half-built(?) support for use of the built-in Telegraf global_tags (e.g. translator/translate/globaltags/globaltags.go) but it's untested, undocumented and doesn't seem to actually work with the current version of the agent (e.g. if I add a global_tags entry to the top level of the JSON config file, it never ends up in the TOML file — the service starts and creates the TOML file but something is filtering it out before it gets there).

If we do use global_tags instead of this PR, there are some things we need to decide / work out:

- Where

global_tagsshould appear in the JSON. Currently it looks like it's being picked up from the root of the JSON -- should it really be inmetricsinstead? - According to the Telegraf docs, "Global tags are overriden [sic] by tags set by plugins." — is this the behaviour we want?

- Do we want to have deterministic behaviour wrt. global vs local tags when there are too many tags / dimensions? I don't know whether we have access to the information we'd need in the output plugin (will we have to do anything to pass through the information about which tags are global?)

- How would we test that this works?

- Where

global_tagsshould appear in the JSON. Currently it looks like it's being picked up from the root of the JSON -- should it really be in metrics instead?

Yes we can put a translator for it. (e.g if you have global dimension in JSON, put it in global tags). Therefore, you can put the global_dimensions as part of metrics and global_tags are not being used based on my research (internal and external)

- According to the Telegraf docs, "Global tags are overriden [sic] by tags set by plugins." — is this the behaviour we want?

IMO, yes. Plugin dimension should be prioritized over global plugin. Since if the customers are not setting at plugin level, then there is no reason why the customers would expect it to begin with.

- Do we want to have deterministic behaviour wrt. global vs local tags when there are too many tags / dimensions? I don't know whether we have access to the information we'd need in the output plugin (will we have to do anything to pass through the information about which tags are global?)

I have the same thought of limiting the global tags to put some constraint on it. However, there are two ways to avoid that:

- Using aggregating dimensions which will generate more metrics but shimming down the dimensions. However, increasing the cost for customers

- Telegraf also has a feature of

tagdroportagfilterto filter out all the unwanted tags and can be used to filter global tags ifglobal tags+plugin tagsare over thirdty.

The difference is defining the default behavior for agent and the intention on which perspective (customer or us).

- How would we test that this works?

The most and easiest way that I could think off would be writing the toml config for agent (e.g

[global_tags]

user = "${USER}"

[[inputs.mem]]

)

The reason why I would bring up global_tags would be:

- Firstly, it won't be specific to any output plugins

- Secondly, support dimensions as part of traces and logs in the future

- Thirdly, reduce all the functional code that we want to rely on CloudWatch

Let's me know what are your thoughts about this too !

This PR was marked stale due to lack of activity.

IMO, yes. Plugin dimension should be prioritized over global plugin. Since if the customers are not setting at plugin level, then there is no reason why the customers would expect it to begin with.

Sorry but I'm not sure what you mean by "if the customers are not setting at plugin level, then there is no reason why the customers would expect it to begin with". Bear in mind that customers may well not be in control of the output of plugins in use (e.g. they are from third-party tools etc.). The original requests for global dimensions was to allow clients to add arbitrary dimensions to all metrics, which also wouldn't have been set at the plugin level.

Telegraf also has a feature of tagdrop or tagfilter to filter out all the unwanted tags and can be used to filter global tags if global tags + plugin tags are over thirdty.

How can we know at runtime if global tags + plugin tags are over thirty? As I understand it, the Telegraf config for things like tagdrop and tagfilter are static and so can't change depending on the number of tags for a specific metric etc.

Firstly, it won't be specific to any output plugins

This I feel may be a drawback in that it might have unintended consequences for other output plugins. The feature request is for CloudWatch metrics — are we sure that other output plugins would want the same global tags applied (now and in future)?

Pragmatically I think we need to try to actually come to some decision on this because it's turning out to be taking a long time.

Do we think that using global_dimensions is the right thing to use? That would mean:

- The additional dimensions would always be applied to all output plugins

- We have less fine-grained control over what happens when there are too many dimensions (e.g. deterministically choosing whether we prioritise plugin or global dimensions etc.)

- I worry a bit about testing (esp. for the conditions when the dimensions overflow)

Or, do we think using something like the code in the PR is the right thing to use? This would mean:

- Additional dimensions are only added to CloudWatch metrics

- We have fine-grained control over what happens when the number of dimensions overflows

- We can unit test lots of the additional code

- There is more code / more to maintain

## Notes on testing global_tags

- From the code, it looks like

global_tagsis supported. - I can add unit tests to (e.g. by adding it to the JSON and TOML files here https://github.com/aws/amazon-cloudwatch-agent/blob/main/translator/totomlconfig/toTomlConfig_test.go#L166-L168) that prove that the translation works correctly (there are some changes required to the tests to make sure they actually test this properly)

- On a real copy of the agent, however, any

global_tagsI add to the JSON don't get migrated to the TOML on startup for some reason. They seem to get stripped out by something. Any idea why that would be?

Hi @paulbrimicombe , Will come back to the PR within today. Sorry for taking a long time to respond though

This PR was marked stale due to lack of activity.

This PR was marked stale due to lack of activity.

Hello @khanhntd 5 months have passed, can you please share with us what is the opinion of the CloudWatch team on this issue and the solution proposed in this PR?

I think the limitation this PR is trying to address would already help a lot for the folks monitoring Ec2 tasks, since the dimensions you are forcing your users to use, might not make sense for some (most?) use cases.

But even more necessary for ECS or Serverless applications.... for which the current interface of the CloudWatch Agent does not allow ANY global dimensions to be set! See: https://github.com/aws/amazon-cloudwatch-agent/issues/864

Moreover, in theory, one could add a CloudWatch agent to his on-promise stack if he wants to use CloudWatch as a monitoring solution. So the CloudWatch Agent should allow some kind of generic configuration, agnostic of the stack used, and I think this PR goes exactly in this direction.

In general, when you look at the CloudWatch Agent interface you can really feel it was designed around EC2 which make sense historically, but AWS should now try to support and facilitate way more than that because of the portfolio of products it offers 🙏

This PR was marked stale due to lack of activity.

Forced to "up" so the PR does not stale... cc @khanhntd