hudi


[SUPPORT] HUDI partition table duplicate data cow hudi 0.10.0 flink 1.13.1

Tips before filing an issue

- Have you gone through our FAQs?
- Join the mailing list to engage in conversations and get faster support at [email protected].
- If you have triaged this as a bug, then file an issue directly.

Describe the problem you faced

A clear and concise description of the problem.

To Reproduce

Steps to reproduce the behavior:

Hudi sink config:

'connector' = 'hudi',
'hoodie.table.name' = 'xxx',
'table.type' = 'COPY_ON_WRITE',
'path' = 'xxxx',
'hoodie.datasource.write.keygenerator.type' = 'COMPLEX',
'hoodie.datasource.write.recordkey.field' = 'id',
'hoodie.cleaner.policy' = 'KEEP_LATEST_FILE_VERSIONS',
'hoodie.cleaner.fileversions.retained' = '20',
'hoodie.keep.min.commits' = '30',
'hoodie.keep.max.commits' = '40',
'hoodie.cleaner.commits.retained' = '20',
'write.operation' = 'upsert',
'write.commit.ack.timeout' = '60000000',
'write.sort.memory' = '128',
'write.task.max.size' = '1024',
'write.merge.max_memory' = '100',
'write.tasks' = '96',
'write.precombine' = 'true',
'write.precombine.field' = 'meta_es_offset',
'index.state.ttl' = '0',
'index.global.enabled' = 'false',
'hive_sync.enable' = 'true',
'hive_sync.table' = 'xxx',
'hive_sync.auto_create_db' = 'true',
'hive_sync.mode' = 'hms',
'hive_sync.metastore.uris' = 'xxx',
'hive_sync.db' = 'xxx',
'hoodie.datasource.write.partitionpath.field' = 'year,month,day',
'hoodie.datasource.write.hive_style_partitioning' = 'true',
'hive_sync.partition_fields' = 'year,month,day',
'hive_sync.partition_extractor_class' = 'org.apache.hudi.hive.MultiPartKeysValueExtractor',
'index.bootstrap.enabled' = 'true'

description:

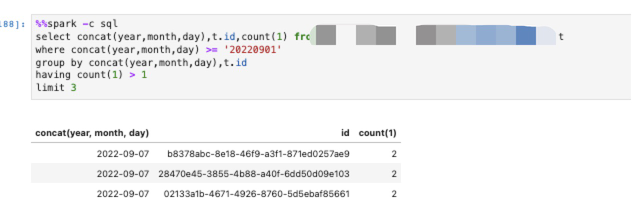

After initializing the historical data, we set scan.startup.mode to timestamp and set the timestamp to an earlier point; duplicates then occurred. If we instead restart the job from a checkpoint, the data is correct.

Duplicate data result:
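Since the result screenshot is not available, a text version of the duplicate check could be produced by grouping on Hudi's `_hoodie_record_key` meta column and keeping keys that occur more than once. The following is a minimal sketch under stated assumptions: Python's built-in `sqlite3` stands in for the Hive/Flink/Spark query engine, the table name `hudi_table` and the sample rows are hypothetical, and only the two meta columns needed for the check are modeled. The same `GROUP BY ... HAVING COUNT(*) > 1` query should work against the synced Hive table once `hudi_table` is replaced with the real name, but that has not been verified here.

```python
import sqlite3

# Hypothetical table name; replace with the synced Hive table when running for real.
# Keys with COUNT(*) > 1 are duplicates; partition_cnt shows whether the copies
# sit in the same partition (1) or are spread across different partitions (> 1).
DUPLICATE_QUERY = """
SELECT _hoodie_record_key,
       COUNT(*) AS cnt,
       COUNT(DISTINCT _hoodie_partition_path) AS partition_cnt
FROM hudi_table
GROUP BY _hoodie_record_key
HAVING COUNT(*) > 1
ORDER BY _hoodie_record_key
"""


def find_duplicate_keys(rows):
    """rows: iterable of (record_key, partition_path) tuples (illustrative data only)."""
    conn = sqlite3.connect(":memory:")
    try:
        # In-memory stand-in for the Hudi table, modeling only two meta columns.
        conn.execute(
            "CREATE TABLE hudi_table ("
            "_hoodie_record_key TEXT, "
            "_hoodie_partition_path TEXT)"
        )
        conn.executemany("INSERT INTO hudi_table VALUES (?, ?)", rows)
        return conn.execute(DUPLICATE_QUERY).fetchall()
    finally:
        conn.close()


if __name__ == "__main__":
    # Hypothetical rows: "id:1" was written twice into the same partition.
    sample = [
        ("id:1", "year=2021/month=12/day=01"),
        ("id:1", "year=2021/month=12/day=01"),
        ("id:2", "year=2021/month=12/day=02"),
    ]
    for key, cnt, partition_cnt in find_duplicate_keys(sample):
        print(key, cnt, partition_cnt)
```

Reporting `partition_cnt` alongside the count may help narrow things down, because with 'index.global.enabled' = 'false' the index is not global, so a key appearing in two different partitions and a key duplicated inside one partition can point to different causes.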

Expected behavior

A clear and concise description of what you expected to happen.

Environment Description

- Hudi version : 0.10.0
- Flink version : 1.13.1
- Hive version : 3.1.2
- Hadoop version : 3.1.0
- Storage (HDFS/S3/GCS..) : S3
- Running on Docker? (yes/no) : no

Additional context

Add any other context about the problem here.

Stacktrace

Add the stacktrace of the error.

mark

@yuzhaojing @danny0405 Could any one of you chime in here?

@yuzhaojing @danny0405 : gentle ping. @zwj0110 : feel free to close if the issue is resolved.

There are not enough details here. Can you try 0.12.1 and see whether the duplicates still happen? I will close the issue for now.