VictoriaMetrics

Support Prometheus Metric Metadata API

Is your feature request related to a problem? Please describe.

This feature request is to support the Prometheus Metric Metadata API at /api/v1/metadata in Prometheus v2.15.0+. Similar to #643, but this endpoint returns metadata about scraped metrics without any associated target information.

vmselect currently returns an empty placeholder response for this endpoint (see e6bf88a4d4948d60b9f1b9c432db46af7001368c).
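
For reference, the Prometheus HTTP API returns metadata from this endpoint as a JSON object keyed by metric name, where each value is a list of objects with `type`, `help`, and `unit` fields. Below is a minimal Python sketch of that shape. The helper name `build_metadata_response` and the sample metric are illustrative assumptions, not VictoriaMetrics code.

```python
import json

def build_metadata_response(entries):
    """Group (metric, type, help, unit) tuples into the /api/v1/metadata JSON shape."""
    data = {}
    for metric, metric_type, help_text, unit in entries:
        item = {"type": metric_type, "help": help_text, "unit": unit}
        bucket = data.setdefault(metric, [])
        if item not in bucket:  # keep one object per distinct metadata variant
            bucket.append(item)
    return {"status": "success", "data": data}

# Hypothetical sample entry, used only to show the output shape.
response = build_metadata_response([
    ("http_requests_total", "counter", "Total number of HTTP requests.", ""),
])
print(json.dumps(response, indent=2))
```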

More generally, the request is for VictoriaMetrics to support metric metadata throughout its Prometheus-compatible components. In addition to implementing /api/v1/metadata, metadata would need to be scraped from Prometheus targets and also propagated via Prometheus remote-write, using the metadata field added to the Prometheus remote-write protocol in v2.23.0.

Describe the solution you'd like

A full solution for Prometheus metadata would require updates to a few components:

- vmselect implements the /api/v1/metadata endpoint with a response containing at least one metadata object per metric name.
- vmstorage adds support for storing metric metadata.
  - If implementing separate metadata storage is not feasible, a workaround could be to store metadata using a special internal metric name (e.g., _prometheus_metadata) with metadata stored in metric, type, help, unit labels, requiring no change to vmstorage itself.
- vmagent scrapes metadata from Prometheus targets, and adds the metadata field to remote_write requests it sends.
- Both vmagent and vminsert extract the metadata field from remote_write requests they receive.
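
The label-based workaround could look roughly like the Python sketch below: each metadata entry becomes one series of the internal metric, with the metadata carried in labels. The function names and the constant sample value of 1 are assumptions made for illustration only; the label names follow the proposal above.

```python
METADATA_METRIC = "_prometheus_metadata"  # internal metric name suggested in the proposal

def metadata_to_series(metric, metric_type, help_text, unit=""):
    """Encode one metadata entry as a labelled series (value 1 is an assumed placeholder)."""
    labels = {
        "__name__": METADATA_METRIC,
        "metric": metric,
        "type": metric_type,
        "help": help_text,
        "unit": unit,
    }
    return {"labels": labels, "value": 1}

def series_to_metadata(series):
    """Decode a metadata series back into (metric name, metadata dict)."""
    labels = series["labels"]
    if labels.get("__name__") != METADATA_METRIC:
        raise ValueError("not a metadata series")
    return labels["metric"], {key: labels[key] for key in ("type", "help", "unit")}

series = metadata_to_series("process_cpu_seconds_total", "counter", "Total CPU time.", "seconds")
print(series_to_metadata(series))
```

One consequence of this design is that help text becomes a label value, so any limits on label length would apply to it.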

Describe alternatives you've considered

Since VictoriaMetrics currently ignores Prometheus metric metadata, the only alternative would be to proxy this endpoint to another handler which serves metric metadata stored in another system.

Additional context

Since the introduction of the Metric Metadata API, metric metadata has become an increasingly critical part of the Prometheus user experience.

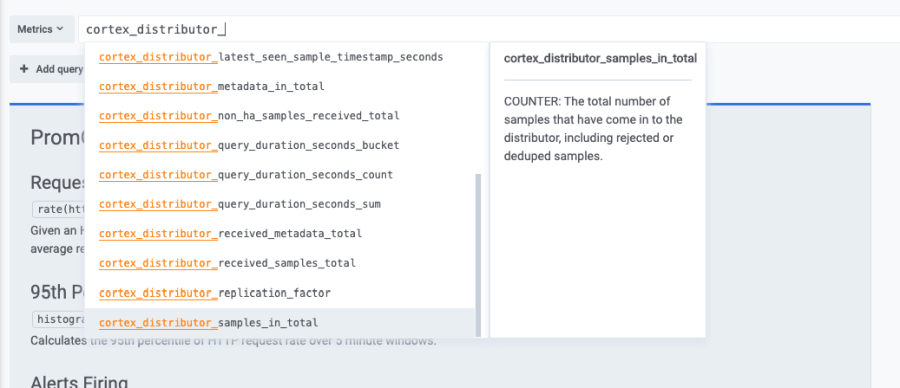

One key use-case related to this feature request is to support Grafana's in-line metrics help text when using VictoriaMetrics as a Prometheus datasource:

I recently encountered another use-case for storing metric metadata: some systems that scrape Prometheus data depend on a metric's declared type (as specified in the TYPE metadata) to handle complex metric types in special ways.

The example I came across is Datadog's Prometheus integration, which parses TYPE to automatically translate histogram metrics into its own internal distribution format. When scraping the /federate endpoint of a VictoriaMetrics cluster to collect metrics into Datadog, this type metadata is not preserved and histograms are incorrectly collected as gauge metrics instead.

Metrics can be explicitly set to histogram type with a type_overrides parameter, but ideally VictoriaMetrics would preserve the type metadata and pass it along for better compatibility with integrations.
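
To illustrate what is lost, here is a small Python sketch of how a consumer might read the `# TYPE` and `# HELP` comment lines from the Prometheus text exposition format. It is a simplified assumption of such a parser (it does not handle escaping in help text) and is not Datadog's or VictoriaMetrics' actual code; the sample payload is made up.

```python
def parse_metadata(text):
    """Collect TYPE and HELP comments per metric family from exposition text."""
    meta = {}
    for line in text.splitlines():
        parts = line.split(None, 3)
        # Metadata lines look like: "# TYPE <name> <type>" or "# HELP <name> <text>".
        if len(parts) < 3 or parts[0] != "#" or parts[1] not in ("TYPE", "HELP"):
            continue
        name = parts[2]
        value = parts[3] if len(parts) > 3 else ""
        entry = meta.setdefault(name, {"type": "unknown", "help": ""})
        entry[parts[1].lower()] = value
    return meta

# Hypothetical scrape payload used only for this example.
sample = """\
# HELP request_duration_seconds Request latency.
# TYPE request_duration_seconds histogram
request_duration_seconds_bucket{le="0.1"} 3
request_duration_seconds_sum 0.25
request_duration_seconds_count 3
"""
print(parse_metadata(sample))
```

Without the `# TYPE` line, the same parser reports the family as `unknown`, which is the situation a consumer of an endpoint without metadata ends up in.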

The related feature request - https://github.com/VictoriaMetrics/VictoriaMetrics/issues/643

I think VM should start supporting metadata for the following reasons:

- It better aligns with the OpenTelemetry specification for time series, which suggests specifying measurement units in metadata instead of in the metric name.

- It potentially enables compatibility with stuff like MCP servers or other intelligent assistants that could appear in the future.

The two main points outlined in https://github.com/VictoriaMetrics/VictoriaMetrics/issues/643#issuecomment-662443375 seem to be outdated:

- "VictoriaMetrics supports many other data ingestion protocols, which have no such concepts as targets and metrics' metadata." - OpenTelemetry is about to replace all other ingestion protocols and it supports metadata.

- "The contents of the /api/v1/targets/metadata is mostly identical to the contents of the /metrics pages scraped from targets." - I believe the usefulness of this info will increase in the future to address the needs of AI assistants.

My design proposal for VictoriaMetrics to start supporting metadata is the following.

Delivery. OpenTelemetry. OpenTelemetry protocol supports sending metadata and has it outlined in specification - see https://opentelemetry.io/docs/specs/otel/metrics/data-model/#timeseries-model. We should investigate whether this metadata is compatible with Prometheus metrics metadata before moving further.

Delivery. Prometheus Remote Write. In Prometheus, metadata is exposed per metric family and is available during scraping. However, Prometheus Remote Write 1.0 ignores metadata when sending. We can update the Prometheus Remote Write protocol on the vmagent side, so that it collects and sends metadata together with the samples payload. This is more than doable. However, all other agents that use Prometheus Remote Write 1.0 won't send the metadata. VM components do not support Prometheus Remote Write 2.0 (which has metadata support), as it seems to provide no benefits:

- its compression is worse than vmagent remote write compression

- VM doesn't support native histograms, which are one of the main features of the protocol update.

By default, metadata parsing should be disabled.

Storage. To make it simple, we can store metadata info in-memory in a similar way to how Prometheus does it. Metric names are usually very limited in cardinality, so holding them in memory shouldn't cause excessive memory usage.

We can ignore persistence of metadata for simplicity's sake, as it is expected to be quickly repopulated by new incoming writes.

The in-memory cache should be protected so that it:

- Does not consume more than 1% (open to discussion) of memory. If the limit is reached, new entries should be ignored. Storage should warn about capacity depletion via metrics and log messages.

- Ignores metadata that is too long.

By default, in-memory storage for metadata should be disabled.
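
A minimal Python sketch of such a bounded cache follows. The class name, the counters, and the way entry size is estimated (character counts rather than real memory usage) are all assumptions for illustration; a real implementation would derive the budget from available memory and export the counters as metrics.

```python
class MetadataCache:
    """In-memory metadata store with a total size budget and a per-entry length limit."""

    def __init__(self, max_bytes, max_entry_len):
        self.max_bytes = max_bytes
        self.max_entry_len = max_entry_len
        self.entries = {}           # metric name -> (type, help, unit)
        self.used = 0               # approximate size of all stored entries
        self.rejected_full = 0      # would be exposed as a metric and logged
        self.rejected_too_long = 0

    @staticmethod
    def _size(metric, entry):
        return len(metric) + sum(len(field) for field in entry)

    def put(self, metric, metric_type, help_text, unit=""):
        entry = (metric_type, help_text, unit)
        size = self._size(metric, entry)
        if size > self.max_entry_len:
            self.rejected_too_long += 1  # ignore metadata that is too long
            return False
        old = self.entries.get(metric)
        old_size = self._size(metric, old) if old is not None else 0
        if self.used - old_size + size > self.max_bytes:
            self.rejected_full += 1      # limit reached: ignore the new entry
            return False
        self.entries[metric] = entry
        self.used += size - old_size
        return True

    def get(self, metric):
        return self.entries.get(metric)

cache = MetadataCache(max_bytes=40, max_entry_len=30)
cache.put("a", "gauge", "help")
print(cache.get("a"), cache.used)
```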

Read API.

We should start supporting the Prometheus Metric Metadata API at /api/v1/metadata on vmselect. On request, this API will consult the in-memory cache on the vmstorage side, merge the responses, and return the result to the user.

Open questions.

- Relabeling could change metric names. Should we account for that when sending metadata? Proper handling of such a situation could have additional compute costs.

- Even if VM supports metadata, clients that use Prometheus Remote Write 1.0 won't be able to send metadata anyway. So it will only work with otel or vmagent. Is it worth it?

@hagen1778

> We should investigate whether this metadata is compatible with Prometheus metrics metadata before moving further.

As I understand it, the metadata we currently want to display and store are type, unit, and description/help. They are not identical in OTel and Prometheus, but they are similar and can be translated into each other.
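
A rough Python sketch of such a translation is shown below. The type mapping follows my understanding of the OpenTelemetry Prometheus compatibility guidance (monotonic sums become counters, non-monotonic sums become gauges); the function names and the lowercase data type identifiers are assumptions. The sketch passes the unit through unchanged and does not rename metrics, both of which a real translation would need to consider.

```python
def otel_to_prometheus_type(data_type, is_monotonic=False):
    """Map an OTel metric data type to a Prometheus metadata type."""
    if data_type == "sum":
        return "counter" if is_monotonic else "gauge"
    direct = {"gauge": "gauge", "histogram": "histogram", "summary": "summary"}
    return direct.get(data_type, "unknown")

def translate_metadata(name, description, unit, data_type, is_monotonic=False):
    """Build a Prometheus-style metadata record from OTel metric fields."""
    return {
        "metric": name,
        "type": otel_to_prometheus_type(data_type, is_monotonic),
        "help": description,  # OTel "description" plays the role of Prometheus HELP
        "unit": unit,         # passed through as-is in this sketch
    }

print(translate_metadata("http_server_requests", "Handled requests.", "1", "sum", is_monotonic=True))
```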

> VM components do not support Prometheus Remote Write 2.0 (that has metadata support)

I think we will need to support RW2.0 in the future. Although RW2.0 performs worse than vmagent remote write, it still offers performance improvements over RW1.0 and will be adopted by Prometheus 3.0 users. The Prometheus remote write exporter in the OTel Collector will also support RW2.0, so users can easily switch to it.

> Read API. We should start supporting Prometheus Metric Metadata API at /api/v1/metadata on vmselect. This API will consult the in-memory cache on vmstorage side, merge response and return to the user on request.

When merging, if there are multiple different metadata entries for one metric family from different vmstorage nodes (or from the same storage node due to a schema change, or from multiple applications exposing the same metric with different metadata), should we display them all or only the latest ingested? I guess we should keep it simple and display them all (possibly with a warning), as we don't want to attach a timestamp to the metadata and it can exist on multiple nodes.
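
The "display them all" option can be sketched in Python as a merge that keeps every distinct metadata object per metric and drops only exact duplicates. The function name and the per-node response shape (metric name mapped to a list of metadata objects) are assumptions for illustration.

```python
def merge_metadata(node_responses):
    """Merge per-node metadata maps, keeping all distinct variants per metric."""
    merged = {}
    for response in node_responses:
        for metric, items in response.items():
            bucket = merged.setdefault(metric, [])
            for item in items:
                if item not in bucket:  # drop exact duplicates only
                    bucket.append(item)
    return merged

# Hypothetical responses from two storage nodes with conflicting help text.
node_a = {"up": [{"type": "gauge", "help": "Target is up.", "unit": ""}]}
node_b = {"up": [{"type": "gauge", "help": "Target is up.", "unit": ""},
                 {"type": "gauge", "help": "1 if the scrape succeeded.", "unit": ""}]}
print(merge_metadata([node_a, node_b]))
```

Since no timestamps are involved, the order of variants simply reflects the order in which node responses are processed.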

> Relabeling could change metric names. Should we account for that when sending metadata? Proper handling of such a situation could have additional compute costs.

I'd say no, metadata shouldn't be changed after being scraped from the target.

> Even if VM supports metadata, clients that use Prometheus Remote Write 1.0 won't be able to send metadata anyway. So it will only work with otel or vmagent. Is it worth it?

I think yes, metadata can already be sent via OTLP and via the Prometheus remote write exporter in the OTel Collector with RW1.0:

> send_metadata: If set to true, prometheus metadata will be generated and sent. Default: false. This option is ignored when using PRW 2.0, which always includes metadata.

And besides supporting metadata in VM, we should also enhance vmagent to parse and send metadata to remote destinations.

> Even if VM supports metadata, clients that use Prometheus Remote Write 1.0 won't be able to send metadata anyway. So it will only work with otel or vmagent. Is it worth it?

It's actually a blocker. The majority of clients won't be able to forward metadata.

> And besides supporting metadata in VM, we should also enhance vmagent to parse and send metadata to remote destinations.

It'll have a performance cost and makes no sense without Prometheus Remote Write 2.0 or a proprietary extension to vmagent remote write.

Maybe we should look at this problem from a different angle? What if vmselect could load metadata from a statically defined file and keep it in memory? The help information for the majority of metric names will never change, so it only requires generating this file and attaching it to the vmselect nodes.
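
As a sketch of that idea in Python: the loader below reads a JSON file whose layout mirrors the `data` object of /api/v1/metadata and keeps it in memory. The file format, the file name, and the function name are all hypothetical; no such file format is defined in this thread.

```python
import json
import os
import tempfile

def load_static_metadata(path):
    """Load metric metadata from a JSON file into memory, filling in missing fields."""
    with open(path, encoding="utf-8") as f:
        raw = json.load(f)
    metadata = {}
    for metric, items in raw.items():
        metadata[metric] = [
            {"type": item.get("type", "unknown"),
             "help": item.get("help", ""),
             "unit": item.get("unit", "")}
            for item in items
        ]
    return metadata

# Write a hypothetical metadata file and load it back.
with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "metadata.json")
    with open(path, "w", encoding="utf-8") as f:
        json.dump({"up": [{"type": "gauge", "help": "Whether the target is up."}]}, f)
    static_metadata = load_static_metadata(path)

print(static_metadata["up"])
```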

> It's actually a blocker. The majority of clients won't be able to forward metadata.

I disagree. We should expect the majority of clients to be either VM software or software that uses the otel protocol.

> It'll have a performance cost and makes no sense without Prometheus Remote Write 2.0 or a proprietary extension to vmagent remote write.

Sure, it will. But this could be behind a feature flag, and I don't expect the performance penalty to be high. The RW protocol uses protobuf, so we can just add a new field to it without losing compatibility.

> What if vmselect could load metadata from a statically defined file and keep it in memory?

That could make sense if it were straightforward and convenient for users. What's your understanding of such a procedure?

> Sure, it will. But this could be behind a feature flag, and I don't expect the performance penalty to be high. The RW protocol uses protobuf, so we can just add a new field to it without losing compatibility.

The actual change is more complicated. First, it requires a flag for metadata scraping and attaching it to the remoteWrite request. Adding a proprietary extension to the Prometheus remote write protocol could make it incompatible (if the Prometheus team adds a new field that conflicts with ours).

The next change would be upgrading the RPC protocol version for vminsert->vmstorage communication. It's painful for users.

And a new flag for storage, which is not a problem at all. But to work properly, the flags need to be set on both the collector and the storage.

> That could make sense if it were straightforward and convenient for users. What's your understanding of such a procedure?

It's a downside of such a feature. It could work if there were some kind of public registry with HELP and TYPE meta information for Prometheus metrics.

> Adding a proprietary extension to the Prometheus remote write protocol could make it incompatible (if the Prometheus team adds a new field that conflicts with ours).

As I noted in the original issue description, the upstream Prometheus remote write protocol has supported metric metadata since v2.23.0 (2020).

Although metadata is only officially part of the Remote Write 2.0 specification (see .proto), it has been given a reserved field in the Remote Write 1.x spec, and has been implemented by Prometheus (see .proto). So implementing the same Prometheus-compatible extension to RW1.0 would be entirely possible and wouldn't introduce any future compatibility issues, since it's already well-defined.

Thanks for the update, @wjordan. This feature is already in progress.

Support of metadata scraping and forwarding was added in release v1.124.0:

> FEATURE: vmagent: add -enableMetadata command-line flag to allow sending metadata to the configured -remoteWrite.url. Metadata can be scraped from targets, received via VictoriaMetrics remote write, Prometheus remote write v1 or OpenTelemetry protocol. See #2974.

This feature has been included in v1.130.0.