AutoGPT

crashes with error when using GPT-4-32k model in azure.

Duplicates

- [X] I have searched the existing issues

Steps to reproduce 🕹

While using the GPT-4-32k model (yes, I do have access to it), after entering my 5th "Goal" during the initial stage, I get this error message:

Current behavior 😯

Goal 5: this the goal text 5 blah...tiktok.get_encoding to explicitly get the tokeniser you expect.'

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "C:\Users\Brentf\source\repos\auto-gpt\scripts\main.py", line 441, in

Expected behavior 🤔

expected = no error

Your prompt 📝

# Paste your prompt here

while using the GPT-4-32k model (yes, i do have access to it)

Now you have to tell, how Is it? When does it excel and when is it barely better? On which tasks did you try it?

Edit: (sorry, I misread your question.) It is just like GPT-4 and GPT-4-0314, only with a MUCH bigger token limit. Pasting a huge script in is nice.

If I re-run it, it is the same error, however it DOES bring back my AI name and goals. I was thinking maybe auto-GPT didn't have GPT-4-32k as a model option and maybe caused it to crash?
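The "tokeniser" text in the error matches the message tiktoken raises (as a `KeyError`) when `encoding_for_model` cannot map a model name to an encoding, so the guess about the model name is plausible. A minimal sketch of a fallback, assuming the GPT-4 family uses the `cl100k_base` encoding and that tiktoken's model table uses lowercase names; `normalize_model_name` and `count_tokens` are hypothetical helpers, not Auto-GPT code:

```python
DEFAULT_ENCODING = "cl100k_base"  # assumption: encoding used by the GPT-4 family


def normalize_model_name(model: str) -> str:
    """Lowercase and trim a model name such as 'GPT-4-32k' before lookup."""
    return model.strip().lower()


def count_tokens(text: str, model: str) -> int:
    """Count tokens, falling back to DEFAULT_ENCODING for unknown model names."""
    import tiktoken  # imported lazily so the helper above works without it

    try:
        encoding = tiktoken.encoding_for_model(normalize_model_name(model))
    except KeyError:
        # tiktoken could not map the name (for example a custom deployment name)
        encoding = tiktoken.get_encoding(DEFAULT_ENCODING)
    return len(encoding.encode(text))
```

With this shape, a deployment called `GPT-4-32k` or `chatdawg` would still get a token count instead of crashing during goal entry.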

I have the same thing, and it's because it's tripping content filters. Azure content filters are hardcore.

And yes the 32k token model works fine except when it trips content filters.
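To tell a content-filter rejection apart from a configuration error, it can help to inspect the response body. A rough sketch, assuming Azure reports a filtered prompt with `error.code` set to `content_filter` and a filtered completion with `finish_reason` set to `content_filter`; the function names are hypothetical and the field names should be checked against a real response:

```python
def is_filtered_request(error_body: dict) -> bool:
    """True if an error payload says the prompt was blocked by the content filter."""
    error = error_body.get("error") or {}
    return error.get("code") == "content_filter"


def is_filtered_completion(response_body: dict) -> bool:
    """True if any returned choice was cut off by the content filter."""
    choices = response_body.get("choices") or []
    return any(choice.get("finish_reason") == "content_filter" for choice in choices)
```

Anything else (for example a "deployment not found" style error) points back at the deployment name or API version rather than the filter.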

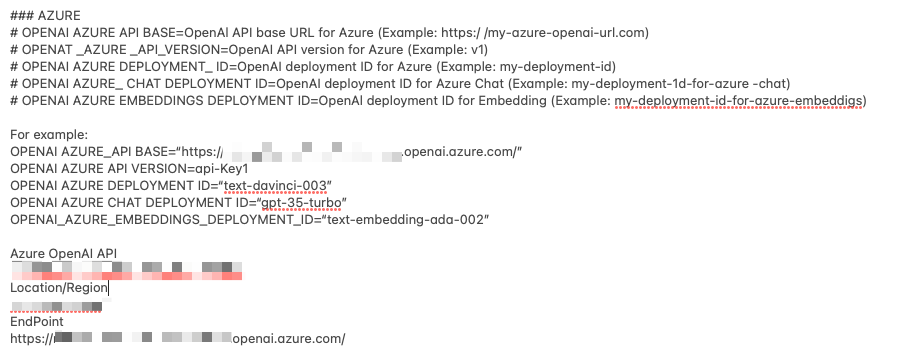

I read too fast. You need to put in the name of the model deployment you created in Azure, not gpt4. In addition, go to the Azure studio and copy and paste the API version. It's not v1. It's 2023-03-15-preview for the 32k model.
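Both values end up in the request URL, which is why a wrong deployment name or API version fails even with a valid key. A small sketch of how the Azure OpenAI chat completions endpoint is assembled, assuming the documented `/openai/deployments/{name}/chat/completions?api-version=...` layout; `chat_completions_url` and the `my-gpt4-32k` deployment name are made up for illustration:

```python
from urllib.parse import quote, urlencode


def chat_completions_url(api_base: str, deployment_name: str, api_version: str) -> str:
    """Build the chat completions URL for an Azure OpenAI deployment."""
    base = api_base.rstrip("/")  # tolerate a trailing slash in the configured base
    deployment = quote(deployment_name, safe="")  # deployment name, not the model name
    query = urlencode({"api-version": api_version})
    return f"{base}/openai/deployments/{deployment}/chat/completions?{query}"


print(chat_completions_url("https://YOURS.openai.azure.com/", "my-gpt4-32k", "2023-03-15-preview"))
```

Pasting the printed URL next to the one shown in the Azure portal is a quick way to spot a typo in either value.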

OPENAI_AZURE_API_BASE="https://mentor.openai.azure.com/"
OPENAI_AZURE_API_VERSION="2023-03-15-preview"
OPENAI_AZURE_DEPLOYMENT_ID=GPT-4-32k
OPENAI_AZURE_CHAT_DEPLOYMENT_ID=GPT-4-32k
OPENAI_AZURE_EMBEDDINGS_DEPLOYMENT_ID=text-embedding-ada-002

I already have it as you suggested (the definitions above are from my .env). Any other ideas? Unless I got the ID wrong? I named the deployed model exactly the same as the model name, so the ID should be GPT-4-32K, right?

I have the same thing, and it's because it's tripping content filters. Azure content filters are hardcore.

And yes the 32k token model works fine except when it trips content filters.

I believe it, considering how hard it was to gain access to the damn thing! How did you fix yours?

Your issue is different. Did you rename the azure.yaml.template to azure.yaml?

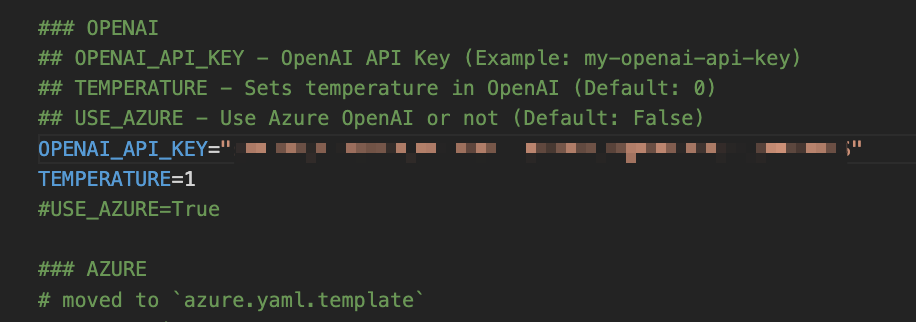

Once you do that, there is another set of variables to change there. Here is what my env looks like:

OPENAI_API_KEY=YOUR AZURE KEY
TEMPERATURE=1
USE_AZURE=True
OPENAI_AZURE_API_BASE="https://cdfds.openai.azure.com" <---Your URL
OPENAI_AZURE_API_VERSION="2023-03-15-preview" <---this is the 32k API
OPENAI_AZURE_DEPLOYMENT_ID="chatgpt" <---This is the name you gave your model
OPENAI_AZURE_CHAT_DEPLOYMENT_ID="gpt4" <---This is the name you gave your model
OPENAI_AZURE_EMBEDDINGS_DEPLOYMENT_ID="cyembed" <---This is the name you gave your model

Then edit the yaml

azure_api_type: "azure" <---this is important as it's specific to the 32k
azure_api_base: "https://YOURS.openai.azure.com" <---your URL
azure_api_version: "2023-03-15-preview" <---your API version
azure_model_map:
    fast_llm_model_deployment_id: "ChatGPT" <---the name you gave your model
    smart_llm_model_deployment_id: "gpt4" <---the name you gave your model
    embedding_model_deployment_id: "cyembed" <---the name you gave your model
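Since the same values have to be set in two places, a quick consistency check can catch a mismatch before launching. A minimal sketch, assuming both files have already been loaded into plain dictionaries (loading is left out); `find_config_mismatches` is a hypothetical helper, and the key names are the ones shown in this thread:

```python
REQUIRED_MAP_KEYS = (
    "fast_llm_model_deployment_id",
    "smart_llm_model_deployment_id",
    "embedding_model_deployment_id",
)


def find_config_mismatches(env: dict, azure_yaml: dict) -> list:
    """Return human-readable problems found between .env and azure.yaml values."""
    problems = []
    if str(env.get("USE_AZURE", "")).strip().lower() != "true":
        problems.append("USE_AZURE is not set to True in .env")
    # Compare the base URLs while ignoring a trailing slash
    env_base = str(env.get("OPENAI_AZURE_API_BASE", "")).rstrip("/")
    yaml_base = str(azure_yaml.get("azure_api_base", "")).rstrip("/")
    if env_base != yaml_base:
        problems.append("API base differs between .env and azure.yaml")
    if env.get("OPENAI_AZURE_API_VERSION") != azure_yaml.get("azure_api_version"):
        problems.append("API version differs between .env and azure.yaml")
    # Every model role needs a deployment name in the model map
    model_map = azure_yaml.get("azure_model_map") or {}
    for key in REQUIRED_MAP_KEYS:
        if not model_map.get(key):
            problems.append(f"azure_model_map.{key} is missing or empty")
    return problems
```

An empty list means the two files agree on the values checked here; it says nothing about whether the deployment names actually exist in the Azure resource.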

Your issue is different. Did you rename the azure.yaml.template to azure.yaml?

yep, sure did.

azure_api_type: "azure" <---this is important as its specific to the 32k

Mine is set as: azure_api_type: azure_ad <---it is the default, as it was in the azure.yaml file. Should I change it?

Yes, change it.
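The two values differ in how the request is authenticated. A sketch of the difference, assuming the usual Azure OpenAI convention that `azure` sends the resource key in an `api-key` header while `azure_ad` sends an Azure AD access token as a bearer token; `auth_headers` is a hypothetical helper, not Auto-GPT code:

```python
def auth_headers(api_type: str, credential: str) -> dict:
    """Return the HTTP auth header expected for the given azure_api_type."""
    if api_type == "azure":
        # credential is key1 or key2 from the Azure OpenAI resource
        return {"api-key": credential}
    if api_type == "azure_ad":
        # credential must be an Azure AD access token, not a resource key
        return {"Authorization": f"Bearer {credential}"}
    raise ValueError(f"unsupported azure_api_type: {api_type!r}")
```

If that assumption holds, leaving the type at `azure_ad` while supplying a resource key would send the key as a bearer token, which the service would reject.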

I did that and a few other things. After a couple of hours of hair-pulling, I gave up on the 32k model for now. The 8k works fine for most of my needs.

I did find it odd that there isn't a separate variable for the azure key vs. the OpenAI key. I would think there would be two separate variables.

Did anyone else successfully deploy the 32k?

Yes my 32k azure works just fine.

Hi @Cytranics, I'm facing a similar issue running Auto-GPT with the Azure API since I cloned the latest Auto-GPT project release.

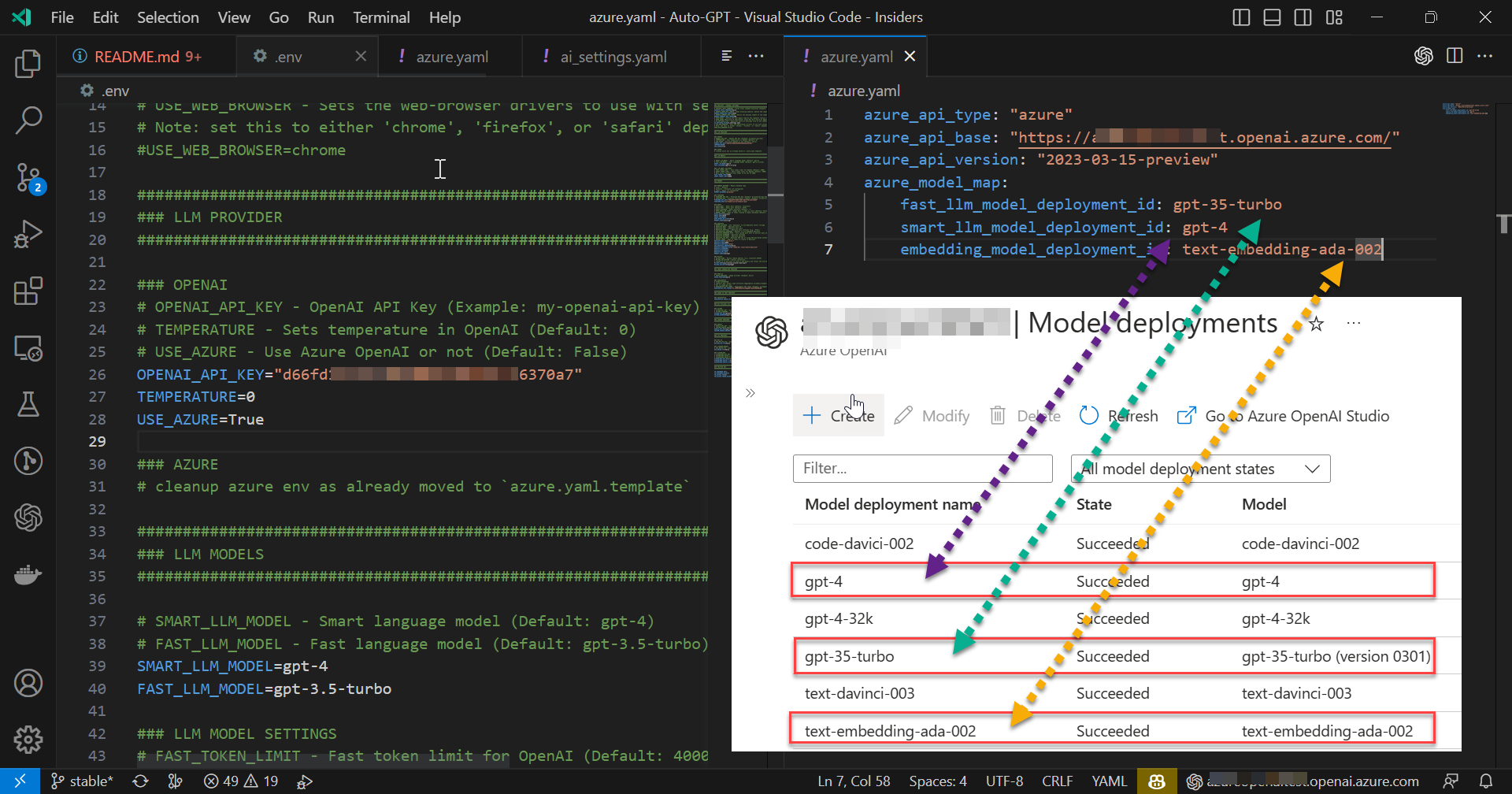

In the screenshot you can see my setup: env file (left side) / azure.yaml (top right side) / my Azure deployment models (bottom right side). Do you see something wrong here? Thanks

Yes it is in-

Your model map is incorrect. The deployment ID is the NAME that you gave the model. When you create a model deployment in Azure you give it a name. The name for my gpt-4 is chatdawg, so I have chatdawg for my smart model ID.
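Put differently, the model map translates a model name into whatever deployment name was chosen in Azure. A minimal sketch of that lookup, assuming the three map keys shown earlier in this thread; `deployment_id_for` and its parameters are hypothetical and only illustrate the idea:

```python
def deployment_id_for(model: str, fast_model: str, smart_model: str,
                      embedding_model: str, azure_model_map: dict) -> str:
    """Translate a model name into the Azure deployment name from the model map."""
    if model == fast_model:
        return azure_model_map["fast_llm_model_deployment_id"]
    if model == smart_model:
        return azure_model_map["smart_llm_model_deployment_id"]
    if model == embedding_model:
        return azure_model_map["embedding_model_deployment_id"]
    raise KeyError(f"no deployment configured for model {model!r}")


model_map = {
    "fast_llm_model_deployment_id": "ChatGPT",
    "smart_llm_model_deployment_id": "chatdawg",  # deployment name, not "gpt-4"
    "embedding_model_deployment_id": "cyembed",
}
print(deployment_id_for("gpt-4", "gpt-3.5-turbo", "gpt-4",
                        "text-embedding-ada-002", model_map))
```

When the deployment happens to be named the same as the model, the map entry and the model name are simply identical strings.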

Hi @Cytranics, thanks, but as you can see from my screenshot above, the "Model deployment name" I gave on my Azure OpenAI resource is gpt-4 (same as the model name), so I don't understand what's wrong in my model map file (azure.yaml) 😢

PS : my issue seems to be the same as https://github.com/Significant-Gravitas/Auto-GPT/issues/2186 which will be fixed with https://github.com/Significant-Gravitas/Auto-GPT/pull/2214

Could be the code. I'll be releasing an actual working autonomous bot here in a day or so, strictly built for GPT-4-32k. Mine wrote an entire html form with a node.js backend in 10 min. https://i.imgur.com/80J6gsW.png

There is no place to enter the Azure OpenAI API key. How would it use the correct service without the API key and/or just using the endpoint URL without any other credentials?

Hi @infinitelyloopy-bt, you have to use 'azure.yaml' for that; it's located in the project root folder.

Thanks Rabbyn. I get that. I have updated the appropriate files accordingly, nonetheless, it doesn't work. Doesn't it NEED access to the API to make API calls to Azure OpenAI the same way it makes API calls using OpenAI?

Screenshots below.

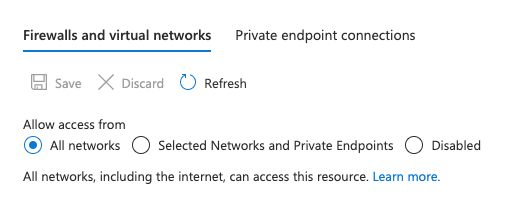

@infinitelyloopy-bt, if the deployment names in your Azure OpenAI resource match the model names, as I can see in your screenshot, then check the network settings of your Azure OpenAI resource: the network access / Azure Firewall rules should allow traffic from the machine running Auto-GPT.

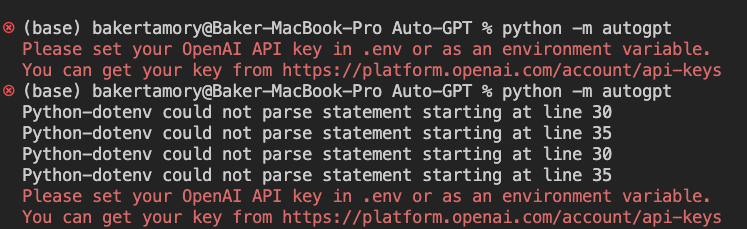

When I put in the Azure OpenAI credentials, this is the error I get.

OK, can you share a screenshot with the model deployment names from your Azure OpenAI resource? (Hide any confidential information, of course.)

[CleanShot 2023-04-26 at 15 53 40](https://user-images.githubusercontent.com/3274593/234481973-c19c0d87-7a6b-4842-8e0d-b21ac15d76a7.png)

[CleanShot 2023-04-26 at 15 54 37](https://user-images.githubusercontent.com/3274593/234482259-fb90222a-18c6-4df3-aa0a-7c6aef84df5c.png)

Do you have a contact we can get in touch with at Microsoft to get access to our test automation suite?

From the MS docs: Azure OpenAI requires registration and is currently only available to approved enterprise customers and partners. Customers who wish to use Azure OpenAI are required to submit a registration form.

Doesn't it NEED access to the API to make API calls to Azure OpenAI the same way it makes API calls using OpenAI?

Yes is the answer, and this is the question I previously had, but I am working with the standard 8K version just fine. I am still trying to get the 32K version working. The only difference between the two is the model name. It works great in the Azure Cognitive playground area.

Remember that you can only use your OpenAI.com key or your Azure OpenAI key, not both. So just make sure you put your Azure key1 or key2 into OPENAI_API_KEY=

Got access to the 8k and can't replicate the issue unfortunately. Will wait until I get 32k to close

This issue has automatically been marked as stale because it has not had any activity in the last 50 days. You can unstale it by commenting or removing the label. Otherwise, this issue will be closed in 10 days.

This issue was closed automatically because it has been stale for 10 days with no activity.