k8s-device-plugin

What is the most recent stable beta and what do your tags mean?

Please clear up some confusion about versioning and your readme. Your readme says to use v0.6.0 of the plugin

kubectl create -f https://raw.githubusercontent.com/NVIDIA/k8s-device-plugin/v0.6.0/nvidia-device-plugin.yml

The nvidia-docs say to use the 1.0.0-beta version (should be 1.0.0-beta6).

kubectl create -f https://raw.githubusercontent.com/NVIDIA/k8s-device-plugin/1.0.0-beta6/nvidia-device-plugin.yml

Which is the most recent stable version?

Your readme also says to use nvidia-docker2, but NVIDIA GPU support has been built into Docker since 19.03, so nvidia-docker2 isn't really needed any longer, is it? Is the nvidia-container-runtime still needed with Docker >= 19.03? The readme is generally confusing.

I'm confused by the branches and tags in your source code.

$ git branch -a

* master

remotes/origin/HEAD -> origin/master

remotes/origin/examples

remotes/origin/gh-pages

remotes/origin/latest-readme

remotes/origin/master

remotes/origin/v1.10

remotes/origin/v1.11

remotes/origin/v1.12

remotes/origin/v1.8

remotes/origin/v1.9

The dates (from the github page) indicate that all v1.x branches are at least 2 years old.

$ git tag

1.0.0-beta

1.0.0-beta1

1.0.0-beta2

1.0.0-beta3

1.0.0-beta4

1.0.0-beta5

1.0.0-beta6

v0.0.0

v0.1.0

v0.2.0

v0.3.0

v0.4.0

v0.5.0

v0.6.0

v0.7.0-rc.1

v0.7.0-rc.2

v0.7.0-rc.3

v0.7.0-rc.4

v0.7.0-rc.5

The dates indicate that v0.7.0-rc.5 is much newer than 1.0.0-beta6. If I want the most recent stable beta should I be using

kubectl create -f https://raw.githubusercontent.com/NVIDIA/k8s-device-plugin/v0.7.0-rc.5/nvidia-device-plugin.yml

instead of 1.0.0-beta6?

A partial answer to your question is here: https://github.com/NVIDIA/k8s-device-plugin#versioning

The gist of it is the following though:

- Before Kubernetes v1.10 the versioning scheme of the device plugin had to match exactly the version of Kubernetes. This is why you see tags for v1.8, v1.9, v1.10, v1.11, v1.12 in the device plugin (we kept this versioning scheme all the way to v1.12 even though it wasn't technically necessary).

- As mentioned here, we eventually moved to SEMVER since the release of the plugin no longer needed to be tied to the version of K8s it was being run on. When we first made this change, the version scheme used was of the format 1.0.0-beta*. This continued from 1.0.0-beta0 - 1.0.0-beta6.

- This versioning scheme had issues, however, because there was no easy way to produce a pre-release of the plugin before releasing a more stable version later on. It left users of the plugin constantly wondering if the latest plugin should be considered production ready or not.

- To address this, we changed the versioning scheme as part of the 1.0.0-beta6 release to move away from the 1.0.0-beta* scheme in favor of what you see today (i.e. v0.x.x). With this new scheme we are now able to produce pre-releases (e.g. v0.7.0-rc.5) that early adopters can consume before the final stable version is released (e.g. v0.7.0).

- So as not to break existing users we kept the labels for 1.0.0-beta - 1.0.0-beta6, but mapped them as follows (1.0.0-beta --> v0.0.0, 1.0.0-beta1 --> v0.1.0, 1.0.0-beta2 --> v0.2.0, 1.0.0-beta3 --> v0.3.0, 1.0.0-beta4 --> v0.4.0, 1.0.0-beta5 --> v0.5.0, 1.0.0-beta6 --> v0.6.0).
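The mapping in the last bullet is regular enough to express in a few lines. The following is only an illustrative sketch, not something shipped with the plugin: the function name `legacy_to_semver` is hypothetical, and it assumes the mapping is exactly the positional one listed above (the bare 1.0.0-beta tag maps to minor version 0, and nothing exists beyond 1.0.0-beta6).

```python
import re

# Assumption: legacy tags have the form "1.0.0-beta" or "1.0.0-betaN", N = 1..6,
# and map positionally onto v0.N.0 as described in the list above.
_LEGACY_TAG = re.compile(r"^1\.0\.0-beta(\d*)$")


def legacy_to_semver(tag: str) -> str:
    """Translate a legacy 1.0.0-beta* tag into its v0.x.0 equivalent.

    Tags that are not in the legacy format are returned unchanged.
    """
    match = _LEGACY_TAG.match(tag)
    if match is None:
        return tag
    # The bare "1.0.0-beta" tag has no numeric suffix and maps to minor 0.
    minor = int(match.group(1) or 0)
    if minor > 6:
        raise ValueError(f"no legacy tag exists beyond 1.0.0-beta6: {tag}")
    return f"v0.{minor}.0"


if __name__ == "__main__":
    for tag in ["1.0.0-beta", "1.0.0-beta3", "1.0.0-beta6", "v0.7.0-rc.5"]:
        print(tag, "->", legacy_to_semver(tag))
```

A tag that is already in the new scheme, such as v0.7.0-rc.5, passes through untouched, so the helper can be applied to a mixed list of tags.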

I hope this clears things up -- at least for how the versioning works, and why the set of tags exist as they do.

To address your other specific questions:

The nvidia-docs say to use the 1.0.0-beta version (should be 1.0.0-beta6).

This is a very outdated document and is no longer linked from anywhere on the official NVIDIA docs page. It must still be cached by Google somehow if you were able to find it. New docs are being produced now and should be updated soon on docs.nvidia.com.

Which is the most recent stable version?

The most recent stable version is v0.6.0. The latest pre-release is v0.7.0-rc.5. It should be promoted to stable (i.e. v0.7.0) in the next couple of weeks.

Your readme also says to use nvidia-docker2, but NVIDIA GPU support has been built into Docker since 19.03, so nvidia-docker2 isn't really needed any longer, is it? Is the nvidia-container-runtime still needed with Docker >= 19.03? The readme is generally confusing.

Yes, nvidia-docker2 is still required. Kubernetes doesn't know how to invoke docker to use the integrated GPU support, so it still relies on the use of the custom nvidia-container-runtime provided by nvidia-docker2. I agree that the README is confusing and should be updated.

If I want the most recent stable beta should I be using v0.7.0-rc.5?

That is the latest pre-release, whereas v0.6.0 is the latest stable release. It is up to you to decide which makes more sense for your use case.
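For anyone who wants to make the stable versus pre-release distinction mechanically from the output of `git tag`, here is a minimal sketch. It assumes tags follow the vMAJOR.MINOR.PATCH or vMAJOR.MINOR.PATCH-rc.N pattern seen in this thread; the helper names `parse_tag` and `latest_tag` are hypothetical, and legacy 1.0.0-beta* tags are simply ignored.

```python
import re

# Assumption: only tags of the form vX.Y.Z or vX.Y.Z-rc.N are considered.
_TAG = re.compile(r"^v(\d+)\.(\d+)\.(\d+)(?:-rc\.(\d+))?$")
_STABLE = float("inf")  # a stable release sorts after every rc of the same version


def parse_tag(tag):
    """Return a sortable key for a tag, or None if the tag is not recognised."""
    match = _TAG.match(tag)
    if match is None:
        return None
    major, minor, patch, rc = match.groups()
    rc_rank = _STABLE if rc is None else int(rc)
    return (int(major), int(minor), int(patch), rc_rank)


def latest_tag(tags, include_prereleases=False):
    """Pick the newest tag, optionally allowing release candidates."""
    candidates = []
    for tag in tags:
        key = parse_tag(tag)
        if key is None:
            continue  # e.g. legacy 1.0.0-beta* tags
        if key[3] != _STABLE and not include_prereleases:
            continue  # skip release candidates unless asked for
        candidates.append((key, tag))
    return max(candidates)[1] if candidates else None


if __name__ == "__main__":
    tags = ["1.0.0-beta6", "v0.5.0", "v0.6.0", "v0.7.0-rc.4", "v0.7.0-rc.5"]
    print("stable:", latest_tag(tags))
    print("pre-release:", latest_tag(tags, include_prereleases=True))
```

With the tags listed in the question, the stable pick is v0.6.0 and the pick including pre-releases is v0.7.0-rc.5, matching the answer above.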

I can confirm that the plugin works with Docker 19.03 with the nvidia-container-runtime installed and does not need nvidia-docker2.

Sure. The only thing that nvidia-docker2 really gives you is the preconfigured daemon.json under /etc/docker/daemon.json. It is just easier to tell people to install nvidia-docker2 than to explain the manual steps they have to do if all they install is nvidia-container-runtime.
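As a rough illustration of the manual step being described, the sketch below prints a daemon.json that registers the NVIDIA runtime with Docker. Treat the details as assumptions rather than the exact file nvidia-docker2 ships: the binary path /usr/bin/nvidia-container-runtime is a common install location but may differ on your system, the function name `nvidia_daemon_config` is hypothetical, and setting "default-runtime" is included because Kubernetes has no way to request a specific runtime from Docker per pod.

```python
import json


def nvidia_daemon_config(set_default=True,
                         runtime_path="/usr/bin/nvidia-container-runtime"):
    """Build a Docker daemon configuration that registers the NVIDIA runtime.

    runtime_path is an assumed install location; adjust it for your system.
    """
    config = {
        "runtimes": {
            "nvidia": {
                "path": runtime_path,
                "runtimeArgs": [],
            }
        }
    }
    if set_default:
        # Make every container use the NVIDIA runtime unless told otherwise.
        config["default-runtime"] = "nvidia"
    return config


if __name__ == "__main__":
    # Review the output before merging it into /etc/docker/daemon.json,
    # then restart the Docker daemon for the change to take effect.
    print(json.dumps(nvidia_daemon_config(), indent=4))
```

The script only prints the configuration instead of writing the file, so an existing daemon.json with other settings is not overwritten by accident.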

Here is a link to a description of how the various components fit together, and what their purpose is: https://github.com/NVIDIA/nvidia-docker/issues/1268#issuecomment-632692949

I want to use MIG (for example, mig-strategy=mix), but the plugin has to be given the parameter --mig-strategy=mix. If I do not use the mix mode as before, can I set the GPU MIG mode (for example, nvidia-smi -mig 1) from inside the device-plugin container that is deployed in k8s? Would running this in the container take effect on the host? Thanks!

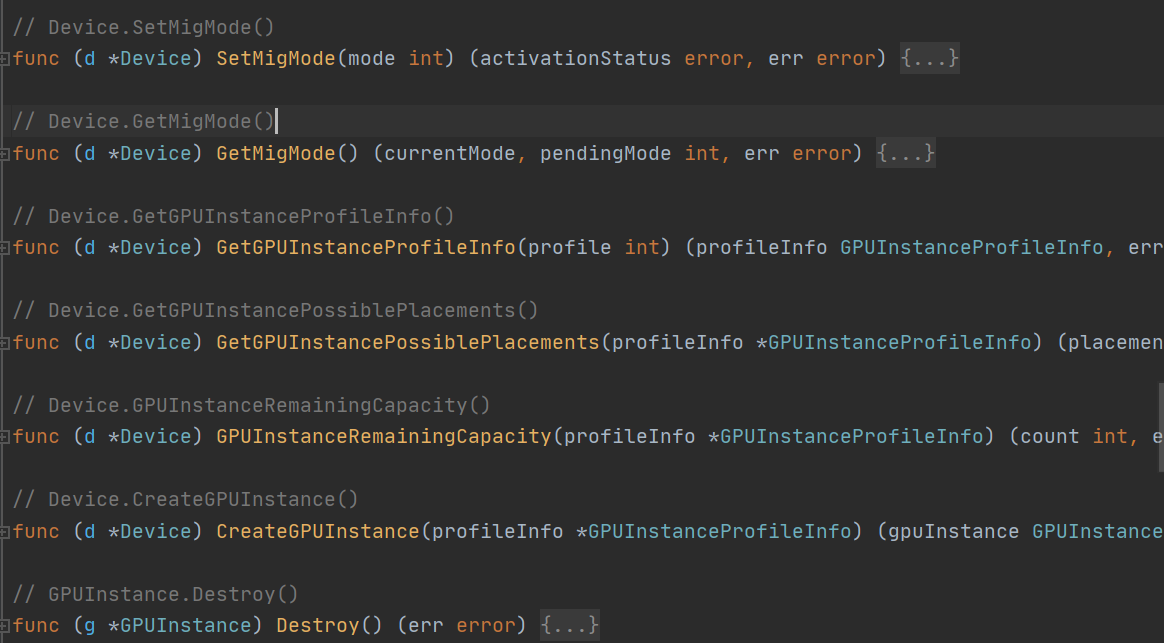

Can I change the GPU mode (none, mig, mix) dynamically from inside the k8s-device-plugin container (not on the host)? I have read the plugin code and found that there are interfaces in mig.go (vendor\github.com\NVIDIA\gpu-monitoring-tools\bindings\go\nvml\mig.go):

Can I use them (for example, Destroy(), SetMigMode...) in the container to configure the vGPUs of an A100? And how?

Thanks!

Original question answered. @qingshanyinyin please open a separate issue if your question is still relevant.