stable-diffusion-webui

stable-diffusion-webui copied to clipboard

stable-diffusion-webui copied to clipboard

Add support for RunwayML In-painting Model

Apologies about the mess, I wrecked my repo trying to merge changes from upstream. It was easier to just make a new one.

This commit adds support for the v1.5 in-painting model located here: https://github.com/runwayml/stable-diffusion

We can use this model in all modes, txt2img and img2img, by just selecting the mask for each mode.

Working

- K-Diffusion img2img, txt2img, inpainting

- DDIM txt2img, img2img, inpainting

- Switching models between in-painting and regular v1.4

TODO

- Test all sorts of variations, image sizes, etc.

@random-thoughtss For creating masks when necessary - if my understanding that all these masks are necessary, at all modes and times, for this model is correct - wouldn't a simple check against the checkpoint filename suffice?

Amazing work, by the way.

@C43H66N12O12S2 I decided to just check for the attention method. Sadly it looks like a lot of the stable-diffusion code assumes that there is something in the cond so setting it to None will require a couple of changes. For now I'll just initialize a zero latent tensor, they generally don't take up that much space and it skips the image encoder call.

The miniscule amount of VRAM zero tensors consume is no big deal. Thanks for adding support for hires fix. IMO this pr is ready for merge. @AUTOMATIC1111

For anybody interested, this model does exceptionally well with NAI models.

Thank you for the PR. One thing I noticed: setting Inpaint at full resolution to True breaks inpainting.

Thank you for the PR. One thing I noticed: setting

Inpaint at full resolutiontoTruebreaks inpainting.

@genesst I could not reproduce on my end.

What is the error? What model and sampler are you using?

how could you add support for other models? I suspect just merging the models might no be enough?

PLZ AUTOMATIC1111 CHECK THIS ONE OUT ?

Can we use this before committed to main branch?

To use this do you switch models like any other model before ?

The miniscule amount of VRAM zero tensors consume is no big deal. Thanks for adding support for hires fix. IMO this pr is ready for merge. @AUTOMATIC1111

How much VRAM are we talking about here? Some people have very slim margins between their maximum possible rendering resolution and OOM errors.

Tested it. It works amazingly well. For those who are wondering how to use it

- first switch to this pull request in git.

- download runwayml model and place it in the same place you put SD model file.

- select this inpainting model from dropdown at top left corner of the page in web interface

- enjoy inpainting

@ProGamerGov It would take up a little under 81KB for the standard sized image at fp32. However, looking at it, it shouldn't care what the size of the dummy latent image is, just that the batch size is correct. It should be enough to make a dummy 1x1 image, meaning it'll only take up an extra 20 bytes per image at fp32.

@ProGamerGov It would take up a little under 81KB for the standard sized image at fp32. However, looking at it, it shouldn't care what the size of the dummy latent image is, just that the batch size is correct. It should be enough to make a dummy 1x1 image, meaning it'll only take up an extra 20 bytes per image at fp32.

That sounds fine then!

how could you add support for other models? I suspect just merging the models might no be enough?

@ryukra The weights have a completely different first layer, so you can't just merge models together. I guess you could try just dropping the extra 5 inputs from the input layer's weights, no idea how the network would react.

It looks like they have some training code using diffusers available here: https://huggingface.co/runwayml/stable-diffusion-inpainting but the "generate synthetic masks and in 25% mask everything" pipeline is not implemented anywhere.

I've downloaded and selected the runwayml model, but I'm still getting bad results. Could someone please explain their process for getting good in/out painting?

- Are you using 1 of the custom scripts?

- Are you manually modifying your input images to have transparency / are you creating custom masks?

- What dimensions & generation settings are you using?

A short video would be super helpful!

this PR breaks all other models in DDIM mode

File "stable-diffusion-webui/modules/sd_samplers.py", line 240, in <lambda>

samples_ddim = self.launch_sampling(steps, lambda: self.sampler.sample(S=steps+1, conditioning=conditioning, batch_size=int(x.shape[0]), shape=x[0].shape, verbose=False, unconditional_guidance_scale=p.cfg_scale, unconditional_conditioning=unconditional_conditioning, x_T=x, eta=self.eta)[0])

File "miniconda3/lib/python3.8/site-packages/torch/autograd/grad_mode.py", line 27, in decorate_context

return func(*args, **kwargs)

File "stable-diffusion-webui/repositories/stable-diffusion/ldm/models/diffusion/ddim.py", line 84, in sample

cbs = conditioning[list(conditioning.keys())[0]].shape[0]

AttributeError: 'list' object has no attribute 'shape'

@remixer-dec Took some time to replicate, but it turns out if you never load the in-painting model, the the DDIM methods never get replaced. Made sure to always replace them. Thanks for spotting that!

Should work now even when never loading an in-painting model.

I've noticed whilst trying to produce comparisons that the inpainting model does not work when using the X/Y Plot.

If you specify the inpainting model within an X/Y plot under the "Checkpoint" section, when it gets to that model it does not load it and instead uses the previously used model again. Even the console seems to get confused.

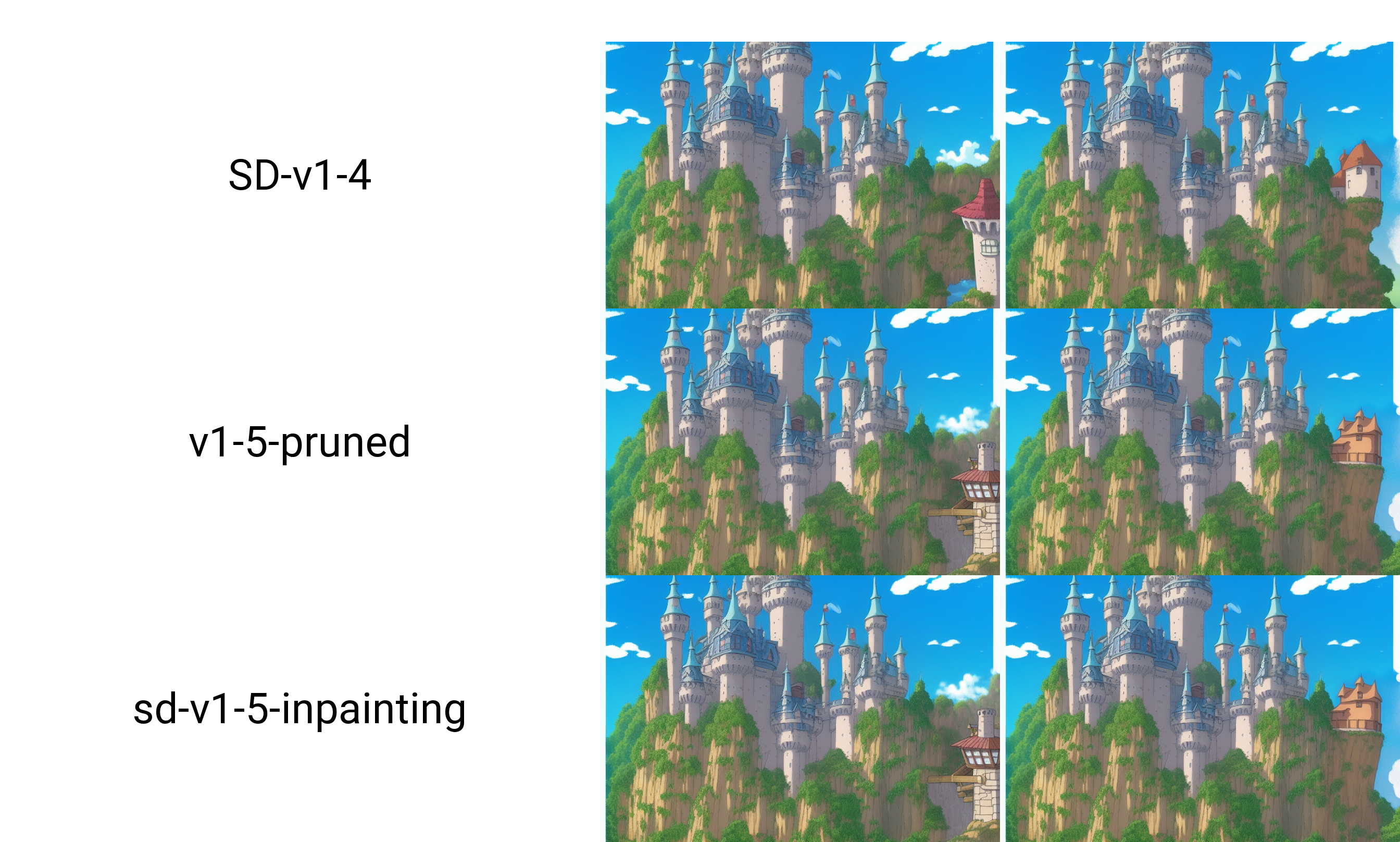

This is an image I inpainted, it was to add an extra tower on the far right of the image:

You can see here that the output from the 1.5 model and the inpainting model are identical, however if you look at the console output.

X/Y plot will create 6 images on a 1x3 grid. (Total steps to process: 240) 100%|██████████████████████████████████████████████████████████████████████████████████| 31/31 [00:05<00:00, 6.01it/s] 100%|██████████████████████████████████████████████████████████████████████████████████| 31/31 [00:05<00:00, 5.78it/s] Loading weights [a9263745] from D:\Code\Stable-Diffusion\AUTOMATIC1111\stable-diffusion-webui\models\Stable-diffusion\v1-5-pruned.ckpt Global Step: 840000 Applying cross attention optimization (Doggettx). Weights loaded. 100%|██████████████████████████████████████████████████████████████████████████████████| 31/31 [00:05<00:00, 5.86it/s] 100%|██████████████████████████████████████████████████████████████████████████████████| 31/31 [00:05<00:00, 5.58it/s] LatentDiffusion: Running in eps-prediction mode███████ | 124/240 [00:41<00:19, 5.82it/s] DiffusionWrapper has 859.52 M params. making attention of type 'vanilla' with 512 in_channels Working with z of shape (1, 4, 32, 32) = 4096 dimensions. making attention of type 'vanilla' with 512 in_channels Loading weights [7460a6fa] from D:\Code\Stable-Diffusion\AUTOMATIC1111\stable-diffusion-webui\models\Stable-diffusion\SD-v1-4.ckpt Global Step: 470000 Applying cross attention optimization (Doggettx). Model loaded. 100%|██████████████████████████████████████████████████████████████████████████████████| 31/31 [00:10<00:00, 2.93it/s] 100%|██████████████████████████████████████████████████████████████████████████████████| 31/31 [00:07<00:00, 4.21it/s] Loading weights [7460a6fa] from D:\Code\Stable-Diffusion\AUTOMATIC1111\stable-diffusion-webui\models\Stable-diffusion\SD-v1-4.ckpt Global Step: 470000 Applying cross attention optimization (Doggettx). Weights loaded.

You can see that it starts the plot, the SD-v1-4 model is already loaded so it does not load anything new, it does 2 images and then loads the v1-5-pruned model. After this it should be selecting the inpainting model, but it goes back to showing the SD-v-1-4 model.

But when compared to the image, it obviously doesn't match up, as the v1-5-pruned images and the sd-v1-5-inpainting models are identical, and the SD-v1-4 are completely different.

Also, if you have the Inpainting model already loaded and then try to do an X/Y Plot with other models, it just ignores those models and only uses the Inpainting model.

I believe it may have something to do with it detecting the inpainting model and using "LatentInpaintDiffusion" instead of the usual "LatentDiffusion"

Example of what happens when using the X/Y Plot whilst the inpainting model is your currently selected model.

Outputs are identical because it's only using the inpainting model, but you can see from the screenshot that it thinks it's using the other models.

X/Y plot will create 2 images on a 2x1 grid. (Total steps to process: 40) LatentInpaintDiffusion: Running in eps-prediction mode DiffusionWrapper has 859.54 M params. making attention of type 'vanilla' with 512 in_channels Working with z of shape (1, 4, 32, 32) = 4096 dimensions. making attention of type 'vanilla' with 512 in_channels Loading weights [3e16efc8] from D:\Code\Stable-Diffusion\AUTOMATIC1111\stable-diffusion-webui\models\Stable-diffusion\sd-v1-5-inpainting.ckpt Global Step: 440000 Applying cross attention optimization (Doggettx). Model loaded. 100%|██████████████████████████████████████████████████████████████████████████████████| 20/20 [00:02<00:00, 9.49it/s] LatentInpaintDiffusion: Running in eps-prediction mode | 19/40 [00:27<00:05, 3.71it/s] DiffusionWrapper has 859.54 M params. making attention of type 'vanilla' with 512 in_channels Working with z of shape (1, 4, 32, 32) = 4096 dimensions. making attention of type 'vanilla' with 512 in_channels Loading weights [3e16efc8] from D:\Code\Stable-Diffusion\AUTOMATIC1111\stable-diffusion-webui\models\Stable-diffusion\sd-v1-5-inpainting.ckpt Global Step: 440000 Applying cross attention optimization (Doggettx). Model loaded. 100%|██████████████████████████████████████████████████████████████████████████████████| 20/20 [00:02<00:00, 9.58it/s] Total progress: 100%|██████████████████████████████████████████████████████████████████| 40/40 [00:50<00:00, 1.25s/it]

There's a slight issue, not sure if it's just me.

While doing inpainting with mask, unmasked area is always processed, with 1 sampling step, what it seems. It's obvious on previews and on resulting image. Funny thing is, color corrected image is correct with those enabled.

UPD: whole image changes slightly with each iteration, not once at the start, as i first thought.

experiment:

UPD: whole image changes slightly with each iteration, not once at the start, as i first thought.

experiment:

There's a slight issue, not sure if it's just me. While doing inpainting with mask, unmasked area is always processed, with 1 sampling step, what it seems. It's obvious on previews and on resulting image. Funny thing is, color corrected image is correct with those enabled.

UPD: whole image changes slightly with each iteration, not once at the start, as i first thought. experiment:

This looks like a very old quirk that was fixed at some point, it was modifying a bit of the rest of the image to blend it better, from what I gather, color correction is just applying processing to the image, so maybe OP's PR is somehow bypassing some crucial fonction

I've noticed whilst trying to produce comparisons that the inpainting model does not work when using the X/Y Plot.

@Arron17 This ended up being a more general bug with xy grid, but the fix is really small. Essentially when loading a different set of weights but the same config, it would update the weights in-place and the sampler would see the new model. When the config changes, the entire model needs to be remade, and the sampler's model wasn't updated. I guess the issue has just never come up before since most models use the default config.

@AUTOMATIC1111 Let me know if this change doesn't belong in this PR.

@AUTOMATIC1111 It looks like RunwayML's 1.5 checkpoint is actually based on the real 1.5 model from Stability AI. So, this isn't just some random model.

Source: https://www.reddit.com/r/StableDiffusion/comments/y91pp7/stable_diffusion_v15/

Were RunwayML the original authors of SD? If so we could very well switch to their repo and get rid of the hijacking code. I initially thought it was just some dude who trained the inpainting model.

Anyway, great work, I did some testing and it all seems to work fine.

CompVis is the original author. They're a research group who were staffed by student research (IIRC) and some RunwayML employees.

Masked Inpainting is broken right now on every model. Non-masked parts of the image are being changed slightly.

Its working fine for me

That is so weird. Are you using color correction?

Do you have the color correction setting selected by any chance?

i'm using it, and it saves both images, look here

wtf So for me the color-corrected image is identical in non-masked area, but the one without color correction is slightly distorted.

Does it happens with images that don't have a complete white bg?